Hybrid Cloud Architecture for Large Enterprises: Strategy, Design & Best Practices

Explore hybrid cloud architecture for large enterprises, including design principles, security, scalability, governance, and integration best practices for modern IT environments.

Introduction

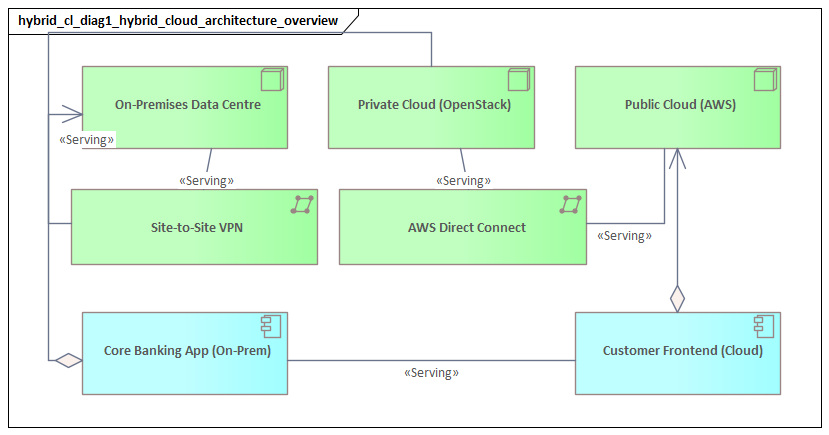

Hybrid cloud has become the default operating model for large enterprises because it mirrors how enterprise technology actually evolves. Very few organizations can move their entire estate to a single public cloud, and just as few benefit from keeping everything in a traditional data center. Most end up running across a mix of legacy platforms, private infrastructure, SaaS, edge environments, and one or more public cloud providers. The architectural task is not merely to connect those environments, but to make them work together as a secure, governable, and operationally coherent whole.

The complexity is driven as much by business conditions as by technology. Regulatory obligations, data residency rules, latency-sensitive workloads, merger activity, long refresh cycles, and existing commercial commitments all influence where systems should run. A core transaction platform may remain on-premises because of performance dependencies or licensing constraints, while digital channels, analytics, and customer-facing services are better suited to elastic cloud platforms. Workload placement therefore becomes a business architecture decision as much as an infrastructure one. A typical example is a payments engine retained in a primary data center for latency and audit reasons, while customer notification services are rebuilt on managed cloud messaging and serverless components.

For that reason, hybrid cloud should be treated first as an operating model and only then as an infrastructure pattern. Enterprises need clear principles for workload placement, identity federation, connectivity, policy enforcement, data management, and operational control across environments. Without those foundations, hybrid cloud quickly turns into a collection of silos with inconsistent tooling, uneven security, limited visibility, and rising cost. The objective is not uniform infrastructure everywhere, but a unified model for how the enterprise designs, governs, and runs technology across different platforms.

A useful way to frame the problem is to separate environment diversity from architectural coherence. Diversity is unavoidable. Large enterprises will continue to run multiple hosting models and service types for the foreseeable future. The real question is whether those environments participate in a shared set of capabilities and standards. In practice, that means standardizing the control plane more than the workload plane. Full application portability is often unnecessary, expensive, and sometimes counterproductive. Policy portability, operational consistency, and reliable integration matter far more.

That shift changes the role of central IT. Rather than acting only as an infrastructure provider, enterprise technology teams become designers of shared capabilities: identity, secure connectivity, secrets management, observability, automation, and policy guardrails. Those services allow application teams to move faster without stepping outside enterprise controls. In mature organizations, the success of hybrid cloud depends less on any single platform than on the strength of these cross-environment capabilities.

This article sets out a practical structure for hybrid cloud architecture in large enterprises. It defines hybrid cloud as a capability-based model, then examines business drivers, design principles, governance, integration, operations, and implementation. The core argument is straightforward: hybrid cloud works when enterprises accept platform diversity but insist on coherence in how systems are governed, connected, secured, and operated.

Defining Hybrid Cloud Architecture in the Enterprise Context

In a large enterprise, hybrid cloud architecture is the deliberate design of applications, platforms, data, and operational controls across multiple environments under a common architectural framework. That definition goes well beyond a simple link between an on-premises data center and a public cloud. The real challenge lies in coordinating different execution environments, service models, ownership boundaries, and risk profiles so the enterprise can deliver business capabilities consistently.

The key distinction, introduced earlier, is between environment diversity and architectural coherence. Enterprises will always run a varied estate: legacy infrastructure, private cloud, containers, colocation, SaaS, edge, and hyperscaler services. Diversity itself is not the problem. Problems begin when each environment develops its own identity model, network assumptions, policy interpretation, delivery process, and operational tooling. At that point, the organization does not have a hybrid cloud architecture; it has several disconnected estates.

A workable enterprise definition of hybrid cloud is therefore capability-based rather than location-based. The architecture should specify which shared capabilities must exist across environments and which can remain platform-specific. Common design domains usually include:

- Workload placement: where applications and data should run based on latency, compliance, dependency patterns, cost, and operational readiness.

- Identity and access: a federated model for workforce, machine, and service identities.

- Connectivity and traffic control: standards for private connectivity, ingress, egress, segmentation, DNS, and service exposure.

- Data architecture: rules for replication, synchronization, lifecycle management, sovereignty, and backup.

- Operations and observability: common expectations for monitoring, logging, incident response, and automation.

- Security and policy enforcement: centralized guardrails with approved implementation patterns per platform.

- Technology lifecycle governance: standards for introducing, reviewing, and retiring platforms and tools.

This framing helps avoid one of the most common mistakes in hybrid programs: treating portability as the main objective. In reality, full workload portability is often costly and overly restrictive. Many systems are not expected to move often, if at all. What matters more is whether they can participate in a shared enterprise model. A workload may remain on a mainframe or use cloud-native managed services and still fit the hybrid architecture if it follows common identity controls, approved integration patterns, enterprise observability, and governed delivery processes.

It also clarifies the relationship between hybrid cloud and multi-cloud. Multi-cloud means using multiple public cloud providers. Hybrid cloud is broader. It includes public cloud, private cloud, traditional infrastructure, SaaS, and edge. An enterprise may be hybrid without being multi-cloud, and multi-cloud without having a coherent hybrid architecture. The decisive question is not how many platforms are in use, but whether they are governed and operated as part of one enterprise model.

For large enterprises, hybrid cloud is best understood as a framework for managing unavoidable diversity. It does not attempt to make every platform identical. Instead, it standardizes where consistency matters most: control, security, integration, and operations. The rest of this article builds on that definition.

Business Drivers and Strategic Benefits for Large Enterprises

Large enterprises adopt hybrid cloud because it aligns with how they actually operate. Business units move at different speeds, legal obligations vary by market, and technology estates reflect years of investment, specialization, and acquisition. A hybrid model allows the enterprise to modernize selectively while retaining control over systems that cannot—or should not—be relocated.

One major driver is business agility with bounded risk. Enterprises want to launch digital products quickly, scale customer-facing services, and adopt managed services without forcing every legacy system through immediate redesign. Hybrid architecture makes that possible by allowing new capabilities to be built in cloud-native environments while core systems remain stable. That only works, however, when there is coherence across environments. New services must integrate with core systems through governed APIs, event-driven patterns, and explicit dependency management rather than informal network coupling.

Another driver is regulatory and jurisdictional complexity. Large organizations often operate across multiple legal regimes, each with different requirements for data location, retention, auditability, and resilience. Hybrid cloud allows architects to separate workload placement, control paths, and data handling so those obligations can be met without duplicating the entire stack in every region. The strategic benefit is not compliance alone. It is also the ability to enter markets, support local operating entities, and adapt to regulatory change without redesigning the whole estate. For example, a bank may keep customer account records in-country on private infrastructure while running fraud analytics in a public cloud region using tokenized or minimized datasets.

Economic flexibility is equally important. Some workloads benefit from elastic cloud consumption; others are better suited to private infrastructure because demand is predictable, utilization is high, or licensing models favor fixed capacity. Hybrid cloud allows placement decisions to reflect economics rather than ideology. That requires a capability-based model from the outset: cost transparency, workload placement criteria, and service consumption standards need to be built into the architecture.

Another benefit is portfolio resilience. Concentration risk now extends beyond physical data centers to cloud regions, providers, managed services, and supply chains. Hybrid cloud gives enterprises more options for distributing critical capabilities across operational domains. Still, resilience has to be designed deliberately. Not every workload needs cross-environment failover. Architects should determine which business services require it, what data consistency model is acceptable, and which recovery procedures are realistic. A manufacturer, for instance, may choose active-active resilience for plant telemetry ingestion but accept delayed recovery for historical reporting platforms.

Hybrid cloud also supports post-merger integration and organizational restructuring. Acquisitions often introduce overlapping platforms, regional hosting models, and inconsistent security standards. A coherent hybrid architecture provides a way to federate identity, standardize observability, connect environments, and expose shared services without forcing immediate consolidation. That reduces disruption while creating a practical path toward later rationalization.

At the deepest level, the benefit is organizational. Hybrid cloud changes central IT from a provider of infrastructure into a provider of shared capabilities and guardrails. When identity, connectivity, observability, policy enforcement, and automation are delivered as reusable enterprise services, local teams can move faster without creating uncontrolled variation. For large enterprises, that balance between decentralization and standardization is often where the real value lies.

Core Architectural Principles and Design Patterns

Because platforms will continue to change, hybrid cloud architecture should rest on a small set of stable principles. Those principles provide continuity across hosting models, organizational boundaries, and technology generations.

1. Design for explicit boundaries

Hybrid environments become fragile when dependencies are buried inside network assumptions, shared databases, or tightly coupled middleware. Systems should interact through explicit interfaces: APIs, asynchronous events, and governed data contracts. That reduces the chance that a change in one environment destabilizes another and makes modernization easier over time.

In practice, this means:

- Favoring service interfaces over direct infrastructure dependency

- Isolating state wherever possible

- Documenting trust boundaries and failure assumptions

- Avoiding cross-environment database coupling

This principle directly supports business agility. Innovation at the edge is safer when core systems are protected by clear interaction boundaries.

2. Centralize policy, decentralize execution

Large enterprises cannot scale if one team manually approves every platform choice. Mandatory controls should be defined centrally through standards, reference architectures, and policy-as-code, while implementation is handled by platform and application teams within approved patterns.

This principle matters most for:

- Identity and access

- Network segmentation

- Encryption and secrets handling

- Deployment controls

- Logging and evidence collection

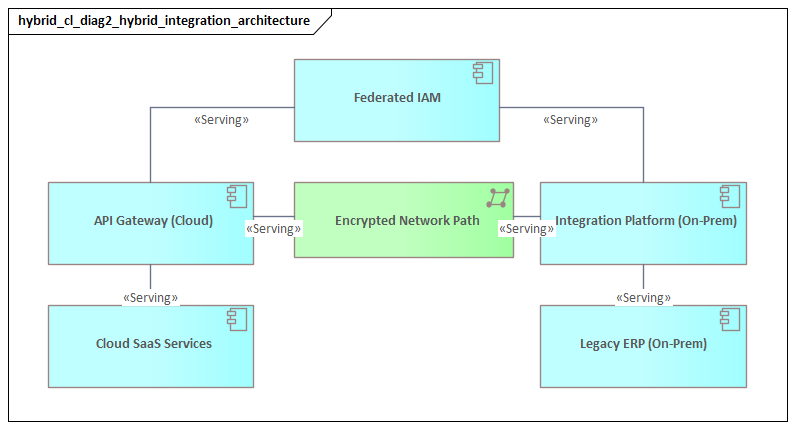

The goal is not identical implementation everywhere, but verifiable compliance with the same enterprise rules. A realistic example is an architecture board defining one enterprise IAM pattern—federated workforce access, short-lived privileged access, and managed workload identities—while allowing different technical implementations for cloud landing zones and older virtualized platforms.
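As a rough illustration of centrally defined rules being checked against resources from different environments, here is a minimal policy-as-code sketch in Python. The `Resource` fields, the rule names, and the 24-hour credential limit are assumptions made for the example, not enterprise standards or any particular tool's schema.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Resource:
    """Hypothetical, simplified description of a deployed resource."""
    name: str
    environment: str              # e.g. "public-cloud", "on-prem-virtualized"
    encrypted_at_rest: bool
    central_logging: bool
    max_credential_ttl_hours: int


# Central policy: each rule is a name plus a predicate over a resource.
# The 24-hour limit is an illustrative threshold only.
POLICIES: dict[str, Callable[[Resource], bool]] = {
    "encryption-at-rest": lambda r: r.encrypted_at_rest,
    "central-logging": lambda r: r.central_logging,
    "short-lived-credentials": lambda r: r.max_credential_ttl_hours <= 24,
}


def evaluate(resource: Resource) -> list[str]:
    """Return the names of the policies the resource violates."""
    return [name for name, check in POLICIES.items() if not check(resource)]


cloud_db = Resource("orders-db", "public-cloud", True, True, 1)
legacy_vm = Resource("billing-vm", "on-prem-virtualized", True, False, 720)

print(evaluate(cloud_db))   # []
print(evaluate(legacy_vm))  # ['central-logging', 'short-lived-credentials']
```

The same rules apply to both resources even though the way each environment satisfies them would differ, which is the point of defining policy once and executing it locally.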

3. Standardize the control plane more than the workload plane

Full workload portability is rarely the right objective. Enterprises usually get better results by standardizing control-plane capabilities instead: identity federation, CI/CD workflows, service catalogs, observability, configuration baselines, and policy enforcement. Workloads can then use platform-specific services where there is a clear business or technical advantage.

That approach preserves cloud-native value without sacrificing enterprise control.

4. Assume partial failure as normal

Hybrid architectures span different networks, providers, and operational teams. Links fail, dependencies degrade, and managed services behave differently under stress. Resilient systems should use local caching, queue-based decoupling, graceful degradation, and environment-specific recovery procedures. The architecture should make failure visible and manageable rather than pretending it can be designed away.
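One way to picture local caching with graceful degradation is a wrapper that serves the last known value when a cross-environment call fails. The sketch below is a simplified assumption of how that could look; `CachedDependency`, `fetch_rate`, and the 300-second staleness limit are hypothetical names and values.

```python
import time
from typing import Callable, Optional


class CachedDependency:
    """Wraps a remote call and serves the last good value when it fails."""

    def __init__(self, fetch: Callable[[str], str], max_stale_seconds: float):
        self._fetch = fetch
        self._max_stale = max_stale_seconds
        self._cache: dict[str, tuple[float, str]] = {}

    def get(self, key: str) -> tuple[Optional[str], str]:
        """Return (value, status); status is 'fresh', 'stale', or 'unavailable'."""
        try:
            value = self._fetch(key)
            self._cache[key] = (time.monotonic(), value)
            return value, "fresh"
        except ConnectionError:
            cached = self._cache.get(key)
            if cached and time.monotonic() - cached[0] <= self._max_stale:
                return cached[1], "stale"
            return None, "unavailable"


# Simulated cross-site dependency whose link can be switched off.
link = {"up": True}


def fetch_rate(currency: str) -> str:
    if not link["up"]:
        raise ConnectionError("cross-site link down")
    return f"{currency}:1.08"


rates = CachedDependency(fetch_rate, max_stale_seconds=300)
first = rates.get("EUR")    # link is up, so the value is fetched and cached
link["up"] = False
second = rates.get("EUR")   # link is down, so the cached value is served
third = rates.get("GBP")    # never cached, so reported as unavailable
```

Returning an explicit status alongside the value keeps the failure visible to callers, who can then decide whether a stale answer is acceptable for their use case.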

Common design patterns

Several design patterns support these principles.

Hub-and-spoke connectivity is common in enterprise hybrid models. Shared services such as identity, DNS, logging, inspection, and certificate management are anchored in controlled hubs, while application environments are segmented into spokes or landing zones. This improves governance and limits uncontrolled lateral movement, though architects need to avoid turning the hub into a performance or organizational bottleneck.

The strangler pattern is a practical modernization approach. New services are built around a stable core system and gradually take over specific capabilities through APIs and event streams rather than through immediate replacement. An insurer, for example, might leave policy administration on a legacy platform while moving quote generation and customer self-service into cloud-native services that consume policy events.
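The routing idea behind the strangler pattern can be sketched as a facade that sends migrated capabilities to new services and everything else to the legacy core. The handler names and capability labels below are hypothetical, chosen to echo the insurer example.

```python
from typing import Callable

Handler = Callable[[dict], str]


def legacy_policy_admin(request: dict) -> str:
    """Stand-in for the stable legacy platform."""
    return f"legacy handled {request['capability']}"


def cloud_quote_service(request: dict) -> str:
    """Stand-in for a new cloud-native service."""
    return f"cloud handled {request['capability']}"


class StranglerFacade:
    """Routes each capability to its new handler once migrated, else to legacy."""

    def __init__(self, legacy: Handler):
        self._legacy = legacy
        self._migrated: dict[str, Handler] = {}

    def migrate(self, capability: str, handler: Handler) -> None:
        self._migrated[capability] = handler

    def handle(self, request: dict) -> str:
        handler = self._migrated.get(request["capability"], self._legacy)
        return handler(request)


facade = StranglerFacade(legacy_policy_admin)
facade.migrate("quote", cloud_quote_service)

print(facade.handle({"capability": "quote"}))   # cloud handled quote
print(facade.handle({"capability": "claims"}))  # legacy handled claims
```

Because callers only see the facade, capabilities can move one at a time without consumers needing to know which platform serves them.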

Distributed data with governed synchronization is another important pattern. Data often needs to exist in multiple environments, but replication has to follow explicit ownership, freshness, and compliance rules. Without that discipline, hybrid estates accumulate conflicting copies and unclear authority.

These principles matter because every later concern—governance, integration, operations, and cost—depends on them. If boundaries are unclear or controls are not standardized, every other architecture domain becomes harder to govern.

Governance, Security, and Compliance Across Hybrid Environments

Governance in hybrid cloud should operate as a continuous control system, not as a sequence of manual approval gates. In large enterprises, the problem is rarely the absence of policy. More often, it is inconsistent interpretation and uneven enforcement across clouds, data centers, SaaS, and edge environments.

A workable governance model separates three things:

- Policy definition

- Technical enforcement

- Evidence collection

That separation prevents a common failure mode: enterprise standards exist centrally, but each environment implements them differently and produces inconsistent audit evidence.

Common control taxonomy

A practical governance model starts with a shared enterprise control taxonomy covering areas such as:

- Identity lifecycle

- Privileged access

- Encryption and key management

- Logging and monitoring

- Vulnerability remediation

- Backup protection

- Third-party connectivity

- Data handling and retention

These controls should then be mapped to environment-specific implementation patterns. The control objective stays the same even when the technical method changes.
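A simple way to make that mapping checkable is to hold it as data and report where an environment has no implementation pattern for a control. The sketch below assumes a three-control subset and two environments; the implementation descriptions are illustrative placeholders, not recommendations.

```python
# Subset of the shared control taxonomy, used as the reference list.
CONTROLS = ["privileged-access", "encryption-and-keys", "logging-and-monitoring"]

# Environment-specific implementation patterns (illustrative values only).
IMPLEMENTATIONS = {
    "public-cloud": {
        "privileged-access": "just-in-time role elevation",
        "encryption-and-keys": "provider key service with customer-managed keys",
        "logging-and-monitoring": "native audit logs forwarded to the SIEM",
    },
    "on-prem-virtualized": {
        "privileged-access": "PAM vault with session recording",
        "encryption-and-keys": "HSM-backed disk encryption",
    },
}


def coverage_gaps(
    implementations: dict[str, dict[str, str]], controls: list[str]
) -> dict[str, list[str]]:
    """Return, per environment, the controls with no mapped implementation."""
    gaps: dict[str, list[str]] = {}
    for environment, mapped in implementations.items():
        missing = [control for control in controls if control not in mapped]
        if missing:
            gaps[environment] = missing
    return gaps


print(coverage_gaps(IMPLEMENTATIONS, CONTROLS))
# {'on-prem-virtualized': ['logging-and-monitoring']}
```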

Identity-centric security

Identity is one of the most important control-plane capabilities in hybrid cloud. Traditional perimeter assumptions are too weak when users, services, and automation operate across multiple trust zones.

Enterprises should treat the following as tier-one architectural services:

- Identity providers

- Directory synchronization

- Certificate services

- Workload identity

- Secrets distribution

Machine identities deserve particular attention. Service accounts, API credentials, and workload tokens are often governed less rigorously than workforce identities, even though they can enable major lateral movement if compromised. A mature hybrid architecture uses short-lived credentials, strong federation, scoped authorization, and centralized revocation wherever possible. A common modernization step is replacing long-lived shared service accounts in legacy middleware with federated application identities and vault-issued secrets.

Managing policy drift

Policy drift is a persistent challenge. Public cloud platforms may enforce strong native guardrails, while older environments still depend on manual procedures. Over time, that creates the misleading impression that all environments are equally controlled.

Enterprises can reduce drift through:

- Policy-as-code

- Configuration baselines

- Continuous posture assessment

- Standard asset inventory signals

- Common access and vulnerability reporting

The goal is not a single tool everywhere, but comparable control outcomes across the estate.

Compliance beyond data location

Compliance in hybrid cloud is not only about where data resides. Many failures stem from control path ambiguity. Data may remain in an approved jurisdiction while administration, logging, support access, or key custody crosses restricted boundaries.

Architecture reviews should therefore examine:

- Where data is stored

- Who can administer the service

- Where metadata and logs flow

- How incident response is handled

- Which providers participate in the control chain

This is especially important in regulated sectors with sovereignty or operational resilience requirements. A healthcare organization, for example, may keep clinical data in a national private cloud but still fail policy if support logs containing patient identifiers are routed to a global SaaS monitoring platform without proper controls.
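The review questions above can be approximated as a check over the whole control path rather than data location alone. In this minimal sketch, the `ControlPath` fields and the location labels are assumptions for illustration; a real review would cover more elements, such as incident response and provider participation.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ControlPath:
    """Where each part of a service's control path is located (hypothetical model)."""
    data_storage: str
    administration: str
    log_destination: str
    key_custody: str


def out_of_bounds(path: ControlPath, allowed: set[str]) -> list[str]:
    """List the control-path elements located outside the allowed locations."""
    return [
        element
        for element, location in asdict(path).items()
        if location not in allowed
    ]


clinical_system = ControlPath(
    data_storage="national-private-cloud",
    administration="national-private-cloud",
    log_destination="global-saas-monitoring",
    key_custody="national-private-cloud",
)

print(out_of_bounds(clinical_system, {"national-private-cloud"}))
# ['log_destination']
```

The data itself passes the location test here, yet the service still fails review because its logs leave the approved boundary.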

Federated governance

A federated model is usually the most effective. Central teams define mandatory controls, reference architectures, assurance methods, and exception processes. Platform and domain teams implement those controls within approved design envelopes. This avoids both excessive centralization and uncontrolled fragmentation.

Hybrid cloud becomes governable when controls are embedded into landing zones, platform templates, CI/CD pipelines, and onboarding processes. If compliance depends on manual review after deployment, it will not scale.

Technology lifecycle governance belongs here as well. Enterprises need a formal way to classify technologies as strategic, tolerated, contained, or slated for retirement. For example, an architecture board may designate Kafka as the strategic event backbone, tolerate an older message broker for existing workloads, and set a retirement deadline for unsupported integration middleware.
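A lifecycle register of this kind can be expressed as a small lookup that delivery pipelines or review tooling could consult. The sketch below is one possible interpretation: the rule that only strategic technologies are open to new workloads, and that unregistered technologies are rejected pending review, is an assumption rather than something the classification itself prescribes. The register entries are hypothetical.

```python
from enum import Enum


class Lifecycle(Enum):
    STRATEGIC = "strategic"
    TOLERATED = "tolerated"
    CONTAINED = "contained"
    RETIRE = "retire"


# Hypothetical register maintained by the architecture board.
REGISTER = {
    "kafka": Lifecycle.STRATEGIC,
    "legacy-broker": Lifecycle.TOLERATED,
    "unsupported-esb": Lifecycle.RETIRE,
}


def allowed_for(technology: str, is_new_workload: bool) -> bool:
    """Decide whether a workload may use a technology (assumed rules)."""
    status = REGISTER.get(technology)
    if status is None:
        return False  # unregistered technology needs a review first
    if is_new_workload:
        return status is Lifecycle.STRATEGIC
    # Existing workloads may stay on anything not marked for retirement.
    return status is not Lifecycle.RETIRE


print(allowed_for("kafka", is_new_workload=True))           # True
print(allowed_for("legacy-broker", is_new_workload=True))   # False
print(allowed_for("legacy-broker", is_new_workload=False))  # True
```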

Integration, Data Management, and Application Modernization

Hybrid cloud succeeds or fails at the seams between environments. Even with strong infrastructure and sound security, the estate becomes fragile if integrations are brittle, data movement is uncontrolled, or modernization programs ignore operational reality.

Integration, data management, and modernization should therefore be treated as one architectural domain.

Integration architecture

Enterprises typically need several interaction styles:

- Synchronous APIs for real-time service access

- Asynchronous events for decoupled process flow

- Managed file exchange for controlled bulk transfer

- Batch pipelines for scheduled movement and reconciliation

The architectural task is to standardize when each pattern should be used. Real-time customer journeys may require API mediation with strong authentication and version management. Operational decoupling is often better served by events. Finance, regulatory, and partner workflows may still depend on scheduled transfer patterns with explicit lineage and reconciliation.

Hybrid environments are more stable when these patterns are selected deliberately rather than improvised project by project. A typical example is using Kafka for cross-domain business events such as order created or shipment dispatched, while keeping customer master updates behind governed APIs and using batch reconciliation for finance close processes.
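To show what selecting patterns deliberately might look like, here is a small decision-rule sketch. The three input attributes and the order in which they are checked are simplifying assumptions; a real standard would weigh more factors, such as authentication needs, lineage, and versioning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """Simplified description of an integration need (hypothetical attributes)."""
    needs_immediate_response: bool
    scheduled: bool
    bulk_transfer: bool


def choose_pattern(interaction: Interaction) -> str:
    """Map an interaction to one of the four standard styles (assumed ordering)."""
    if interaction.needs_immediate_response:
        return "synchronous API"
    if interaction.scheduled:
        return "batch pipeline"
    if interaction.bulk_transfer:
        return "managed file exchange"
    return "asynchronous event"


customer_lookup = Interaction(needs_immediate_response=True, scheduled=False, bulk_transfer=False)
order_created = Interaction(needs_immediate_response=False, scheduled=False, bulk_transfer=False)
finance_close = Interaction(needs_immediate_response=False, scheduled=True, bulk_transfer=True)

print(choose_pattern(customer_lookup))  # synchronous API
print(choose_pattern(order_created))    # asynchronous event
print(choose_pattern(finance_close))    # batch pipeline
```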

Data management

A hybrid enterprise should not assume that one logical data model implies one physical platform. Operational, analytical, archival, and reference data often need different placement models. The key is to define ownership and movement rules clearly.

Each critical dataset should have:

- An authoritative source

- Approved consumers

- Data quality expectations

- Retention policy

- Permitted replication paths

This supports governed synchronization and reduces ambiguity in downstream use.
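The dataset attributes listed above lend themselves to a machine-readable contract that replication requests can be checked against. The following sketch assumes a hypothetical `DatasetContract` structure and example values; data quality expectations are left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetContract:
    """Hypothetical ownership and movement rules for one critical dataset."""
    name: str
    authoritative_source: str
    approved_consumers: frozenset[str]
    retention_days: int
    replication_paths: frozenset[tuple[str, str]]  # (source_env, target_env)


def replication_allowed(
    contract: DatasetContract, consumer: str, source_env: str, target_env: str
) -> bool:
    """Allow replication only for approved consumers along permitted paths."""
    return (
        consumer in contract.approved_consumers
        and (source_env, target_env) in contract.replication_paths
    )


customer_master = DatasetContract(
    name="customer-master",
    authoritative_source="on-prem-crm",
    approved_consumers=frozenset({"analytics-platform", "digital-channels"}),
    retention_days=2555,
    replication_paths=frozenset({("on-prem", "cloud-analytics")}),
)

print(replication_allowed(customer_master, "analytics-platform", "on-prem", "cloud-analytics"))  # True
print(replication_allowed(customer_master, "marketing-sandbox", "on-prem", "cloud-analytics"))   # False
```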

A particularly important distinction is between data integration and data duplication. Data integration provides controlled access through APIs, events, virtualization, or governed pipelines. Data duplication creates unmanaged copies that are difficult to secure, reconcile, or retire. That becomes especially dangerous when cloud analytics platforms are introduced alongside on-premises systems of record.

Architects should define:

- When replication is allowed

- How freshness is measured

- How schema and semantic changes are managed

- How lineage and metadata are captured

- Who owns the downstream data product

Application modernization

Modernization should be managed as portfolio engineering, not as a single migration doctrine. Different applications need different treatments:

- Rehosting for low-change workloads

- Refactoring for high-value systems with scaling or agility constraints

- Encapsulation for stable core systems that still provide essential business functions

- Replacement where a business case clearly justifies it

The key point is that even systems that cannot be fully redesigned should still be brought into the enterprise model. A legacy platform can participate effectively in hybrid cloud if it is exposed through governed APIs, surrounded by integration services, instrumented for observability, and isolated from uncontrolled point-to-point dependency growth.

A useful modernization approach is to organize around business capabilities rather than technical layers alone. Extracting or augmenting bounded business functions often delivers value faster and with less risk than decomposing entire systems by infrastructure tier. A retailer may, for example, leave inventory settlement in an ERP platform while extracting pricing, promotions, and digital catalog services into cloud-native components consumed by web and mobile channels.

The most effective hybrid architectures create a disciplined interaction model: APIs for controlled access, events for decoupled flow, governed pipelines for data movement, and modernization roadmaps aligned to business capability evolution.

Operational Excellence: Observability, Automation, and Cost Control

Operational excellence is where hybrid cloud becomes either sustainable or unmanageable. Connecting platforms is relatively straightforward. Operating them consistently is not.

The hybrid model depends on shared operational capabilities. Without them, complexity accumulates faster than value.

Observability by design

Telemetry should be treated as a mandatory architectural output, not an optional enhancement. Every workload and platform service should emit logs, metrics, traces, topology data, and audit events in a way that supports both local troubleshooting and enterprise-wide analysis.

A minimum telemetry standard should define:

- Service identifiers

- Timestamp conventions

- Environment tags

- Ownership metadata

- Severity models

- Retention rules

Without shared semantics, central dashboards may exist, but they will not support meaningful diagnosis across environments.
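A minimum telemetry standard becomes enforceable once events can be validated against it at onboarding or ingestion time. The sketch below is an assumption about what such a check might cover: the field names, the severity levels, and the requirement for ISO 8601 timestamps with an explicit offset are illustrative, and retention rules are omitted because they apply to storage rather than to individual events.

```python
from datetime import datetime

# Illustrative field names and severity model, not a published standard.
REQUIRED_FIELDS = {"service_id", "timestamp", "environment", "owner", "severity"}
SEVERITIES = {"debug", "info", "warning", "error", "critical"}


def validate_event(event: dict) -> list[str]:
    """Return a list of problems; an empty list means the event conforms."""
    problems = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(event))]
    if "severity" in event and event["severity"] not in SEVERITIES:
        problems.append(f"unknown severity: {event['severity']}")
    if "timestamp" in event:
        try:
            parsed = datetime.fromisoformat(event["timestamp"])
            if parsed.tzinfo is None:
                problems.append("timestamp has no timezone offset")
        except (TypeError, ValueError):
            problems.append("timestamp is not ISO 8601")
    return problems


good_event = {
    "service_id": "payments-api",
    "timestamp": "2024-05-01T10:00:00+00:00",
    "environment": "on-prem-prod",
    "owner": "payments-team",
    "severity": "error",
}
bad_event = {
    "service_id": "payments-api",
    "timestamp": "2024-05-01T10:00:00",
    "severity": "fatal",
}

print(validate_event(good_event))  # []
print(validate_event(bad_event))
# ['missing field: environment', 'missing field: owner',
#  'unknown severity: fatal', 'timestamp has no timezone offset']
```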

Large enterprises often cannot use a single tool everywhere, so the goal should be observability federation, not total consolidation. Different tools can remain in place as long as they feed a common operational framework that supports service health views, dependency mapping, alert correlation, and incident context.

Automation at multiple layers

Manual operations create delay, variability, and avoidable risk. Enterprises should automate:

- Infrastructure provisioning

- Configuration enforcement

- Patch orchestration

- Certificate renewal

- Backup validation

- Scaling actions

- Incident response workflows

This should extend beyond cloud-native environments wherever feasible. The objective is not identical tooling, but consistent outcomes.

Runbook automation is especially valuable in hybrid estates because many incidents involve repeatable cross-environment actions such as traffic rerouting, credential rotation, queue draining, service restarts, or failover checks. Encoding these into tested workflows reduces reliance on tribal knowledge and makes resilience assumptions executable.

Cost control as an architectural discipline

Hybrid cloud complicates cost visibility because spending spans capital infrastructure, cloud consumption, licensing, and shared services. Cost management therefore needs to be designed into the architecture, not added after the fact.

Enterprises need:

- Tagging standards

- Service catalogs

- Usage attribution models

- Shared cost allocation rules

- Visibility from technical resources to business services and owners

A strong model combines FinOps with architecture governance. Workload teams should be able to see the cost impact of design choices such as data egress, resilience patterns, storage tiers, and managed service usage. Central teams should monitor portfolio-level inefficiencies such as redundant platforms, underused capacity, and duplicated tooling.
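As a minimal sketch of usage attribution with shared cost allocation, the function below sums tagged costs per business service and spreads a shared cost in proportion to direct spend. The `business_service` tag name, the proportional allocation rule, and the figures are assumptions for illustration; many enterprises use other allocation keys.

```python
from collections import defaultdict


def attribute_costs(line_items: list[dict], shared_cost: float) -> dict[str, float]:
    """Sum tagged costs per business service and spread shared cost pro rata."""
    direct: dict[str, float] = defaultdict(float)
    for item in line_items:
        service = item.get("tags", {}).get("business_service", "untagged")
        direct[service] += item["cost"]
    total = sum(direct.values())
    if total == 0:
        return dict(direct)  # nothing to allocate against
    return {
        service: round(cost + shared_cost * cost / total, 2)
        for service, cost in direct.items()
    }


items = [
    {"resource": "cloud-db", "cost": 600.0, "tags": {"business_service": "payments"}},
    {"resource": "on-prem-vm", "cost": 200.0, "tags": {"business_service": "payments"}},
    {"resource": "cdn", "cost": 200.0, "tags": {"business_service": "catalog"}},
]

print(attribute_costs(items, shared_cost=100.0))
# {'payments': 880.0, 'catalog': 220.0}
```

Resources without the tag fall into an explicit "untagged" bucket, which makes gaps in tagging standards visible instead of silently dropping the spend.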

Operational excellence in hybrid cloud ultimately means making systems observable, operations automatable, and costs understandable across platform boundaries.

Implementation Roadmap, Organizational Change, and Common Pitfalls

A hybrid cloud architecture becomes credible only when it can be implemented in stages. For large enterprises, this should be treated as a sequenced transformation of capabilities and operating models, not as a single migration program.

1. Baseline assessment and segmentation

The first step is not simply infrastructure inventory. Enterprises should classify applications and platforms by:

- Business criticality

- Regulatory sensitivity

- Integration dependency

- Technical constraints

- Modernization readiness

This creates the basis for workload placement and adoption patterns. Architects should also identify shared bottlenecks such as legacy identity stores, overlapping IP ranges, undocumented interfaces, or aging middleware.
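The classification dimensions above can be turned into a first-pass segmentation rule, sketched below. The scoring scales, the thresholds, and the segment names are hypothetical and would need to be replaced by criteria agreed within the enterprise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Application:
    """Baseline attributes for one application (assumed scales)."""
    name: str
    business_criticality: int      # 1 (low) to 3 (high)
    regulatory_sensitivity: int    # 1 (low) to 3 (high)
    integration_dependencies: int  # number of known interfaces
    has_hard_constraints: bool     # e.g. licensing or latency ties to the platform
    modernization_ready: bool


def segment(app: Application) -> str:
    """Assign a first-pass adoption segment (illustrative rules and thresholds)."""
    if app.has_hard_constraints:
        return "retain and encapsulate"
    if app.regulatory_sensitivity == 3:
        return "regulated data processing"
    if app.modernization_ready and app.integration_dependencies <= 5:
        return "early cloud adopter"
    return "assess further"


payments = Application("payments-engine", 3, 3, 12, True, False)
notifications = Application("customer-notifications", 2, 1, 3, False, True)
reporting = Application("historical-reporting", 1, 2, 9, False, False)

print(segment(payments))       # retain and encapsulate
print(segment(notifications))  # early cloud adopter
print(segment(reporting))      # assess further
```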

2. Establish enterprise landing capabilities

Before broad adoption begins, core capabilities should be in place for:

- Environment provisioning

- Connectivity

- Policy enforcement

- Secrets handling

- Observability onboarding

- Deployment pipelines

These capabilities do not need to be perfect, but they must prevent every project from inventing its own model. Reference architectures should be published for a small number of approved patterns, such as digital services, hybrid integration workloads, regulated data processing, and legacy system extension.

3. Domain-led adoption

Adoption usually works better when it follows business domains or product boundaries rather than infrastructure layers alone. This allows the enterprise to test governance, support models, and integration standards in real delivery contexts. Early adopters should be significant enough to validate the model, but not so entangled that they absorb all transformation capacity.

Success metrics should include:

- Deployment speed

- Control compliance

- Incident performance

- Cost predictability

- Reuse of approved patterns

4. Evolve the organization

Hybrid cloud exposes the limits of siloed structures. Enterprises typically need stronger platform engineering capabilities, clearer ownership of shared services, and tighter collaboration between central architecture teams and domain delivery teams.

Architecture itself must evolve as well. Architects should focus less on static standards documents and more on:

- Reusable patterns

- Decision records

- Exception pathways

- Fitness criteria

- Continuous architecture review based on operational evidence

Common pitfalls

Several pitfalls appear repeatedly.

Premature standardization

Trying to define the entire target state before real adoption often produces frameworks that are too abstract or too rigid.

Tool-first transformation

Buying cloud management, security, or observability tools before defining the operating model usually adds complexity instead of reducing it.

Uncontrolled exception growth

If every difficult workload becomes a special case, the enterprise simply recreates fragmentation under a new name.

Underestimating people transition

Teams need new skills in automation, policy engineering, cost management, and distributed operations. They also need clear accountability for shared services, connectivity, incidents, and controls.

The strongest implementations treat hybrid cloud as a long-term operating model, built iteratively through repeatable capability delivery.

Conclusion

Hybrid cloud architecture is not a temporary bridge between old and new platforms. For large enterprises, it is the discipline of operating in the presence of permanent technology diversity without losing coherence.

The central idea throughout this article is simple: enterprises should accept environment diversity while enforcing architectural coherence. That means standardizing control planes, shared capabilities, and operating practices rather than forcing identical infrastructure or full workload portability. Once that distinction is understood, decisions about workload placement, governance, integration, security, observability, and cost become clearer.

The strongest hybrid strategies turn complexity into structured choice. They define where variation is acceptable, where consistency is mandatory, and how exceptions are governed. They build strong shared capabilities—identity, connectivity, policy enforcement, automation, and observability—so local teams can move quickly within enterprise guardrails. They modernize around business capabilities, not just platforms, and they treat governance as an architectural property rather than an after-the-fact review step.

Success should be measured through outcomes: how quickly teams can onboard to approved patterns, how consistently controls are enforced across environments, how recoverable critical services are, and how transparent cost and ownership have become. Those indicators show whether the enterprise has built a true hybrid operating model or merely connected a set of separate platforms.

In that sense, hybrid cloud is best understood as an enterprise architecture approach. It gives large organizations the ability to adapt hosting, sourcing, and modernization choices over time without repeatedly redesigning the whole estate. Done well, it provides optionality with control: the ability to evolve while preserving resilience, governance, and strategic flexibility.

Frequently Asked Questions

How is ArchiMate used in cloud architecture?

ArchiMate models cloud architecture using the Technology layer — cloud platforms appear as Technology Services, virtual machines and containers as Technology Nodes, and networks as Communication Networks. The Application layer shows how workloads depend on cloud infrastructure, enabling migration impact analysis.

What is the difference between hybrid cloud and multi-cloud architecture?

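Hybrid cloud combines public cloud services with private cloud, traditional on-premises infrastructure, SaaS, and edge environments under one governed operating model. Multi-cloud means using services from multiple public cloud providers (AWS, Azure, GCP), often to reduce vendor lock-in and optimise workload placement. An enterprise can be hybrid without being multi-cloud, and multi-cloud without having a coherent hybrid architecture.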

How do you model microservices in enterprise architecture?

Microservices are modeled in ArchiMate as Application Components in the Application layer, each exposing Application Services through interfaces. Dependencies between services are shown as Serving relationships, and deployment to containers or cloud platforms is modeled through Assignment to Technology Nodes.