
Sparx EA Best Practices: Enterprise Architecture Standards & Modeling Tips



Introduction

Sparx Enterprise Architect (EA) delivers the most value when it is managed as an architectural knowledge base rather than used only for drawing diagrams. In many organizations, it becomes the shared repository for business capabilities, processes, applications, interfaces, data, technology standards, and solution designs. Its real strength is not the number of diagrams it can produce, but the relationships it can preserve across those domains. When those relationships are modeled consistently, EA becomes genuinely useful for impact analysis, governance, roadmap planning, and end-to-end traceability from strategy through delivery.

That value does not emerge automatically. Repositories often become hard to trust because teams model too much, apply inconsistent conventions, or recreate the same content in multiple places. The pattern is common: individual diagrams are helpful in isolation, but the repository as a whole becomes difficult to navigate, reports lose credibility, and stakeholders return to spreadsheets and slide decks. Good practice in EA starts with a simpler principle: model with purpose. Before adding content, decide who the repository serves, which decisions it needs to support, and how much detail is actually needed.

That principle shapes every design choice that follows. A capability map built for investment planning should not be modeled at the same level of detail as a solution design prepared for delivery. A process view for executives should not look like a technical interaction model for engineers. Nor does every artifact require the same level of control. The objects that usually matter most are the ones that inform enterprise decisions: capabilities, applications, interfaces, data entities, technology products, standards, and the relationships between them. Those are the foundations of a repository people can rely on.

To keep EA sustainable at scale, organizations need a clear operating model. That means a lightweight metamodel, naming conventions, package structures, ownership responsibilities, lifecycle states, and review practices. These are not administrative extras. They are what allow multiple teams to contribute over time without turning the repository into a patchwork of disconnected modeling habits. The same applies to notation. ArchiMate, BPMN, UML, and SysML each have a role, but they work best when used for distinct purposes and connected through shared concepts rather than duplicated representations.

This article looks at practical ways to use Sparx EA as a durable system of architectural record. It begins with standards and governance, then moves through repository structure, traceability, notation, collaboration, and automation. One idea runs through every section: model only what matters, govern the information that drives decisions, and maintain one trusted repository that can be reused across strategy, design, and delivery.

1. Establish Modeling Standards and Governance

The first requirement for a sustainable EA repository is consistency. If different architects model the same concept in different ways, the repository may still produce attractive diagrams, but it will not support dependable reporting, traceability, or impact analysis. Standards and governance provide the discipline needed to keep the repository usable across teams and over time.

A practical starting point is a lightweight metamodel. It does not need to be elaborate, but it should define the core element types the organization cares about, what each one means, and which relationships are allowed between them. Typical enterprise concepts include business capabilities, processes, applications, services, data entities, interfaces, technology components, standards, and initiatives. For each type, define mandatory attributes and clear usage rules. For example:

- Application: owner, lifecycle status, criticality, deployment type, hosting platform

- Interface: source, target, integration pattern, data classification, support owner

- Technology product: vendor, version, lifecycle status, standard status

- Business capability: description, owning function, maturity or criticality

This metamodel becomes the foundation for everything else. Repository structure, traceability, reporting, and notation choices all depend on shared definitions being in place first.
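To make the idea concrete, a lightweight metamodel can be written down as plain data and checked mechanically. The following is a minimal sketch in Python, not an EA feature: the attribute keys mirror the examples above, while the relationship names (`supports`, `exposes`, `runs_on`) and the `Element` class are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field

# Mandatory attributes per element type, taken from the examples in the text.
# The source lists "maturity or criticality"; this sketch records it as one field.
MANDATORY_ATTRIBUTES = {
    "Application": ["owner", "lifecycle_status", "criticality",
                    "deployment_type", "hosting_platform"],
    "Interface": ["source", "target", "integration_pattern",
                  "data_classification", "support_owner"],
    "TechnologyProduct": ["vendor", "version", "lifecycle_status", "standard_status"],
    "BusinessCapability": ["description", "owning_function", "maturity_or_criticality"],
}

# Hypothetical allowed relationships: (source type, relationship, target type).
ALLOWED_RELATIONSHIPS = {
    ("Application", "supports", "BusinessCapability"),
    ("Application", "exposes", "Interface"),
    ("Application", "runs_on", "TechnologyProduct"),
}


@dataclass
class Element:
    name: str
    element_type: str
    attributes: dict = field(default_factory=dict)


def missing_attributes(element: Element) -> list[str]:
    """Return the mandatory attributes that are absent or blank."""
    missing = []
    for attr in MANDATORY_ATTRIBUTES.get(element.element_type, []):
        value = element.attributes.get(attr)
        if value is None or not str(value).strip():
            missing.append(attr)
    return missing


def relationship_allowed(source: Element, relationship: str, target: Element) -> bool:
    """Check a proposed connector against the allowed relationship triples."""
    return (source.element_type, relationship, target.element_type) in ALLOWED_RELATIONSHIPS


crm = Element("CRM Platform", "Application",
              {"owner": "Sales IT", "lifecycle_status": "approved"})
onboarding = Element("Customer Onboarding", "BusinessCapability")

print(missing_attributes(crm))
print(relationship_allowed(crm, "supports", onboarding))
print(relationship_allowed(onboarding, "runs_on", crm))
```

Keeping the rules as data rather than prose makes it easier to reuse the same definitions later for review checks and reporting.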

Naming standards matter just as much. In large repositories, weak naming quickly creates confusion and increases duplication. Element names, package names, and diagram names should follow clear conventions. The exact format can vary, but it should help users answer a few basic questions immediately: what is this object, where does it belong, and is it authoritative? Consistent naming also improves searchability and makes automation and reporting much easier later.
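A naming convention is easiest to enforce when it can be expressed as a pattern. The sketch below assumes a purely hypothetical convention of `<TYPE>-<DOMAIN>-<Name>` (for example `APP-FIN-General Ledger`); the prefixes and the format are illustrative, and any real convention would need its own pattern.

```python
import re

# Hypothetical convention: <TYPE>-<DOMAIN>-<Name>, e.g. "APP-FIN-General Ledger".
# TYPE is one of a few assumed prefixes; DOMAIN is 2 to 5 upper-case letters.
NAME_PATTERN = re.compile(r"(APP|INT|CAP|TEC)-[A-Z]{2,5}-[A-Z][\w ]*\w")


def check_names(names):
    """Split names into those that follow the convention and those that do not."""
    conforming, violations = [], []
    for name in names:
        if NAME_PATTERN.fullmatch(name):
            conforming.append(name)
        else:
            violations.append(name)
    return conforming, violations


conforming, violations = check_names([
    "APP-FIN-General Ledger",
    "crm system",
    "INT-CRM-Consent Status API",
    "APP-FIN-",
])
print("conforming:", conforming)
print("violations:", violations)
```

A check like this can run over an exported element list before content is promoted, so that violations are caught before they spread.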

Package standards belong under governance as well, not just repository organization. Users should be able to predict where to find enterprise reference content, baseline architecture, and working solution material. If the package structure is inconsistent, contributors often recreate elements locally instead of reusing shared ones. That breaks traceability and weakens impact analysis.

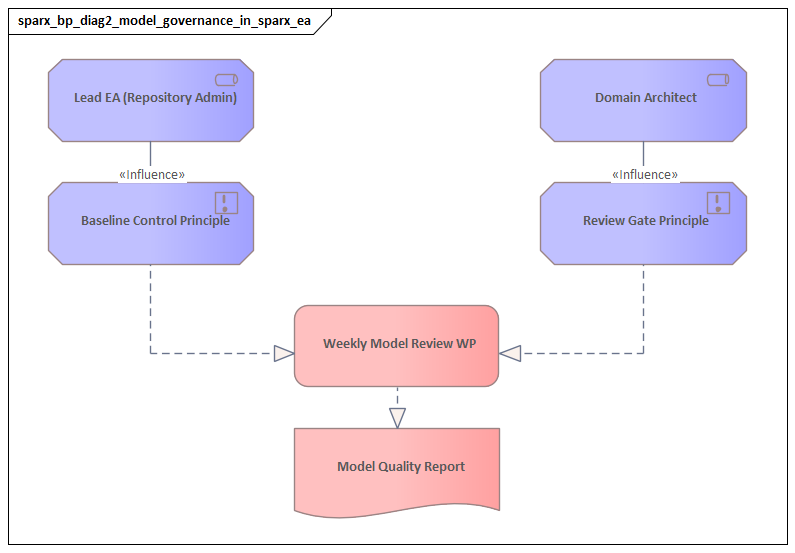

Governance only works when ownership is explicit. Every major domain, package, or catalog should have a named owner responsible for quality, review cadence, and alignment to standards. In most enterprises, a federated model works best: a central architecture function defines the metamodel, conventions, and quality rules, while domain architects maintain their own areas within those boundaries. That usually scales better than either tight central control or unrestricted local freedom.

Review processes should be part of normal repository management. New or changed content should pass through basic checks before it is treated as authoritative. Typical checks include:

- mandatory attributes completed

- duplicate detection

- correct relationship types

- lifecycle status assigned

- alignment to naming and package conventions

These controls do not need to be heavy, but they do need to be applied consistently. The goal is not administrative perfection. It is to ensure the repository can support decisions rather than simply store diagrams.

Lifecycle states are especially important. A proposed requirement, a draft solution component, and a production application should not all appear equally authoritative. Define clear states such as draft, in review, approved, baseline, implemented, and retired. These states become essential later when distinguishing current, planned, and obsolete content in traceability, reporting, and roadmap views.
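The states named above can be treated as a small state machine. The sketch below is illustrative only: the state names come from the text, but the allowed transitions and the choice of which states count as authoritative are assumptions that each organization would define for itself.

```python
from enum import Enum


class Lifecycle(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in review"
    APPROVED = "approved"
    BASELINE = "baseline"
    IMPLEMENTED = "implemented"
    RETIRED = "retired"


# Assumed transition rules; adjust to the organization's governance process.
ALLOWED_TRANSITIONS = {
    Lifecycle.DRAFT: {Lifecycle.IN_REVIEW, Lifecycle.RETIRED},
    Lifecycle.IN_REVIEW: {Lifecycle.DRAFT, Lifecycle.APPROVED},
    Lifecycle.APPROVED: {Lifecycle.BASELINE, Lifecycle.RETIRED},
    Lifecycle.BASELINE: {Lifecycle.IMPLEMENTED, Lifecycle.RETIRED},
    Lifecycle.IMPLEMENTED: {Lifecycle.RETIRED},
    Lifecycle.RETIRED: set(),
}

# Assumed set of states that official reports may treat as authoritative.
AUTHORITATIVE = {Lifecycle.APPROVED, Lifecycle.BASELINE, Lifecycle.IMPLEMENTED}


def can_transition(current: Lifecycle, target: Lifecycle) -> bool:
    """True if moving from current to target is permitted by the rules above."""
    return target in ALLOWED_TRANSITIONS[current]


def is_authoritative(state: Lifecycle) -> bool:
    """True if content in this state should appear in official reporting."""
    return state in AUTHORITATIVE


print(can_transition(Lifecycle.DRAFT, Lifecycle.IN_REVIEW))
print(can_transition(Lifecycle.DRAFT, Lifecycle.IMPLEMENTED))
print(is_authoritative(Lifecycle.DRAFT))
```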

The most effective governance remains focused on high-value information. Not every diagram needs the same level of control. Prioritize the objects and relationships that support decisions: capability-to-application mappings, application-to-interface dependencies, technology lifecycle, standards compliance, and ownership. A useful example is a platform standard decision. If an architecture board approves Azure API Management as the preferred external integration gateway, that decision should be reflected against the relevant technology standard, integration pattern, and affected solution designs. It should not live only in meeting minutes.

In short, standards and governance create the shared language of the repository. Without them, EA becomes a collection of diagrams. With them, it becomes a managed body of architectural knowledge.

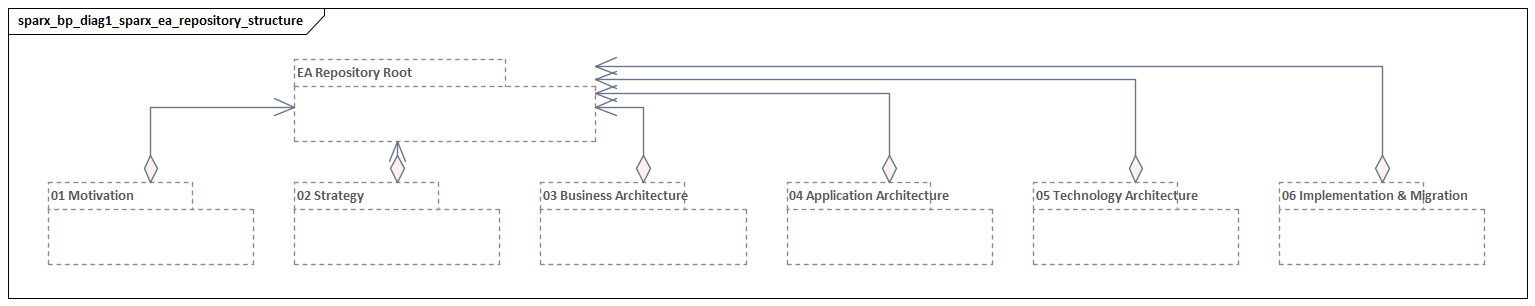

2. Structure Repositories and Packages for Scale

Once standards are in place, repository structure determines whether those standards can be applied consistently across the enterprise. A repository that works well for a small architecture team can become difficult to manage once multiple domains, portfolios, and delivery programs begin contributing content. Structure is therefore a design choice, not an administrative afterthought.

A useful pattern is to divide the repository into three broad zones:

- Reference content: principles, standards, patterns, taxonomies, canonical concepts

- Baseline enterprise knowledge: capabilities, applications, data domains, interfaces, technology products, current and target states

- Working or initiative areas: solution designs, transition architectures, project-specific analysis, draft models

This separation helps distinguish long-lived enterprise assets from temporary delivery artifacts. Without it, repositories quickly blur the line between authoritative architecture and in-progress design work.

Package design should support both ownership and reuse. In practice, the most effective hierarchy usually aligns first to architecture domains, then to business or portfolio boundaries, and then to subject areas. For example:

- Business Architecture
  - Capability Model
  - Value Streams
  - Operating Model

- Application Architecture
  - Customer Portfolio
  - Finance Portfolio
  - Shared Platforms

- Data Architecture
  - Master Data
  - Reporting Domains

- Technology Architecture
  - Infrastructure Platforms
  - End User Computing
  - Security Technologies

The exact layout matters less than consistency and clarity. Users should always know where the authoritative version of an element lives.

That point is central: reuse matters more than local convenience. If the same application, process, or interface is recreated in multiple packages, traceability becomes unreliable. Reports produce conflicting counts, dependencies are split across duplicates, and impact analysis starts to mislead. One authoritative element should be reused across diagrams and viewpoints wherever possible.

A simple micro-example makes the risk clear. Suppose a payments team creates its own “Customer Master API” element inside a project package instead of reusing the enterprise application service already held in the baseline repository. Six months later, the CRM team traces a data privacy change to the baseline object, while the payments team updates only its local copy. The result is predictable: impact reports understate the number of affected consumers, and governance decisions are made on incomplete information. The issue is not the diagram; it is the duplicated object.
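Duplicates of this kind are often detectable with a simple comparison of normalized names. The following sketch assumes elements have been exported as `(name, type, package path)` tuples; the package paths and the normalization rule are hypothetical, and real repositories may need fuzzier matching.

```python
from collections import defaultdict


def normalize(name: str) -> str:
    """Lower-case and drop spaces and punctuation so near-identical names compare equal."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def find_duplicates(elements):
    """Group elements by (normalized name, type) and keep groups with more than one entry.

    elements: iterable of (name, element_type, package_path) tuples.
    Returns a dict mapping (normalized name, type) to the packages holding a copy.
    """
    groups = defaultdict(list)
    for name, element_type, package in elements:
        groups[(normalize(name), element_type)].append(package)
    return {key: packages for key, packages in groups.items() if len(packages) > 1}


# Illustrative data based on the example above; package paths are made up.
elements = [
    ("Customer Master API", "ApplicationService", "Baseline/Application Architecture/Shared Platforms"),
    ("customer-master API", "ApplicationService", "Initiatives/Payments Project"),
    ("Document Archive", "Application", "Baseline/Application Architecture/Shared Platforms"),
]

duplicates = find_duplicates(elements)
for (name, element_type), packages in duplicates.items():
    print(element_type, name, "appears in:", packages)
```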

Repository structure should also reflect maturity. Not every workshop sketch or early option model belongs in the shared enterprise knowledge base. Draft areas let teams work iteratively without weakening the integrity of approved content. Once material has been reviewed and linked to core enterprise objects, it can be promoted into the baseline repository. This promotion model works best when paired with the lifecycle states described earlier.

Version control is part of the same operating discipline. In repositories with many contributors, package control, locking, and controlled check-in/check-out help prevent conflicting edits and accidental overwrites. More importantly, versioning supports controlled movement between draft, approved, and baseline states. It provides auditability and helps teams understand how the architecture changed over time.

Scalable structure should also take downstream use into account. Packages should not be arranged purely for modeling convenience; they should also support reporting, publishing, and analysis. Ask practical questions such as:

- What needs to be published regularly?

- What needs to be queried consistently?

- Which content should be compared across time?

- Which domains need delegated ownership?

These questions matter because a repository that is difficult to report from will eventually be bypassed, no matter how carefully the diagrams have been drawn.

One final rule is to separate enterprise objects from viewpoints. Applications, capabilities, interfaces, and technologies are repository assets. Diagrams are viewpoints onto those assets. When teams treat diagrams as the primary artifact and create new elements inside each one, the repository fragments. When repository objects remain primary and diagrams are treated as reusable views, the model becomes much more durable.

With standards and structure in place, the next step is to use those shared objects to create meaningful traceability and impact analysis.

3. Use Requirements and Traceability to Support Decisions

One of EA’s strongest capabilities is traceability across strategy, architecture, and delivery. Used well, it helps teams answer practical questions with confidence: what is affected by a change, what depends on a technology, which requirements have been implemented, and where risk sits across the estate. Used poorly, it becomes an administrative burden made up of excessive links that add little analytical value.

The key is selective, decision-focused traceability. The repository should concentrate on the objects and relationships that matter most. Traceability should therefore connect requirements and change drivers to stable enterprise objects, not just to isolated project artifacts.

Start by distinguishing requirement types. Business requirements, regulatory obligations, stakeholder needs, architectural constraints, and non-functional requirements should not all be treated as one flat list. They serve different purposes and follow different validation paths. For example:

- A regulatory requirement may trace to policies, controls, processes, applications, and data stores.

- A performance requirement may trace to application services, infrastructure, and test evidence.

- A business requirement may trace to capabilities, processes, applications, and initiatives.

This structure makes reporting more useful and allows governance reviews to focus on the right evidence.

Traceability works best when it is anchored in the core concepts introduced earlier: capabilities, applications, interfaces, data entities, and technology services. These are stable enough to support enterprise-level analysis across multiple initiatives. A requirement linked only to a project document says little about wider impact. The same requirement linked to capabilities, processes, applications, and interfaces reveals whether the change is local or enterprise-wide.

A practical traceability chain often looks like this:

Driver or requirement → business capability → process → application → interface/data/technology

Not every change needs every link, but this pattern is usually enough to support governance and impact analysis without creating unnecessary maintenance overhead.
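The chain above is effectively a directed graph, so following it is a standard graph walk. As a rough sketch, assuming trace links have been exported into a dictionary (the element names and prefixes here are invented for illustration), a breadth-first traversal lists everything reachable from a requirement:

```python
from collections import deque

# Hypothetical trace links: each key traces to the listed downstream objects.
TRACES = {
    "REQ: Digital account opening": ["CAP: Customer Onboarding"],
    "CAP: Customer Onboarding": ["PROC: Identity Verification"],
    "PROC: Identity Verification": ["APP: Onboarding Portal"],
    "APP: Onboarding Portal": ["INT: Identity Check API", "DATA: Customer Record"],
}


def downstream(start, traces):
    """Breadth-first walk returning every object reachable from start, in visit order."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in traces.get(node, []):
            if nxt not in seen:  # guard against cycles and repeated links
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


for item in downstream("REQ: Digital account opening", TRACES):
    print(item)
```

Because the walk only follows links that exist, a short result for an important requirement is itself a useful signal that traceability is incomplete.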

The most common mistake is trying to trace everything to everything else. That creates effort without producing better insight. Instead, prioritize traceability where it supports:

- architecture governance

- delivery risk management

- regulatory accountability

- investment decisions

- technology lifecycle planning

Relationship quality matters just as much as relationship quantity. Connectors should be semantically clear. A conceptual association, a runtime dependency, and an implementation usage relationship are not interchangeable. If teams use connectors inconsistently, impact analysis becomes unreliable even when the diagrams appear complete.

Lifecycle states make traceability more useful. A proposed requirement should not carry the same weight as an approved one. A planned application should not be treated as if it were already in production. When states are modeled consistently, the repository can show not only what is connected, but also what is current, planned, transitional, or retired. This matters especially in transformation programs where current-state and target-state architectures exist side by side.

Consider a realistic example from regulatory change. A bank receives a new requirement to retain customer consent evidence for seven years. If that requirement is linked only to the compliance project, the repository says very little. If it is traced to the customer onboarding capability, the consent capture process, the CRM platform, the document archive, the API that passes consent status to downstream channels, and the underlying storage standard, architects can immediately see where controls, design changes, and remediation work are required.

Impact analysis is the practical outcome of all this discipline. Before approving a technology retirement, process redesign, or regulatory change, architects should be able to identify affected capabilities, applications, interfaces, and business areas directly from the repository. An IAM modernization initiative, for example, might trace a zero-trust access requirement to identity services, HR joiner-mover-leaver processes, Active Directory, SSO platforms, and the applications that still rely on legacy LDAP integration. That kind of analysis is only possible when the core objects are authoritative, the package structure supports reuse, and relationships are governed consistently.
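Impact analysis for a retirement runs in the opposite direction: start from the object being changed and find everything that depends on it, directly or indirectly. The sketch below assumes dependencies have been exported as `(consumer, provider)` pairs; the element names are loosely inspired by the IAM example and are hypothetical.

```python
from collections import defaultdict

# Hypothetical "depends on" links: (consumer, provider).
DEPENDS_ON = [
    ("APP: Legacy Payroll", "TEC: LDAP Directory"),
    ("APP: Intranet", "TEC: SSO Platform"),
    ("TEC: SSO Platform", "TEC: Active Directory"),
    ("PROC: Joiner-Mover-Leaver", "APP: Legacy Payroll"),
    ("CAP: Workforce Management", "PROC: Joiner-Mover-Leaver"),
]


def impacted_by(target, links):
    """Return every object that directly or transitively depends on target."""
    consumers = defaultdict(list)
    for consumer, provider in links:
        consumers[provider].append(consumer)

    affected = set()
    stack = [target]
    while stack:
        node = stack.pop()
        for consumer in consumers[node]:
            if consumer not in affected:
                affected.add(consumer)
                stack.append(consumer)
    return sorted(affected)


print(impacted_by("TEC: LDAP Directory", DEPENDS_ON))
print(impacted_by("TEC: Active Directory", DEPENDS_ON))
```

The result is only as reliable as the links behind it, which is why duplicated elements and inconsistent connector types undermine this kind of analysis.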

Traceability, then, is not just a compliance exercise. It is what turns the repository from documentation into decision support.

4. Use ArchiMate, BPMN, UML, and SysML Deliberately

Because Sparx EA supports multiple notations, teams often create overlap without meaning to. The issue is rarely lack of capability; it is usually lack of discipline. If the same application, process, or service is modeled separately in ArchiMate, BPMN, and UML with no clear relationship between those views, the repository becomes harder to maintain and harder to trust.

The principle remains the same: one authoritative concept, multiple viewpoints where needed.

ArchiMate for enterprise-level structure

ArchiMate is usually the best choice for cross-domain enterprise architecture views. It is strong at showing relationships between business capabilities, value streams, applications, data, technology services, and transformation initiatives. It works well for:

- current-state and target-state landscapes

- capability-to-application mappings

- dependency maps

- transition architectures

- roadmap communication

Its strength is abstraction across layers. It is less effective when used as a substitute for detailed technical design.

BPMN for operational behavior

BPMN is most useful when process flow, roles, events, handoffs, and exceptions need operational precision. It is well suited to:

- business workflows

- service interactions

- automation opportunities

- exception handling

- operational process redesign

BPMN models should link back to capabilities, applications, and information objects. A process model that is not connected to the rest of the repository quickly becomes isolated documentation.

UML for solution and software design

UML is most useful when more design precision is needed than ArchiMate or BPMN can provide. Typical uses include:

- use cases

- component models

- sequence diagrams

- class models

- deployment views

UML should be used selectively. Not every solution needs the full UML toolkit, and technical diagrams that are only partially maintained become cluttered quickly. Use it where engineering teams genuinely need the detail.

SysML for engineered systems

SysML is relevant where software, hardware, physical assets, and operational systems need to be modeled together. In many business-and-IT-focused enterprises, its use will be limited. In sectors such as manufacturing, transport, utilities, aerospace, or defense, it can be essential for end-to-end systems engineering.

Integrating the notations

The practical challenge is not choosing a single notation, but connecting several of them coherently. A common pattern is:

- ArchiMate for enterprise context and cross-domain relationships

- BPMN for the processes that realize capabilities

- UML for the solution components and interactions that implement those processes

- SysML where physical or engineered system concerns extend beyond software

This layered approach preserves traceability across abstraction levels without introducing unnecessary duplication.

A useful rule is to maintain one authoritative representation for each concept in the repository, then expose it through different notations as needed. For example, an application should remain the same repository object whether it appears in an ArchiMate landscape, a BPMN-supported process view, or a UML deployment model. If teams create separate versions simply to suit diagram preferences, they lose the benefits of the shared repository.

A practical micro-example is event-driven architecture. A Kafka-based order event platform might appear in ArchiMate as an application service supported by messaging technology, in BPMN as the event that triggers downstream fulfilment and invoicing steps, and in UML as producer-consumer interactions with topic contracts. Those are not three different systems. They are three views of the same architecture.

Notation choice should also follow audience and purpose. Executives rarely need UML sequence diagrams. Delivery teams may not need high-level capability maps except as context. Governance boards usually need concise views that connect enterprise concerns to implementation consequences. Clarity matters more than demonstrating modeling range.

With notation discipline in place, the repository can support broader participation. The next section looks at how to make EA useful not just for modelers, but also for reviewers, delivery teams, and decision-makers.

5. Enable Collaboration, Reviews, and Stakeholder Communication

A repository only becomes valuable at enterprise scale when it supports collaboration across roles. Architects may curate the model, but many others need to review it, challenge it, consume it, or rely on the information within it. If EA remains a specialist tool used only by a small modeling group, it may be technically sound yet organizationally weak.

Different stakeholders need different kinds of interaction with the same repository:

- Enterprise and solution architects need direct modeling access.

- Business stakeholders need concise views, heatmaps, and decision summaries.

- Delivery teams need standards, dependencies, and solution-relevant views.

- Governance boards need evidence of alignment, risk, and traceability.

- Operational stakeholders need ownership, lifecycle, and dependency information.

This is why the earlier sections matter. Standards make content consistent, structure makes it easy to find, traceability makes it useful, and notation discipline makes it understandable.

Review practices should align to decision points rather than informal circulation. Typical checkpoints include:

- concept review

- solution review

- design assurance

- implementation readiness

- post-delivery validation

At each point, reviewers should understand what they are being asked to assess and what outcomes are possible. Review comments, issues, and decisions should be linked back to the relevant model content wherever possible. That keeps the repository connected to governance history instead of leaving decisions scattered across email threads and slide decks.

It is also important to distinguish contribution from endorsement. Many people may provide input, but not all input should automatically become authoritative model content. Broad participation is useful in workshops and reviews; approval responsibility should remain with designated owners or governance bodies. That balance preserves openness without weakening control.

Workshops are often the most effective way to improve repository quality, especially for capability mapping, application rationalization, interface reviews, and target-state design. But workshops only add value when the follow-through is disciplined. Strong teams prepare viewpoints in advance, keep the scope clear, and turn outcomes into governed model updates quickly. Long delays between discussion and repository update reduce confidence and encourage unofficial copies of the architecture to appear elsewhere.

Communication quality matters as much as model quality. The repository does not explain itself. Stakeholders need curated outputs that answer their questions clearly. Common examples include:

- executive summaries

- roadmap views

- dependency visuals

- standards catalogs

- impact snapshots

- solution review packs

These outputs should be generated from the repository, not rebuilt manually each time. For example, a technology standards review pack may show that Windows Server 2012 is now in “retire” status, identify the applications still hosted on it, and present the agreed remediation timeline for the architecture board.

Another practical example is merger integration. After an acquisition, architects often need to brief executives on overlap across application portfolios. A concise repository-driven view can show that both firms run separate HR, CRM, and document management platforms, highlight the duplicated capabilities, identify integration dependencies, and support a rationalization decision. Without curated views, the same discussion tends to happen repeatedly in PowerPoint, with different numbers each time.

A mature EA practice therefore treats collaboration as part of the operating model. The repository enables participation, but standards, ownership, review discipline, and audience-specific communication are what make that participation effective. When stakeholders see that published views are current, governed, and traceable to the underlying model, trust in architecture increases.

6. Automate Reporting, Documentation, and Quality Assurance

As repositories mature, manual upkeep becomes one of the biggest threats to long-term value. Architects spend too much time producing reports, checking completeness, and maintaining catalogs by hand. Automation is what allows the repository to stay current and credible at scale.

The first priority is to automate the outputs stakeholders use repeatedly. Typical candidates include:

- application inventories

- interface catalogs

- technology standards reports

- capability-to-application mappings

- solution review packs

- lifecycle dashboards

- policy compliance reports

Automation improves consistency as much as efficiency. If the same dashboard is generated each month from the same authoritative attributes and relationships, architecture discussions become more evidence-based and less dependent on individually assembled slide decks.

As with traceability, reporting should be driven by decisions, not by generic extraction. The most useful reports answer specific questions, such as:

- Which applications are out of support?

- Which interfaces lack an owner or classification?

- Which solutions use non-standard technology?

- Which projects affect a regulated process?

- Which business capabilities depend on a platform marked for retirement?

Exception-oriented reporting is often more valuable than descriptive reporting. Stakeholders usually gain more from seeing gaps, policy breaches, and concentrations of risk than from receiving large volumes of static content.
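An exception report is usually just a targeted query. The sketch below uses an in-memory SQLite database with a deliberately simplified, hypothetical schema (`application` and `platform` tables); it is not the Sparx EA repository schema, and the rows are invented. It answers one question: which applications sit on a platform marked for retirement, and who owns them?

```python
import sqlite3

# Simplified stand-in schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE platform (name TEXT PRIMARY KEY, lifecycle TEXT);
CREATE TABLE application (name TEXT PRIMARY KEY, owner TEXT, platform TEXT);
INSERT INTO platform VALUES
  ('Windows Server 2012', 'retire'),
  ('Windows Server 2022', 'standard');
INSERT INTO application VALUES
  ('HR Portal', 'HR IT', 'Windows Server 2012'),
  ('CRM Platform', 'Sales IT', 'Windows Server 2022'),
  ('Document Archive', NULL, 'Windows Server 2012');
""")


def apps_on_retiring_platforms(connection):
    """List applications hosted on platforms in 'retire' status, flagging missing owners."""
    cursor = connection.execute("""
        SELECT a.name, a.platform, COALESCE(a.owner, 'UNASSIGNED')
        FROM application AS a
        JOIN platform AS p ON p.name = a.platform
        WHERE p.lifecycle = 'retire'
        ORDER BY a.name
    """)
    return cursor.fetchall()


for name, platform, owner in apps_on_retiring_platforms(conn):
    print(f"{name}: hosted on {platform}, owner {owner}")
```

The report shows only the exceptions, which is what makes it short enough for a governance forum to act on.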

Quality assurance should be automated in the same spirit. Rather than relying on periodic cleanup exercises, define repeatable validation checks such as:

- missing mandatory attributes

- invalid lifecycle combinations

- duplicate elements

- orphaned objects

- incorrect connector usage

- diagrams referencing deprecated content

- standards non-compliance

These checks should reflect the governance priorities established earlier. A missing owner on a production application matters more than a minor diagram formatting issue. Quality controls should therefore focus on the information needed for real decisions.
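Two of the checks listed above, orphaned objects and diagrams referencing deprecated content, are simple set operations once the model has been exported. This is a minimal sketch under that assumption; the element names, diagram names, and the use of a `retired` status to mean deprecated are all illustrative.

```python
def orphaned(elements, connectors):
    """Return elements that take part in no connector at all."""
    linked = {end for pair in connectors for end in pair}
    return sorted(e for e in elements if e not in linked)


def diagrams_with_retired_content(diagrams, status):
    """Map each diagram to the retired elements it still shows."""
    findings = {}
    for diagram, shown in diagrams.items():
        retired = sorted(e for e in shown if status.get(e) == "retired")
        if retired:
            findings[diagram] = retired
    return findings


# Illustrative export; all names are hypothetical.
elements = ["CRM Platform", "Document Archive", "Legacy Fax Gateway", "Consent Status API"]
connectors = [
    ("CRM Platform", "Consent Status API"),
    ("CRM Platform", "Document Archive"),
]
status = {"Legacy Fax Gateway": "retired", "CRM Platform": "implemented"}
diagrams = {
    "Customer Landscape": ["CRM Platform", "Legacy Fax Gateway"],
    "Consent Flow": ["CRM Platform", "Consent Status API"],
}

print(orphaned(elements, connectors))
print(diagrams_with_retired_content(diagrams, status))
```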

A practical approach is to define minimum data quality thresholds for key object types. For example, a production application may require:

- business owner

- technical owner

- lifecycle status

- criticality

- supported capability link

- hosting or platform information

If these are incomplete, the application should not appear as fully authoritative in official reporting. This ties quality assurance directly to enterprise management needs rather than to abstract modeling neatness.
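The threshold rule can be expressed as a completeness score per object. The sketch below assumes applications are exported as dictionaries keyed by the fields listed above; the key names and the default threshold of 100% are assumptions, and a given organization might accept a lower bar for some object types.

```python
# Fields required for a production application, following the list above.
REQUIRED_FIELDS = [
    "business_owner",
    "technical_owner",
    "lifecycle_status",
    "criticality",
    "supported_capability",
    "hosting_platform",
]


def completeness(app: dict) -> float:
    """Fraction of required fields that hold a non-empty value."""
    filled = sum(1 for f in REQUIRED_FIELDS if app.get(f) not in (None, ""))
    return filled / len(REQUIRED_FIELDS)


def is_reportable(app: dict, threshold: float = 1.0) -> bool:
    """True if the application meets the assumed threshold for official reporting."""
    return completeness(app) >= threshold


# Illustrative records; values are made up.
hr_portal = {
    "business_owner": "HR",
    "technical_owner": "HR IT",
    "lifecycle_status": "implemented",
    "criticality": "high",
    "supported_capability": "Workforce Management",
    "hosting_platform": "",  # missing hosting information
}
crm = dict(hr_portal, hosting_platform="Windows Server 2022")

for name, app in [("HR Portal", hr_portal), ("CRM Platform", crm)]:
    print(name, f"{completeness(app):.0%}", "reportable" if is_reportable(app) else "incomplete")
```

The same score, averaged by domain, could feed summary indicators for governance forums.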

Automation can also expose architecture debt that would otherwise remain hidden. For instance, a scheduled standards compliance report might show that twelve applications still depend on Oracle 11g, three of them supporting regulated customer processes. That single view can trigger a remediation roadmap, funding discussion, and targeted governance review. Without automation, the same issue might sit undetected across several disconnected diagrams and manually maintained inventories.

It is also useful to separate diagnostic reporting from stakeholder communication. Architects need detailed exception lists and validation findings. Executives and governance forums need summarized indicators, such as:

- completeness scores

- standards compliance trends

- technical debt exposure

- ownership gaps

- model confidence levels by domain

Scheduled publication strengthens trust even further. When web views, dashboards, catalogs, and review packs are refreshed on a predictable cadence, stakeholders know where to find current architecture information. That reduces the temptation to maintain unofficial copies in spreadsheets or presentations. Over time, the repository becomes the default source for architectural knowledge.

Automation does not replace stewardship. It amplifies it. If the metamodel is weak, the package structure inconsistent, or ownership unclear, automation will simply reproduce poor-quality content faster. But when the foundations described earlier are in place, automation turns EA into a scalable system of record that supports governance, communication, and decision-making with far less manual effort.

Conclusion

Sparx EA delivers the greatest value when it is run as an architectural capability rather than treated as just another modeling tool. The practices in this article reinforce one another. Define standards and governance so the repository has a shared language. Structure packages and ownership so that language can scale. Use traceability selectively to support real decisions. Apply notations deliberately so viewpoints complement one another instead of duplicating the same concepts. Enable collaboration through clear reviews and audience-specific communication. Then automate reporting and quality assurance so the repository remains useful over time.

Across all of these practices, the same principle holds: model with purpose. The repository should contain the information that supports enterprise decisions, and it should do so in a way that is consistent, governed, and reusable. When that happens, EA becomes more than a place to store diagrams. It becomes a trusted system of record for strategy, design, delivery, and change.

The most mature organizations use EA not only to describe the estate, but to guide how that estate evolves. They can trace a proposed change from business driver to capability, process, application, interface, and technology implication. They can see where standards are being breached, where dependencies create risk, and where investment should be focused. That is the practical result of strong EA practice: clearer communication, stronger governance, and better-informed change across the enterprise.

Frequently Asked Questions

What is Sparx Enterprise Architect used for?

Sparx Enterprise Architect (Sparx EA) is a comprehensive UML, ArchiMate, BPMN, and SysML modeling tool used for enterprise architecture, software design, requirements management, and system modeling. It supports the full architecture lifecycle from strategy through implementation.

How does Sparx EA support ArchiMate modeling?

Sparx EA natively supports ArchiMate 3.x notation through built-in MDG Technology. Architects can model the three core ArchiMate layers (Business, Application, and Technology) alongside strategy, motivation, and implementation and migration elements, create viewpoints, add tagged values, trace relationships across elements, and publish HTML reports. This makes it a widely used tool for enterprise ArchiMate modeling.

What are the benefits of a centralized Sparx EA repository?

A centralized SQL Server or PostgreSQL repository enables concurrent multi-user access, package-level security, version baselines, and governance controls. It transforms Sparx EA from an individual diagramming tool into an organization-wide architecture knowledge base.