Enterprise Architecture Governance Checklist with Examples

Use this enterprise architecture governance checklist with practical examples to improve decision-making, align IT with business goals, manage risk, and standardize architecture oversight.

Introduction

Enterprise architecture governance is what turns architecture from intent into action. Many organizations invest heavily in principles, standards, target-state models, and roadmaps, then discover that projects still move ahead with little reference to any of them. Delivery teams optimize for speed. Business sponsors respond to immediate priorities. Procurement decisions are made under time and commercial pressure. In that setting, architecture can become something people endorse in principle but bypass in practice.

Governance closes that gap. It defines how architecture decisions are made, who has authority, when review is required, what evidence teams need to bring, and how compliance and exceptions are managed over time. More importantly, it connects architectural direction to funding, design, procurement, delivery, and operational transition. In a large enterprise, that connection matters because business units, product teams, security, data, and infrastructure functions rarely move at the same pace or to the same priorities.

Effective governance does not have to be heavy. Its purpose is not to maximize review activity or centralize every technology choice. The aim is to apply the right level of control to decisions with genuine enterprise impact. A small enhancement to an internal workflow tool should not go through the same process as a new customer platform that processes regulated data, introduces AI services, or creates multi-region cloud dependencies. Mature architecture teams understand this and design governance around proportional review, clear decision rights, approved patterns, and controlled exceptions.

A good governance checklist shifts review away from opinion and toward evidence. Instead of asking whether a proposal simply “looks aligned,” reviewers can assess concrete factors such as business capability impact, conformance to standards, data ownership, integration design, security controls, operational readiness, and lifecycle implications. That makes decisions more consistent across portfolios and easier to explain to executives, auditors, and delivery leaders.

Visibility is just as important as rigor. Governance usually attracts resistance when it shows up late as an unexplained gate. A shared checklist makes expectations visible much earlier. Teams know what artifacts to prepare, where issues are likely to arise, and when a formal exception may be needed. Used well, governance becomes part of design rather than a barrier placed in front of it.

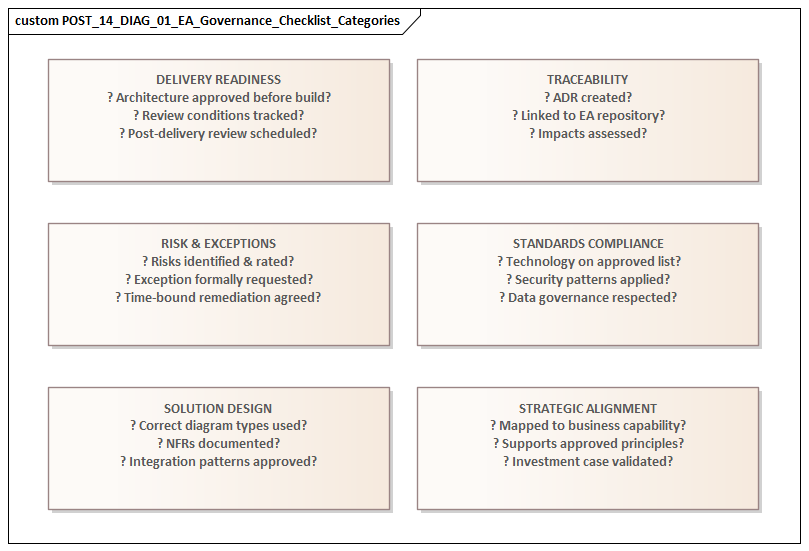

This article presents a practical enterprise architecture governance checklist across six areas: governance purpose, design principles, roles and decision rights, operating processes, standards and risk controls, and continuous improvement. The sections build in sequence. The article begins with what governance is and why it matters, then sets out the principles behind an effective model, and finally moves into the checklist elements that make governance work in practice. Short examples throughout show how governance can support delivery speed while still protecting enterprise coherence, resilience, and strategic alignment.

1. What Enterprise Architecture Governance Is and Why It Matters

Enterprise architecture governance is the management system that connects architectural intent to execution. If enterprise architecture defines target states, standards, and roadmaps, governance determines how decisions are made to move toward them. It establishes the policies, forums, accountabilities, and control points that influence investment choices, solution design, technology selection, and operational risk.

That distinction matters. Architecture produces direction. Delivery teams implement change. Governance sits between them and ensures strategic direction is interpreted consistently in day-to-day decisions. Without it, even strong architecture can be displaced by urgent projects, fragmented funding, local technology preferences, or supplier-led choices.

The need becomes clearer as organizations scale. A larger enterprise accumulates more systems, more teams, more vendors, and more overlapping processes. Decision-making spreads out. Choices that appear reasonable in isolation can gradually create enterprise-wide problems: duplicate platforms, inconsistent customer data, fragile integrations, unsupported tooling, security exposure, and rising operating cost. Governance introduces discipline by evaluating decisions not only for local benefit, but also for their cumulative effect on the wider landscape.

Its practical value is simple: architecture creates value through decisions, not diagrams. A target-state application map changes very little unless funding approvals, procurement rules, design checkpoints, and implementation controls reinforce it. An organization may declare an API-first strategy, for example, but unless projects are required to justify point-to-point integrations and governance forums can challenge nonstandard designs, the strategy remains aspirational. The same holds for event-driven architecture. Naming Kafka as the enterprise event backbone means little if teams can still default to direct database coupling or one-off message brokers without scrutiny.

Governance also helps balance standardization with autonomy. Modern enterprises cannot centralize every technical decision, and in most cases should not try. Product teams and domain-aligned delivery groups need freedom to move within clear guardrails. Effective governance therefore focuses on decisions with wider impact: cross-domain data sharing, customer-facing platforms, regulatory controls, identity patterns, hosting models, resilience requirements, and major technology choices. Lower-risk decisions should move through approved standards, reference architectures, and delegated authority. That proportional model is what keeps governance credible instead of obstructive.

Another benefit is traceability. Executives want to know whether investments are reducing complexity, increasing reuse, strengthening resilience, and advancing strategic capabilities. Governance provides evidence through review records, exception logs, standards adoption measures, and post-implementation findings. In that sense, it makes architecture visible as an operating capability rather than a conceptual discipline.

Governance also acts as a feedback mechanism. Repeated waivers, recurring design issues, and persistent noncompliance usually point to something deeper: an outdated standard, an impractical process, or a reference pattern that no longer fits delivery reality. Good governance does more than enforce architecture. It helps improve it.

A simple example illustrates the point. A retail enterprise may have a principle that all customer interactions should be exposed through standard APIs. If three separate programs still procure SaaS products that each create their own customer profile store, the issue is not a missing principle. The issue is absent or ineffective governance. Someone should have challenged the duplication, the data ownership model, and the integration consequences before contracts were signed.

2. Core Principles of an Effective Governance Model

Whether governance succeeds depends less on formal structure than on a small number of design principles. Those principles provide the foundation for the checklist that follows.

1. Proportionality

Governance should match the scale, complexity, and risk of the proposed change. If every initiative is treated the same way, governance becomes a bottleneck and teams start finding ways around it. High-impact changes such as replacing a core platform, integrating sensitive data, or selecting a major new vendor deserve deeper scrutiny. Lower-risk changes should move through lighter pathways with simpler evidence requirements. This principle shapes almost everything else: review forums, policy application, exception handling, and metrics.

2. Clarity of decision rights

Governance deteriorates quickly when people do not know who advises, who approves, and who is accountable. Enterprise architects may define principles and target states, domain architects may own technology standards, security may own control requirements, and delivery leaders may remain accountable for implementation choices within approved guardrails. Clear decision rights reduce confusion and stop governance forums from becoming discussion groups with no authority.

3. Governance by exception

Mature architecture teams do not inspect every design choice. Instead, they establish approved patterns, reference architectures, and reusable standards that teams can adopt without repeated review. Formal governance then concentrates on exceptions, novel technologies, high-risk trade-offs, and decisions with cross-domain impact. That preserves enterprise control where it matters while keeping routine delivery moving.

4. Evidence-based assessment

Governance should rest on explicit criteria and documented rationale, not personal preference. Reviews should ask for relevant evidence: business capability impact, nonfunctional requirements, integration design, data classification, operational ownership, vendor implications, and lifecycle cost. Evidence-based governance is more consistent, easier to defend, and far clearer to delivery teams because expectations are visible from the outset.

5. Early engagement

Governance works best before major commitments are locked in. If architecture review happens only at procurement or build stage, teams may already be committed to unsuitable technologies, timelines, or contracts. When governance is built into ideation, business case development, and early solution framing, architects can influence direction while change is still relatively inexpensive.

6. Lifecycle traceability

Governance should not stop at design approval. It needs to connect strategic intent, design review, implementation, release readiness, and operational outcomes. Without that continuity, governance becomes a document exercise rather than a mechanism that shapes real change.

7. Continuous adaptation

Enterprise environments change faster than static governance models. Cloud platforms evolve, delivery methods accelerate, regulatory expectations shift, and new architectural patterns emerge. Governance therefore needs regular review using evidence such as exception rates, review turnaround time, recurring control failures, and standards adoption.

Taken together, these principles create a governance model that is disciplined without becoming burdensome. They also provide a practical test: if a governance process is slow, opaque, or frequently bypassed, one or more of these principles is usually missing.

A small example makes this concrete. In one insurer, teams building low-risk internal automations were required to attend the same monthly architecture board as teams replacing policy administration platforms. The result was predictable: long queues, low-value review, and widespread bypass behavior. Splitting the process into lightweight domain review for standard changes and enterprise review for strategic or risky changes reduced turnaround times and improved compliance at the same time.

3. Governance Checklist: Roles, Policies, and Decision Rights

Once the principles are clear, the next step is to define the operating foundations of governance. In practice, many governance failures trace back to the same three issues: unclear ownership, weak policy structure, or ambiguous authority at key decision points. This section turns the principles from Section 2 into a practical checklist.

A. Roles and accountability

The first checklist item is whether governance roles are formally defined and understood beyond the architecture team itself. A workable model usually includes enterprise architects, domain architects, solution architects, security architects, data architects, platform owners, delivery leaders, and business sponsors. The important question is not simply who attends a review forum, but who is accountable for each type of decision.

Typical responsibilities may include:

- Enterprise architects: principles, target states, cross-portfolio alignment

- Domain architects: standards for cloud, integration, data, security, or infrastructure domains

- Solution architects: demonstrating that proposed solutions align with enterprise and domain guardrails

- Platform owners: shared service usage, operational standards, lifecycle requirements

- Architecture review boards: arbitration of major cross-domain issues and material exceptions

- Business or product sponsors: acceptance of cost, timing, and business-risk trade-offs

These role definitions need to be visible in delivery workflows, not buried in policy documents. If teams still have to ask who can approve an exception, or whether architecture is advisory or mandatory in a given case, governance is not yet operational.

B. Policy coverage and structure

The second checklist item is whether architecture policies are complete, current, and usable. Broad principles such as “cloud first” or “reuse before buy before build” are not enough on their own. They need supporting decision rules and clear applicability criteria.

A stronger policy set defines:

- which standards are mandatory, preferred, conditional, or advisory

- where each policy applies by business unit, platform, data type, or initiative type

- what triggers architecture review

- which technologies, patterns, and reference architectures are approved

- how exceptions and waivers are handled

- what evidence teams must provide at review

- what lifecycle obligations apply, including patching, decommissioning, and support ownership

Policy structure should also separate enterprise-wide controls from domain-specific rules. Identity, data classification, and regulatory controls may apply universally, while hosting, integration, or observability rules may vary by workload type. That distinction supports proportionality and makes policies easier to use.

C. Decision rights and escalation paths

The third checklist item is whether decision rights are explicit at each governance checkpoint. Many disputes that appear architectural are, in reality, disputes about authority. Teams need to know who can approve a standard design, who can authorize a deviation, who can accept residual risk, and when an issue must be escalated.

Decision rights should be mapped for recurring scenarios such as:

- selection of nonstandard technology

- use of regulated or sensitive data

- cross-domain integration design

- major SaaS or platform procurement

- extension of end-of-life technology

- acceptance of technical debt or resilience risk

Escalation paths should be just as clear. If architecture, security, and delivery disagree, the route to resolution should already be defined. Routine cases should move quickly. Exceptional cases should surface at the right level of accountability.

D. Micro-example: federated governance in practice

Consider a large enterprise with a federated operating model. Business units can choose local solutions within defined enterprise guardrails, while enterprise architecture retains authority over identity, customer data, event platforms, and strategic technologies.

In that model:

- a business unit can approve a local workflow tool if it uses approved identity services and does not create a new customer data store

- a domain architect can approve use of the standard Kafka pattern for publishing order events without board review

- a proposal to onboard a nonstandard SaaS platform handling regulated data must go to enterprise review and formal security risk acceptance

- a decision to extend a sunset database platform for 12 months must be escalated to a technology leadership forum with funded remediation

That is what operational governance looks like. Principles become real only when roles, policies, and decision rights are explicit.
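The federated rules in this example can be written down as an explicit lookup, which is one way to make decision rights visible in delivery workflows. The following is a minimal sketch, not a prescribed model: the `Proposal` fields, the `local_scope` distinction, and the authority labels are hypothetical names chosen to mirror the four cases above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    # All field names are hypothetical, chosen to mirror the example above.
    local_scope: bool = True
    uses_approved_identity: bool = True
    creates_customer_data_store: bool = False
    follows_approved_pattern: bool = True
    nonstandard_saas: bool = False
    handles_regulated_data: bool = False
    extends_sunset_technology: bool = False


def approving_authority(p: Proposal) -> str:
    """Return who decides, checking the most serious triggers first."""
    if p.extends_sunset_technology:
        return "technology leadership forum"
    if p.nonstandard_saas and p.handles_regulated_data:
        return "enterprise review with security risk acceptance"
    # Identity and customer data stay under enterprise architecture authority.
    if not p.uses_approved_identity or p.creates_customer_data_store:
        return "enterprise architecture"
    if p.local_scope:
        return "business unit"
    if p.follows_approved_pattern:
        return "domain architect"
    return "enterprise architecture"


print(approving_authority(Proposal()))                   # local workflow tool
print(approving_authority(Proposal(local_scope=False)))  # standard event pattern
```

Ordering the checks from most to least serious means a proposal that triggers several rules is always routed to the highest applicable authority.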

4. Governance Processes in Practice: Reviews, Exceptions, and Escalation

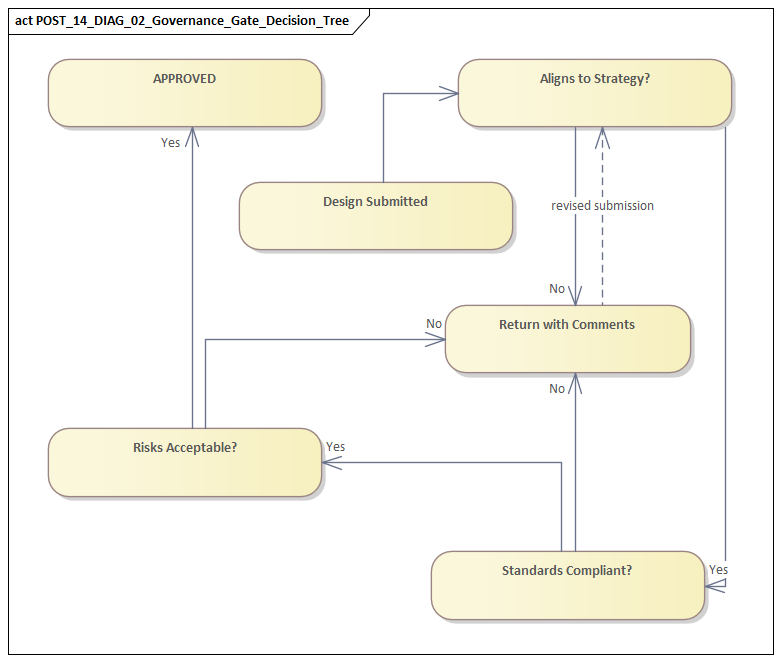

Once roles and authority are clear, governance stands or falls on the operating process. The practical question is not whether an organization has an architecture board, but whether governance activities happen at the right time, use the right evidence, and reach decisions quickly enough to influence delivery.

A. Review forums should make decisions

A common weakness is treating review boards as presentation forums instead of decision forums. Effective reviews are organized around clear outcomes: approve, approve with conditions, request changes, or escalate. That requires entry criteria, pre-read materials, and a defined agenda. Review time should not be spent discovering basic facts that should already have been documented.

As proportionality suggests, different forums are usually needed for different levels of change:

- Lightweight design checkpoints for standard project changes

- Domain reviews for integration, data, cloud, or security concerns

- Enterprise architecture reviews for cross-portfolio impact or major exceptions

- Executive or investment forums for high-cost, high-risk, or strategically significant escalations

This layered model keeps senior forums focused on enterprise-significant decisions while allowing routine cases to move quickly.
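As an illustration, the layered forums can be modeled as a simple routing function. This is a sketch under stated assumptions: the four boolean triggers are hypothetical simplifications of the criteria listed above, and a real intake process would likely use richer inputs.

```python
from enum import Enum


class Forum(Enum):
    DESIGN_CHECKPOINT = "lightweight design checkpoint"
    DOMAIN_REVIEW = "domain review"
    ENTERPRISE_REVIEW = "enterprise architecture review"
    EXECUTIVE_FORUM = "executive or investment forum"


def route_review(
    *,
    domain_concern: bool = False,
    cross_portfolio_impact: bool = False,
    major_exception: bool = False,
    strategic_escalation: bool = False,
) -> Forum:
    """Pick the lightest forum that can legitimately decide the change."""
    if strategic_escalation:
        return Forum.EXECUTIVE_FORUM
    if cross_portfolio_impact or major_exception:
        return Forum.ENTERPRISE_REVIEW
    if domain_concern:
        return Forum.DOMAIN_REVIEW
    # Standard project changes default to the lightest pathway.
    return Forum.DESIGN_CHECKPOINT


print(route_review(domain_concern=True).value)
```

Defaulting to the lightest pathway reflects the proportionality principle: heavier review has to be triggered by a specific characteristic of the change, not assumed.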

B. Review timing must fit delivery cadence

Governance loses relevance if it cannot operate at delivery speed. Product teams working in short increments cannot wait for monthly boards to resolve routine decisions. Review windows, service levels, and evidence requirements need to match the rhythm of delivery. Heavier forums should be reserved for high-impact decisions; standard cases should rely on approved patterns, delegated authority, or asynchronous review.

This is where early engagement matters. If governance appears only after procurement or build is underway, it becomes expensive and adversarial. If it is involved during initiative framing, it can shape options while trade-offs are still manageable.

C. Exception management as a controlled process

No governance model can eliminate deviations entirely. The real issue is whether exceptions are visible, justified, time-bounded, and linked to accountable risk acceptance.

A strong exception process requires teams to document:

- the standard or policy being bypassed

- the business rationale

- options considered

- risks introduced

- mitigating controls

- proposed expiry or review date

- accountable owner for remediation or ongoing management

That is substantially stronger than ad hoc approvals through email or personal influence. It also creates a reusable record of where standards may not be working in practice.
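One way to make that record consistent is to capture it as structured data. The sketch below assumes a hypothetical `ExceptionRequest` type whose fields mirror the seven items listed above; the helper names are illustrative and not taken from any particular tool.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class ExceptionRequest:
    # Field names are hypothetical; they mirror the checklist items above.
    standard_bypassed: str
    business_rationale: str
    options_considered: list[str]
    risks_introduced: list[str]
    mitigating_controls: list[str]
    expiry_date: Optional[date]
    accountable_owner: str


def missing_items(req: ExceptionRequest) -> list[str]:
    """List fields left empty so incomplete requests can be returned early."""
    return [f.name for f in fields(req) if not getattr(req, f.name)]


def is_expired(req: ExceptionRequest, today: date) -> bool:
    """An exception is expired once today is past its expiry date."""
    return req.expiry_date is not None and today > req.expiry_date


draft = ExceptionRequest(
    standard_bypassed="Approved event platform",
    business_rationale="Release deadline",
    options_considered=["Adopt standard pattern"],
    risks_introduced=["Tight database coupling"],
    mitigating_controls=[],
    expiry_date=None,
    accountable_owner="",
)
print(missing_items(draft))
```

Rejecting requests with missing items before they reach a forum keeps review time for the trade-off itself rather than for gathering basic facts.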

D. Exceptions as enterprise feedback

Exceptions should not be treated only as compliance records. They are also a source of insight. If multiple teams seek waivers for the same integration rule, cloud pattern, or vendor restriction, governance should ask whether the standard is outdated, whether the approved platform is too slow, or whether the delivery model has changed.
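A minimal sketch of that analysis, assuming the exception log can be reduced to a list of the standards that were waived. The function name and the threshold of three are hypothetical; a suitable threshold depends on portfolio size.

```python
from collections import Counter


def recurring_waivers(waived_standards: list[str], threshold: int = 3) -> dict[str, int]:
    """Return standards waived at least `threshold` times, with their counts."""
    counts = Counter(waived_standards)
    return {standard: n for standard, n in counts.items() if n >= threshold}


log = [
    "integration rule",
    "cloud pattern",
    "integration rule",
    "vendor restriction",
    "integration rule",
]
print(recurring_waivers(log))
```

A standard that appears in this output is a candidate for review in its own right, separately from the individual exceptions raised against it.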

E. Escalation and dispute resolution

Disagreements are inevitable. Architecture may emphasize long-term coherence, security may emphasize control, delivery may emphasize deadlines, and sponsors may emphasize immediate business value. Governance needs a formal way to resolve those tensions without letting them drag on indefinitely.

Escalation should be triggered when there is:

- unresolved cross-domain impact

- material security or regulatory exposure

- significant unplanned cost

- major strategic technical debt

- enterprise-wide dependency creation

The escalation process should surface trade-offs rather than positions. The question is not whether architecture or delivery “wins,” but what each option means for cost, speed, resilience, compliance, and future complexity. Framed that way, accountable leaders can make informed decisions with visible ownership.

F. Micro-example: approving a niche cloud service

A product team wants to launch a new digital service quickly and proposes using a niche cloud service that is not part of approved enterprise patterns. The domain architect accepts that the service solves a real delivery problem, but security identifies gaps in identity integration and logging, while platform operations raises support concerns.

A practical governance path would be:

- a domain review assesses technical fit and alternatives

- security documents control gaps and compensating measures

- architecture evaluates strategic impact and potential reusability

- an enterprise forum decides whether to approve a time-bound exception, require additional controls, or reject the proposal

- the decision is recorded with an expiry date and review point

This is governance doing its job: not blocking novelty by default, but making the trade-offs explicit before the enterprise absorbs the consequences.

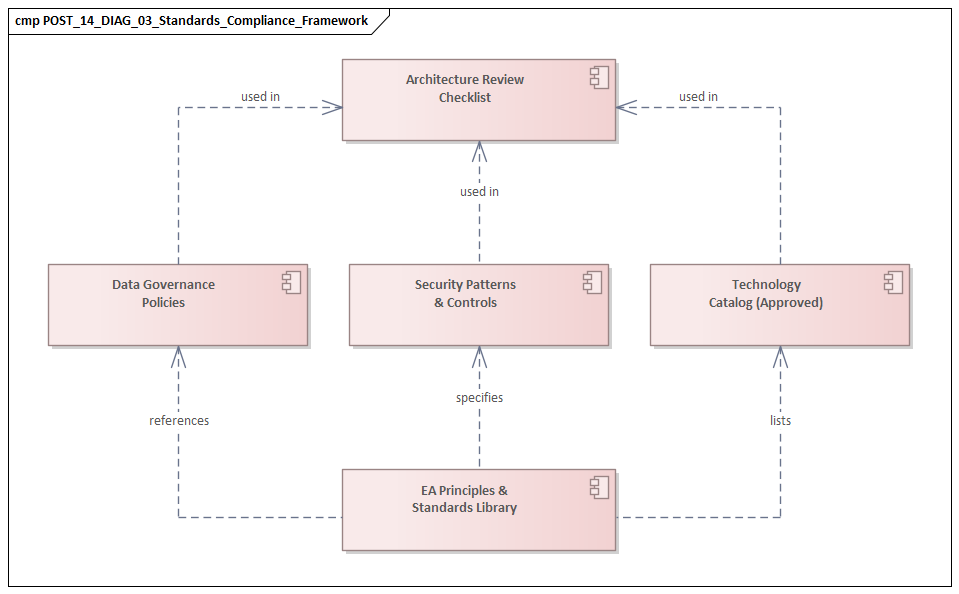

5. Standards, Compliance, and Risk Management with Examples

Standards, compliance, and risk management are where governance becomes testable in operational terms. It is one thing to define preferred technologies and principles; it is another to confirm that teams are using them, that deviations are visible, and that risks are being managed deliberately.

A. Classify standards clearly

A useful standards model distinguishes between types of control instead of treating every standard as equally critical:

- Mandatory standards: identity, encryption, logging, backup, regulatory controls

- Preferred standards: platforms, integration methods, observability tooling, deployment patterns

- Conditional standards: controls that depend on data class, region, workload, or business process

- Sunset standards: technologies that are end-of-life, restricted, or approved only for containment

This makes governance more practical. Some controls are non-negotiable. Others are optimization choices that may be waived with justification.

B. Treat compliance as a lifecycle activity

Compliance should not be reduced to a one-time design approval. A solution may be compliant during review and drift later through sprint decisions, emergency fixes, vendor changes, or infrastructure reconfiguration. Mature governance therefore checks compliance at multiple points:

- during initial design

- before production release

- after implementation or during operational review

Where possible, these checks should be automated through policy-as-code, cloud configuration scanning, API validation, software composition analysis, and infrastructure compliance tooling. Manual review still has a place, but automation makes governance more scalable and more consistent.
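To show the idea behind policy-as-code, the sketch below expresses three mandatory controls as executable rules in plain Python. It is illustrative only: the resource keys (`encrypted`, `logging`, `backup`) and the rule names are assumptions, and dedicated policy engines express such rules in their own declarative languages.

```python
from typing import Callable

Resource = dict[str, object]
Rule = tuple[str, Callable[[Resource], bool]]

# Hypothetical mandatory controls; the keys are assumed resource attributes.
RULES: list[Rule] = [
    ("encryption at rest enabled", lambda r: r.get("encrypted") is True),
    ("central logging enabled", lambda r: r.get("logging") is True),
    ("backup configured", lambda r: r.get("backup") is True),
]


def violations(resource: Resource) -> list[str]:
    """Return the names of rules the resource fails, in rule order."""
    return [name for name, check in RULES if not check(resource)]


print(violations({"encrypted": True, "logging": False}))
```

Because the same rules can run at design time, before release, and during operations, a check like this supports the lifecycle view of compliance described above.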

C. Assess risk, not just noncompliance

Governance should assess not only whether a standard was followed, but also what enterprise risk is created when it is not. Useful categories include:

- operational fragility

- cyber exposure

- vendor lock-in

- data quality risk

- resilience weakness

- support gaps

- cost volatility

This matters especially when teams request exceptions. The real question is not simply, “Is this nonstandard?” but “What risk does this introduce, who accepts it, and for how long?”

D. Micro-example: IAM modernization

An enterprise standardizes on a modern identity platform with single sign-on, MFA, and centralized lifecycle management. A business unit wants to keep a legacy application on local authentication because migration would delay release. Governance identifies the wider risks: duplicate identities, inconsistent access reviews, weak joiner-mover-leaver controls, and higher audit exposure.

A proportionate response may allow a short-lived exception with federation and MFA added immediately, while the application is scheduled into the next migration wave. That outcome protects delivery dates without normalizing a poor long-term pattern.

E. Micro-example: event architecture with Kafka

A payments team proposes direct database integration to publish transaction updates because it is faster than using the approved Kafka platform. Governance challenges the design on coupling, replay, scalability, and auditability. The team is asked either to adopt the standard event pattern or justify an exception.

In practice, the result is often a narrower and better decision: use Kafka for business events that need replay and broad consumption, while retaining batch reconciliation for low-value downstream reporting. Governance adds value here not by forcing purity, but by clarifying where standardization matters most.

F. Micro-example: technology lifecycle governance

A customer service platform still runs on a database version due to leave support within nine months. The application owner requests an extension because a replacement program is delayed. Governance should treat this as more than a technical inconvenience. It should require risk assessment, vendor support confirmation, a funded remediation date, and executive visibility if the platform supports regulated processes.

This is lifecycle governance in practice: making end-of-life risk visible before it becomes an incident, an audit finding, or a forced emergency upgrade.
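The extension scenario above can be sketched as two small helpers: one that computes how many whole months of vendor support remain, and one that lists what an extension request must include. The function names are hypothetical, and the requirements simply restate the four items from the example.

```python
from datetime import date


def months_until(end_of_support: date, today: date) -> int:
    """Whole months remaining before support ends (negative if already past)."""
    months = (end_of_support.year - today.year) * 12 + (end_of_support.month - today.month)
    if end_of_support.day < today.day:
        months -= 1  # the final month is not yet complete
    return months


def extension_requirements(supports_regulated_processes: bool) -> list[str]:
    """Evidence an extension request should carry, per the example above."""
    required = [
        "risk assessment",
        "vendor support confirmation",
        "funded remediation date",
    ]
    if supports_regulated_processes:
        required.append("executive visibility")
    return required


print(months_until(date(2025, 10, 15), date(2025, 1, 15)))
print(extension_requirements(supports_regulated_processes=True))
```

Running a check like this across the application inventory gives governance forums advance notice of platforms approaching end of support.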

6. Metrics, Continuous Improvement, and Common Pitfalls

A governance model is credible only if the organization can tell whether it is working. That takes more than counting reviews or published standards. Useful metrics show whether governance is influencing outcomes, reducing avoidable complexity, and doing so without imposing unnecessary drag on delivery.

A. Measure four things: flow, conformance, risk, and value

A practical metric set can be grouped into four categories.

1. Flow metrics

These show whether governance is timely enough to support delivery:

- review turnaround time

- percentage of reviews completed within service targets

- time to approve or reject exceptions

- percentage of initiatives engaging architecture early

These measures help reveal whether governance is acting as an enabler or a bottleneck.
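As a rough illustration, the first two flow metrics can be computed from review open and close dates. This sketch assumes each review is recorded as an `(opened, closed)` pair and uses the median as the turnaround measure; both choices, along with the function and key names, are assumptions and not a fixed method.

```python
from datetime import date
from statistics import median


def flow_metrics(reviews: list[tuple[date, date]], target_days: int) -> dict[str, float]:
    """Median turnaround and share of reviews closed within the service target."""
    if not reviews:
        raise ValueError("at least one completed review is required")
    durations = [(closed - opened).days for opened, closed in reviews]
    within_target = sum(d <= target_days for d in durations)
    return {
        "median_turnaround_days": float(median(durations)),
        "pct_within_target": 100 * within_target / len(durations),
    }


completed = [
    (date(2025, 1, 1), date(2025, 1, 4)),
    (date(2025, 1, 2), date(2025, 1, 12)),
    (date(2025, 1, 5), date(2025, 1, 10)),
    (date(2025, 1, 6), date(2025, 1, 8)),
]
print(flow_metrics(completed, target_days=5))
```

The median is used here because a few long-running reviews can distort an average; reporting both would also be reasonable.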

2. Conformance metrics

These show how consistently teams align with architectural direction:

- adoption rates for approved platforms

- percentage of solutions using standard integration patterns

- number of unsupported technologies introduced

- count of expired exceptions still open

Tracking these by business unit or portfolio often reveals patterns that a single enterprise average hides.

3. Risk metrics

These show the enterprise consequences of architectural decisions:

- number of high-risk exceptions approved

- concentration of systems on sunset technology

- unresolved resilience gaps

- audit findings linked to architecture noncompliance

- incidents associated with nonstandard designs

These metrics move the conversation from abstract standards to visible exposure.

4. Value metrics

These are often the hardest to define, but they matter most for executive credibility:

- reduction in duplicate platforms

- increased reuse of shared services

- lower integration cost through standard APIs and event patterns

- faster onboarding to approved cloud patterns

- improved delivery predictability when reference architectures are used

Value metrics show that governance is improving enterprise economics, not merely enforcing process.

B. Use metrics to improve the model

Metrics matter only if they lead to change. A quarterly governance health review can bring together architecture, security, delivery, and platform leaders to examine trends, recurring exceptions, slow decision points, and standards that are routinely bypassed. The purpose should be learning, not blame.

One of the most useful techniques is to analyze exceptions as data. If teams repeatedly seek waivers for the same rule, the organization should ask whether the problem is poor discipline, an unrealistic standard, or a missing platform capability.

C. Common pitfalls to avoid

Several governance pitfalls appear repeatedly across enterprises:

- Measuring activity instead of impact: high review volume may indicate overload, not effectiveness.

- Late-stage governance: if review happens after key commitments, governance becomes reactive and adversarial.

- Permanent exceptions: repeatedly extended waivers undermine authority and become an alternative operating model.

- Centralized overreach: pulling every design decision into enterprise forums slows delivery and encourages bypass behavior.

- Opaque decision-making: if teams cannot understand why decisions are made, governance is seen as subjective.

- No feedback loop: if metrics and exceptions never influence standards, platforms, or investment priorities, governance stagnates.

D. Micro-example: improving cloud onboarding

Suppose governance metrics show that 40 percent of cloud-related exceptions involve requests for temporary development environments outside the standard landing zone. A weak response would be to tighten approvals. A stronger response would be to ask why teams are bypassing the standard path in the first place.

If the approved onboarding process takes six weeks and experimentation requires same-day provisioning, the issue is not only compliance. It is also governance design and platform capability. The right improvement may be a pre-approved lightweight sandbox pattern with automated controls.

That kind of response signals governance maturity: using evidence to improve the operating model rather than simply increasing enforcement.

Conclusion

An enterprise architecture governance checklist is useful only if it becomes part of how change is actually run. The real test is whether governance improves decision quality at the moments that matter most: funding, design, technology selection, exception approval, and operational handover.

Strong governance models usually share the same traits. They apply proportional oversight, define decision rights clearly, rely on evidence rather than opinion, and govern by exception wherever possible. They also connect architecture to delivery across the full lifecycle, from early shaping through to operational reality. Those characteristics are what make governance practical rather than ceremonial.

Effective organizations do not eliminate exceptions or achieve perfect compliance. Instead, they make trade-offs visible, assign accountability clearly, and learn from recurring patterns. Roles, policies, review forums, standards, and metrics all matter, but only when they work together as a single operating capability.

For architecture leaders, the priority is to keep governance focused on enterprise-significant outcomes: coherence, resilience, security, cost control, and strategic alignment. For delivery teams, the priority is to engage early, use approved patterns where possible, and surface exceptions transparently. When both sides work from the same governance logic, architecture stops feeling like a late-stage obstacle and becomes what it should be: a practical decision discipline that helps the enterprise change with greater confidence and less fragmentation.

Frequently Asked Questions

What is architecture governance in enterprise architecture?

Architecture governance is the set of practices, processes, and standards that ensure architectural decisions are consistent, traceable, and aligned to strategy. It typically includes an architecture review board, modeling standards, lifecycle management, compliance checking, and exception handling.

How does Sparx EA support architecture governance?

Sparx EA supports governance through package-level security, model validation rules, tagged value lifecycle tracking, baseline management, and report generation. Architecture decisions, compliance status, and review outcomes can all be tracked as model elements with defined owners and statuses.

What are the key elements of an effective EA governance checklist?

An effective EA governance checklist covers: principles alignment, standards compliance, integration impact assessment, security and data classification review, requirements traceability, roadmap alignment, and operational readiness. Each gate should produce model-based evidence, not just a presentation.