
How Architecture Review Boards Use Sparx EA Models for Governance

Learn how Architecture Review Boards use Sparx Enterprise Architect models to review standards, assess solution designs, improve governance, and support consistent enterprise architecture decisions.


Introduction

Architecture Review Boards (ARBs) are most effective when they assess change against a shared view of the enterprise rather than a mix of slide decks, spreadsheets, and project narratives. In that setting, Sparx Enterprise Architect (EA) is not simply a modeling tool. It is a working repository for architectural intent, relationships, standards, decisions, and governance evidence. That matters because the quality of a review depends on context: what is changing, what it affects, which standards apply, what risks are introduced, and how the proposal fits the target state.

ARBs operate at different altitudes. Some reviews are strategic, focused on platform rationalization, cloud adoption, or target-state transformation. Others are narrower, such as approving an integration approach for a delivery team or reviewing a standards exception. Sparx EA supports both by linking business capabilities, reference architectures, applications, interfaces, data models, technologies, and decisions in one place. The board can then review a proposal in enterprise context instead of treating it as a stand-alone design.

That is the real advantage: evidence-based governance. A well-structured EA model lets reviewers test whether a proposal aligns with approved standards, reuses existing services, duplicates capability, introduces unmanaged dependencies, or creates data ownership and control issues. It also brings security, resilience, compliance, and operational concerns into view early enough to influence delivery. When those concerns are visible in the model instead of hidden in attachments, reviews become faster, clearer, and more consistent.

Sparx EA also helps preserve continuity across the architecture lifecycle. In many organizations, what is approved and what is eventually implemented drift apart. By maintaining relationships between requirements, principles, solution elements, exceptions, and review outcomes, the repository creates a traceable path from proposal to decision to follow-through. That gives governance a stronger audit trail and gives the ARB a practical institutional memory. The board can revisit earlier decisions, understand why exceptions were granted, and spot recurring design issues across portfolios.

None of this happens by default. The value of Sparx EA depends on model discipline. A repository full of inconsistent diagrams and thinly documented elements adds little to governance. To support ARB decision-making, the model has to be curated around review needs: clear viewpoints, consistent conventions, trusted reference content, and explicit links between architecture artifacts and governance decisions.

Used in that way, Sparx EA becomes a decision-support environment for the ARB. It helps the board evaluate change consistently, connect local proposals to enterprise strategy, and govern architecture through structured evidence rather than presentation quality or individual persuasion.

1. The Role of the Architecture Review Board in Enterprise Governance

The Architecture Review Board is the mechanism that turns architectural policy into operational decision-making. Enterprise principles, standards, target states, and roadmaps influence outcomes only when there is a governance forum able to apply them consistently to real initiatives. The ARB sits between enterprise intent and delivery execution and makes that connection practical.

This role is often reduced to “design approval,” but that is too narrow. A capable ARB governs architectural coherence across portfolios, programs, and products. It asks whether a proposed change supports strategic direction, duplicates existing capability, introduces unmanaged coupling, weakens non-functional integrity, or pushes cost and risk into the future. The board creates value by assessing change in context, not by critiquing isolated technical designs.

It also serves as a balancing mechanism. The board weighs innovation against control, local delivery autonomy against enterprise consistency, and short-term pragmatism against long-term sustainability. That balance matters most in federated organizations, where product teams and business units operate with a high degree of independence. Without a governance body to assess cross-cutting impact, architecture decisions fragment quickly. The pattern is familiar: duplicated platforms, inconsistent integration approaches, conflicting data ownership, and avoidable operational complexity.

A mature ARB works through defined decision rights. Not every architecture issue belongs at board level. Many decisions should remain with domain architects, solution design authorities, or platform councils. The ARB is most useful when it focuses on changes with enterprise-wide significance: exceptions to standards, major investments, new technology classes, core data integrations, strategic platform choices, or designs that materially affect interoperability, resilience, compliance, or total cost of ownership.

There is also an accountability dimension. The ARB may not own delivery, but it does own the integrity of architecture governance. That includes setting review criteria, requiring evidence, recording decisions, assigning conditions, and tracking exceptions over time. In strong operating models, the board becomes a source of institutional memory and feedback. Patterns across repeated reviews can expose systemic issues such as recurring point-to-point integration, weak data stewardship, or late security engagement. Sparx EA is particularly useful here because it can preserve those patterns in a form the board can actually use.

Consider a retail organization introducing a second customer notification platform because one program needs SMS and another needs email orchestration. In isolation, each proposal may look reasonable. Viewed through the ARB, the issue becomes broader: duplicated capability, fragmented customer communication preferences, inconsistent audit controls, and higher support overhead. Without an enterprise model, that broader impact is easy to miss.

The practical implication is straightforward. ARBs need more than polished presentations. They need a reliable way to examine affected capabilities, impacted systems, standards alignment, dependencies, risks, and decision rationale. That is where the Sparx EA model becomes essential.

2. Why Sparx EA Models Matter in Architecture Review

If the ARB’s role is to evaluate change in context, Sparx EA matters because it provides that context in a structured form. Its value is not that it stores diagrams. Its value lies in helping the board review architecture as a connected system of business, application, data, and technology concerns.

In many organizations, review evidence is scattered across slide decks, spreadsheets, policy documents, and the tacit knowledge of individual architects. That fragmentation weakens governance because the board has to reconstruct enterprise context every time it reviews a proposal. A Sparx EA repository reduces that burden by linking architectural elements and governance artifacts in one place. The ARB can then focus on the actual decision: what is changing, what standards apply, where exceptions are being requested, and what enterprise consequences follow.

That changes the quality of the review. Instead of asking teams to explain the whole environment from scratch at each meeting, reviewers can assess the delta from a known baseline. The approach supports consistent review across very different types of change, from large transformation programs to narrower solution designs. It also makes the process fairer. Decisions depend less on the presenter’s narrative skill and more on visible relationships, classifications, and evidence in the model.

Sparx EA also makes assumptions easier to surface. Many review failures happen not because a design is obviously weak, but because critical assumptions remain hidden until late in delivery. A team may assume an existing API can handle higher throughput, that a shared data source can be extended without governance consequences, or that a cloud service is compliant in every jurisdiction where the business operates. When those assumptions are captured as dependencies, constraints, requirements, risks, and decisions, the ARB can test them early.

Another reason Sparx EA matters is its ability to connect solution proposals to reference content. Review boards need to know whether a team is reusing approved patterns or introducing unnecessary variation. That becomes much easier when solutions are linked to standards, canonical information models, reference architectures, strategic platforms, and approved integration patterns already stored in the repository. The discussion then shifts from subjective claims of compliance to observable evidence of alignment or justified divergence.

For example, an ARB reviewing IAM modernization can trace a proposed customer identity platform to enterprise security principles, regulatory controls, existing directory services, and the target-state access architecture. Instead of debating identity in the abstract, the board can see whether the proposal reduces duplicated authentication services, supports federation, and retires legacy access stores on a defined roadmap.

A second example is more operational. A bank may propose a new API gateway for a digital lending platform because the delivery team wants environment-level autonomy. In the repository, the board can see that the enterprise already operates a strategic gateway with approved security controls, developer onboarding, traffic policies, and audit integration. The discussion changes immediately. It is no longer “does the new gateway work?” but “why is a second gateway justified, and what enterprise problem does it solve that the strategic platform does not?”

Model discipline remains critical. ARBs do not need every design detail. They need the right viewpoints at the right level of abstraction. A useful review model is concise, navigable, and explicit about the decisions the board must make. The next section looks at the Sparx EA artifacts that most often support effective ARB review.

3. Core Sparx EA Artifacts Reviewed by Architecture Review Boards

An ARB rarely reviews a single diagram in isolation. More often, it reviews a linked set of artifacts that together explain scope, rationale, impact, and conformance. The most useful artifacts are the ones that help the board answer a small number of governance questions: what is changing, why it is changing, what enterprise assets are affected, what standards apply, and where risks or exceptions exist.

3.1 Business context and capability views

A common starting point is the business context. Capability maps, business process views, value streams, and ownership relationships help the board understand which business capabilities are being created, enhanced, or constrained by the change. This keeps the review anchored in enterprise intent rather than technical preference.

These views become especially important when a technically sound proposal has unclear business accountability or overlaps with other initiatives. By showing business sponsorship and capability impact early, architects help the ARB assess whether the proposal is strategically relevant and whether its scope boundaries are understood.

For instance, a healthcare provider may propose a new patient scheduling service. The application design may be sound, but the capability view reveals that appointment management is already being transformed under a wider outpatient operations program. That changes the governance question from “is this service well designed?” to “should this initiative proceed independently at all?”

3.2 Application and service landscape views

Once the business context is clear, the board typically turns to the application and service landscape. These views show which systems participate in the solution, which enterprise services are being reused, and where new components are introduced.

This is often where governance issues first become visible. The ARB needs to distinguish between a genuinely necessary addition and avoidable duplication of an existing enterprise service. Application catalogs, dependency maps, and interface relationships make that judgment more systematic. They also reveal whether the proposal creates tight coupling that could undermine maintainability, resilience, or future change.

A typical board decision might be to reject a proposed notification microservice because the model shows an existing enterprise messaging service already supports the required channels. In that case, Sparx EA helps the ARB base its decision on visible reuse opportunities rather than opinion.
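The reuse check in that example can be sketched in a few lines. This is a minimal illustration, assuming the application catalog has been exported into plain Python objects; the `Service` class, the `find_reuse_candidates` helper, and the sample data are all hypothetical and not part of Sparx EA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    """A catalogued service and the capabilities it provides (hypothetical shape)."""
    name: str
    capabilities: frozenset


def find_reuse_candidates(proposed, catalog):
    """Map each proposed capability to the existing services that already provide it."""
    overlaps = {}
    for capability in sorted(proposed.capabilities):
        providers = [s.name for s in catalog if capability in s.capabilities]
        if providers:
            overlaps[capability] = providers
    return overlaps


# Illustrative data only; not taken from a real repository.
catalog = [
    Service("Enterprise Messaging", frozenset({"email", "sms", "push"})),
    Service("Document Archive", frozenset({"archive"})),
]
proposed = Service("Notification Microservice", frozenset({"email", "sms"}))

overlaps = find_reuse_candidates(proposed, catalog)
# True when every proposed capability already has at least one provider.
fully_covered = set(overlaps) == set(proposed.capabilities)
```

When `fully_covered` is true, the proposal adds no capability the catalog lacks, which is the kind of evidence that moves the discussion from opinion to visible reuse.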

3.3 Integration and information views

Integration and information views are central because they show how systems interact and how data moves across domain boundaries. In Sparx EA, these may include information models, message schemas, interface contracts, data lineage, and flow diagrams linked to applications and services.

For many ARBs, this is one of the clearest indicators of design maturity. Poorly defined data flows, unclear system-of-record ownership, or a proliferation of point-to-point interfaces often suggest that a solution is not ready for approval. By contrast, a clear information and integration model allows the board to test alignment with data governance, integration strategy, and interoperability standards.

For instance, a team proposing an event-driven architecture using Kafka can model producers, topics, consumers, retention rules, and ownership of event schemas. The ARB can then assess whether Kafka is being used as an approved enterprise event backbone, whether sensitive events cross trust boundaries appropriately, and whether the design avoids creating another unmanaged integration silo.

A more subtle example appears in finance transformation. A team may model a new “customer” event stream that publishes legal entity changes, contact updates, and credit status changes from three different upstream systems. In the model, the ARB can see that there is no single system of record for the customer domain and no agreed canonical definition. That is not just an integration concern; it is a data governance issue that should affect approval.
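A system-of-record gap like this one can be detected mechanically once publishing relationships are modeled. The sketch below assumes a flat export of (system, entity, is-system-of-record) rows; the row layout, the system names, and the `ownership_findings` function are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical export rows: (publishing system, data entity, is_system_of_record)
flows = [
    ("Legal Entity Registry", "customer", False),
    ("CRM", "customer", False),
    ("Credit Engine", "customer", False),
    ("Order Hub", "order", True),
]


def ownership_findings(rows):
    """Report entities that have no system of record, or more than one."""
    publishers = defaultdict(list)
    owners = defaultdict(list)
    for system, entity, is_sor in rows:
        publishers[entity].append(system)
        if is_sor:
            owners[entity].append(system)

    findings = {}
    for entity, systems in publishers.items():
        if not owners[entity]:
            findings[entity] = f"no system of record among {len(systems)} publishers"
        elif len(owners[entity]) > 1:
            findings[entity] = "conflicting systems of record: " + ", ".join(owners[entity])
    return findings


findings = ownership_findings(flows)
```

With the sample rows, only the customer entity is reported, because three systems publish it and none is marked as its system of record.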

3.4 Technology and deployment views

Logical designs can appear sound until deployment implications come into view. Technology and deployment models show where the solution will run, what platforms it depends on, how environments are separated, and whether the design fits approved hosting and operational patterns.

These views are particularly important for decisions involving cloud adoption, legacy dependencies, network segmentation, resilience patterns, runtime products, and support boundaries. They help the board test assumptions about operational feasibility, lifecycle support, and technology standard alignment.

Technology lifecycle governance is a common example. A deployment view may show that a proposed service depends on a database version already classified as “contain” or “retire.” That allows the ARB to require an upgrade plan or to approve the design only as a short-lived transition state.
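A lifecycle check of this kind amounts to a lookup across deployment dependencies. In the sketch below, the "contain" and "retire" states come from the example above; the other state names, the `Technology` class, and the sample components are assumptions made for illustration.

```python
from dataclasses import dataclass

# "contain" and "retire" come from the text; other state names are assumptions.
RESTRICTED_STATES = {"contain", "retire"}


@dataclass(frozen=True)
class Technology:
    name: str
    lifecycle: str


def lifecycle_findings(depends_on):
    """Return (component, technology, state) for each dependency in a restricted state."""
    return [
        (component, tech.name, tech.lifecycle)
        for component, techs in depends_on.items()
        for tech in techs
        if tech.lifecycle in RESTRICTED_STATES
    ]


# Illustrative deployment dependencies, not real product data.
depends_on = {
    "Pricing Service": [Technology("Database v11", "retire"), Technology("Runtime v21", "invest")],
    "Pricing UI": [Technology("Web Framework v4", "maintain")],
}
findings = lifecycle_findings(depends_on)
```

Each finding gives the board a concrete element to attach an upgrade plan or a time-limited approval to.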

3.5 Requirements, principles, constraints, and decisions

Structural views are not enough on their own. The ARB also needs the governance layer around the design. Requirements traceability shows whether major solution elements address real business or control needs. Links to architecture principles and standards show whether the proposal aligns with established direction or requires formal exception handling.

Decision records are especially valuable because they expose the rationale behind significant trade-offs. The model may show, for example, why a synchronous integration pattern was chosen, why a temporary technology deviation was accepted, or why a control enhancement was deferred to a later increment.

3.6 Risks, issues, and review outcomes

Mature review boards also expect risks, issues, and review outcomes to be visible in the model. These should be attached to the relevant capabilities, applications, interfaces, or technologies rather than left in disconnected documents.

That supports a more realistic governance view. The board can see where uncertainty remains, what mitigation is planned, what conditions are attached to approval, and which concerns require follow-up. This is also the basis for traceable governance workflows.

Taken together, these artifacts allow the ARB to review architecture as an interconnected set of concerns rather than a series of isolated diagrams. The objective is not maximum documentation. It is a coherent model package that supports informed decision-making.

4. How Review Boards Assess Alignment, Standards, and Risk

The artifacts above matter only if they support clear governance tests. In practice, ARBs use Sparx EA to assess proposals across three related dimensions: alignment, standards conformance, and risk. Those dimensions are where the repository stops being descriptive and starts becoming useful.

4.1 Assessing strategic and target-state alignment

The first question is whether the proposal supports the intended enterprise direction. Reviewers need to understand whether the change advances the target state, represents a justified transition step, or moves the landscape further away from strategic intent.

Sparx EA supports this by tracing solution elements to business capabilities, target architectures, roadmaps, and transition states. That traceability helps expose proposals that are locally reasonable but strategically misaligned. A team may want to introduce a new workflow engine or data store for speed, while the enterprise is trying to consolidate onto a smaller set of strategic platforms. In the model, that misalignment becomes visible through missing or conflicting links to target-state building blocks.

Alignment does not always mean immediate conformity. Sometimes the board approves a transitional design, but only if the model makes the transition explicit. A temporary departure from the target state can be acceptable if the destination, timing, and exit conditions are clear.

4.2 Assessing standards conformance

The second question is whether the proposal conforms to enterprise standards in a meaningful way. ARBs are not interested in standards as a checklist exercise alone. They want to know whether standards are being used to improve interoperability, operability, security, and maintainability.

In Sparx EA, standards can be represented through reference architectures, approved patterns, technology taxonomies, lifecycle classifications, control requirements, and architecture principles. Reviewers can then test whether solution elements are classified correctly, whether interfaces follow approved patterns, whether technologies sit within supported lifecycle states, and whether mandatory controls have been addressed.

This creates a more disciplined review than relying on narrative claims of alignment. The model either shows the relevant relationships and classifications, or it shows that they are missing.

4.3 Assessing exceptions

In practice, many proposals will not align perfectly with standards. That is not automatically a governance failure. What matters is whether the deviation is explicit, justified, time-bounded, and understood in enterprise terms.

Sparx EA can support this by linking solution elements to waiver records, decision elements, exception metadata, or remediation milestones. This helps the board distinguish deliberate deviation from accidental non-compliance. It also enables portfolio-level visibility. Repeated exceptions in the same area may point to weak delivery discipline, unrealistic standards, or both.

A good example is a manufacturing firm that allows a plant automation solution to use a non-standard historian database because of vendor certification constraints. The exception may be valid, but the model should still show the affected systems, support boundaries, security controls, review period, and retirement trigger. Without that context, a temporary exception has a habit of becoming permanent architecture.
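The attributes that make an exception reviewable can be checked before it reaches the board. The following sketch assumes exception records are available as simple objects; the field names and the `exception_gaps` function are hypothetical, and the actual modeling of waivers in a repository may differ.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ExceptionRecord:
    standard: str
    element: str
    justification: str
    review_due: Optional[date]
    retirement_trigger: str


def exception_gaps(record, today):
    """List the reasons an exception is not explicit, justified, and time-bounded."""
    gaps = []
    if not record.justification.strip():
        gaps.append("missing justification")
    if record.review_due is None:
        gaps.append("no review date")
    elif record.review_due < today:
        gaps.append("review overdue")
    if not record.retirement_trigger.strip():
        gaps.append("no retirement trigger")
    return gaps


# Illustrative record based on the historian example; dates are invented.
record = ExceptionRecord(
    standard="Database standard",
    element="Plant Historian",
    justification="Vendor certification constraint",
    review_due=date(2025, 1, 31),
    retirement_trigger="",
)
gaps = exception_gaps(record, today=date(2025, 3, 1))
```

An empty result means the exception carries the minimum governance context; a non-empty one is an early sign that a temporary deviation is drifting toward permanence.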

4.4 Assessing architecture risk

The third question is risk. ARBs are generally less concerned with generic project risk than with architecture risk: fragility, unclear ownership, unmanaged dependencies, resilience weaknesses, control gaps, and design choices that constrain future change.

This is where a connected model is especially useful. Reviewers can see that a critical service depends on a legacy platform nearing end of life, that sensitive data crosses trust boundaries without an approved control pattern, or that a proposed interface introduces a new single point of failure. Because these risks are linked to actual architecture elements, they can be assessed in enterprise context rather than discussed in the abstract.
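Indirect exposure of this kind is a reachability question over the dependency relationships. The sketch below assumes those relationships have been extracted into an adjacency mapping; the graph contents and the `transitive_dependencies` helper are illustrative.

```python
def transitive_dependencies(graph, start):
    """Collect everything reachable from start by following dependency edges."""
    seen = set()
    stack = [start]
    while stack:
        node = stack.pop()
        for dep in graph.get(node, ()):
            if dep not in seen:  # also guards against cycles
                seen.add(dep)
                stack.append(dep)
    return seen


# Illustrative dependency edges: key depends on each listed value.
graph = {
    "Lending Portal": ["Credit API", "Identity Service"],
    "Credit API": ["Scoring Engine"],
    "Scoring Engine": ["Legacy Mainframe"],
    "Identity Service": ["Directory"],
}
end_of_life = {"Legacy Mainframe"}

# Platforms nearing end of life that the portal relies on, directly or indirectly.
exposed = transitive_dependencies(graph, "Lending Portal") & end_of_life
```

Here the portal never references the mainframe directly, yet the traversal shows it depends on it three hops away, which a single diagram could easily hide.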

4.5 Comparing options rather than describing one design

Strong reviews are comparative, not merely descriptive. The most useful question is not just whether the preferred design is acceptable, but whether it is the best available option under enterprise constraints.

Sparx EA supports this when architects model alternatives, transition architectures, and trade-off decisions rather than presenting only a final design. That gives the ARB visibility into the reasoning behind the recommendation and makes accepted risks explicit.

For example, a logistics company choosing between direct ERP integration and an event-based order hub can model both options against latency, reuse, operational ownership, and future onboarding of carriers. The board is then reviewing a decision, not just a diagram. That usually leads to better governance outcomes because trade-offs are visible rather than implied.
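One simple way to make such a comparison explicit is a weighted score per option. The sketch below uses the four criteria from the example; the weights and the 1 to 5 scores are invented for illustration, and a weighted sum is only one possible technique, not a method the board is assumed to use.

```python
# Hypothetical weights (higher means more important to the enterprise).
WEIGHTS = {"latency": 2, "reuse": 3, "operational_ownership": 2, "carrier_onboarding": 3}

# Hypothetical scores on a 1-5 scale for each option.
OPTIONS = {
    "direct ERP integration": {
        "latency": 4, "reuse": 2, "operational_ownership": 3, "carrier_onboarding": 1,
    },
    "event-based order hub": {
        "latency": 3, "reuse": 4, "operational_ownership": 3, "carrier_onboarding": 5,
    },
}


def weighted_total(scores, weights):
    """Sum of weight * score over every criterion; fails loudly on a missing score."""
    return sum(weights[criterion] * scores[criterion] for criterion in weights)


totals = {name: weighted_total(scores, WEIGHTS) for name, scores in OPTIONS.items()}
preferred = max(totals, key=totals.get)
```

The totals matter less than the fact that weights and scores are written down, so the board can challenge an individual judgment instead of the overall recommendation.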

In short, the model supports governance when it helps the board see where the proposal fits, where it diverges, and where it may fail. The next section extends that by showing how these assessments become durable decisions and follow-up actions.

5. Decision-Making, Traceability, and Governance Workflows in Sparx EA

Effective review does not end when the board reaches a decision. Conclusions need to be recorded, linked to the architecture, and followed through. This is one of the clearest ways Sparx EA moves beyond documentation support into governance support.

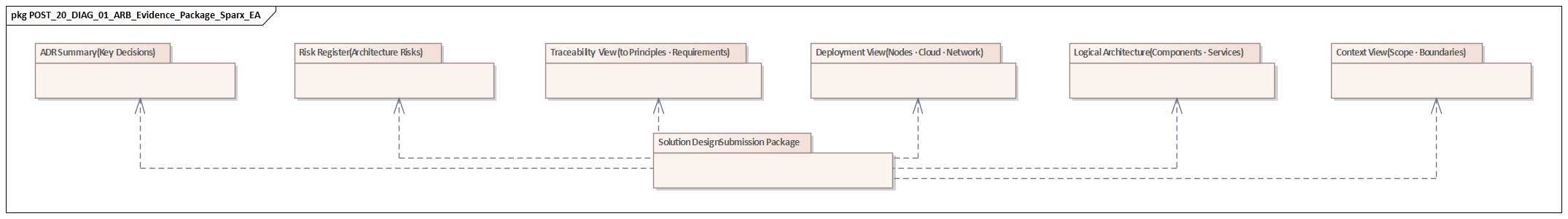

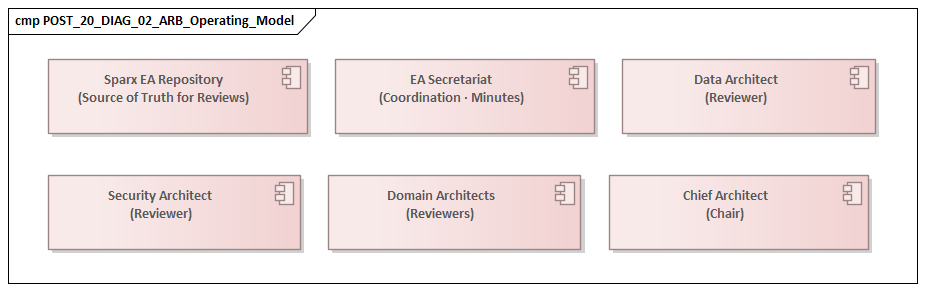

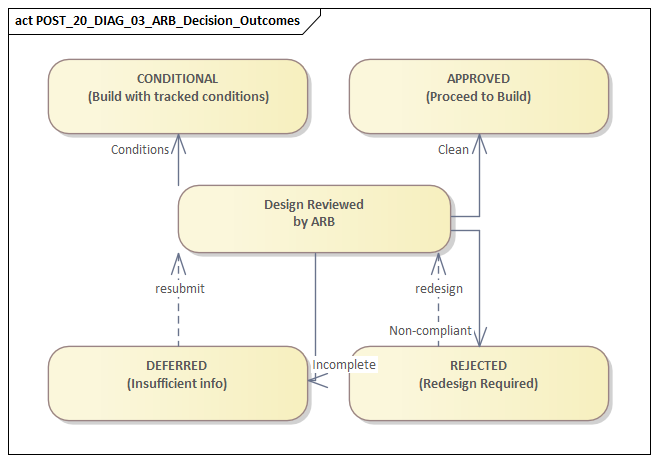

5.1 Capturing the governance workflow

A proposal usually passes through several stages: submission, architectural assessment, standards and risk review, board decision, conditional approval or rejection, and later verification. Sparx EA can represent these stages through package status, workflow states, review elements, decision records, and linked actions.

This helps the ARB understand whether a proposal is mature enough for review and where it sits in the governance lifecycle. It also addresses a common failure mode: initiatives reaching the board before the necessary analysis is complete.
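The stage sequence can be expressed as a small state machine that rejects out-of-order moves. This sketch models only the stages listed above; the `Stage` enum, the transition table, and the `advance` function are assumptions for illustration and do not describe a built-in Sparx EA workflow feature.

```python
from enum import Enum


class Stage(Enum):
    SUBMITTED = "submitted"
    ASSESSMENT = "architectural assessment"
    STANDARDS_RISK_REVIEW = "standards and risk review"
    BOARD_DECISION = "board decision"
    CONDITIONALLY_APPROVED = "conditional approval"
    REJECTED = "rejected"
    VERIFIED = "verified"


# Allowed next stages; the strict linear ordering is an assumption.
ALLOWED = {
    Stage.SUBMITTED: {Stage.ASSESSMENT},
    Stage.ASSESSMENT: {Stage.STANDARDS_RISK_REVIEW},
    Stage.STANDARDS_RISK_REVIEW: {Stage.BOARD_DECISION},
    Stage.BOARD_DECISION: {Stage.CONDITIONALLY_APPROVED, Stage.REJECTED},
    Stage.CONDITIONALLY_APPROVED: {Stage.VERIFIED},
    Stage.REJECTED: set(),
    Stage.VERIFIED: set(),
}


def advance(current, target):
    """Return the target stage, or raise if the move skips required analysis."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value!r} cannot move to {target.value!r}")
    return target


stage = advance(Stage.SUBMITTED, Stage.ASSESSMENT)
```

Attempting `advance(Stage.SUBMITTED, Stage.BOARD_DECISION)` raises a `ValueError`, which mirrors the rule that a proposal should not reach the board before assessment is complete.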

5.2 Recording decisions with context

Board decisions often become hard to interpret later because the rationale is scattered across meeting minutes or email threads. In Sparx EA, decision elements can be linked directly to affected capabilities, applications, interfaces, technologies, standards, and risks. This preserves not only the decision itself but also the context in which it was made.

That matters when teams, reviewers, or strategic priorities change. If a later review asks why a legacy platform was retained, why a non-standard integration pattern was accepted, or why a data ownership issue was deferred, the repository can provide an answer grounded in the original architectural context.

A realistic example is an ARB decision to approve an IAM modernization phase on the condition that workforce authentication remains on the existing directory for six months while customer identity moves to a new federated platform. In the model, that decision can be linked to transition architectures, risk acceptance, and the retirement milestone for the legacy access service.

5.3 Managing conditional approvals

Many ARB decisions are conditional. A design may be approved subject to completion of a threat model, retirement of a temporary interface, clarification of system-of-record ownership, or migration to a strategic platform in a later release.

If those conditions exist only in meeting minutes, they are easily lost. In Sparx EA, they can be represented as actions, constraints, issues, or linked work items attached to the relevant architecture elements. That allows later checkpoint reviews to test whether the conditions were actually met. It also keeps governance attached to the design rather than separated from it.

A common example is a cloud migration approved on the condition that production logging is integrated with the enterprise SIEM before go-live. If that condition is linked to the deployment model, operational controls, and release milestone, the board can verify closure. If it lives only in a PDF, it will likely be rediscovered during an audit.
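Verifying closure before a milestone is straightforward once conditions are structured data linked to elements. The sketch below assumes a hypothetical `Condition` record; the dates and element names are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Condition:
    description: str
    element: str  # the architecture element the condition is attached to
    due: date
    closed: bool = False


def blocking_conditions(conditions, milestone):
    """Open conditions that were due on or before the milestone date."""
    return [c for c in conditions if not c.closed and c.due <= milestone]


go_live = date(2025, 6, 30)
conditions = [
    Condition("Integrate production logging with enterprise SIEM", "Deployment: prod", date(2025, 6, 15)),
    Condition("Complete threat model", "Solution: migration", date(2025, 5, 1), closed=True),
    Condition("Retire temporary interface", "Interface: batch export", date(2025, 9, 30)),
]
blockers = blocking_conditions(conditions, go_live)
```

Only the SIEM condition blocks go-live here: the threat model is closed and the interface retirement is not yet due.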

5.4 Enabling portfolio-level governance visibility

Repository discipline becomes even more valuable when governance metadata is applied consistently. Standard stereotypes, tagged values, and status conventions can support reporting on open exceptions, recurring standards breaches, overdue remediation actions, and decisions awaiting closure.

This gives the ARB a view beyond individual reviews. It can identify patterns across portfolios, such as repeated dependency on unsupported technologies, chronic delays in control remediation, or widespread requests for the same exception. In that sense, the repository becomes a learning tool for the architecture practice as well as a review tool for individual proposals.
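Spotting repeated requests for the same exception is a simple aggregation over exception records. The sketch below assumes records exported as (solution, standard) pairs; the threshold of three and the sample records are arbitrary illustrations.

```python
from collections import Counter


def recurring_exceptions(records, threshold=3):
    """Return standards with at least `threshold` exceptions across the portfolio."""
    counts = Counter(standard for _solution, standard in records)
    return {standard: n for standard, n in counts.items() if n >= threshold}


# Illustrative (solution, standard the exception was raised against) pairs.
records = [
    ("Lending Platform", "API gateway standard"),
    ("Claims Portal", "API gateway standard"),
    ("Partner Hub", "API gateway standard"),
    ("Plant Automation", "Database standard"),
]
hotspots = recurring_exceptions(records)
```

A standard that appears here is a candidate for review in its own right, since the pattern may point to an unrealistic standard as much as to weak delivery discipline.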

5.5 Linking decisions to transition architectures and roadmaps

Many ARB decisions concern staged movement toward a target state rather than immediate conformity. A proposal may be acceptable only as an interim step, with explicit commitments to converge later on strategic services, canonical models, or approved hosting patterns.

When those commitments are modeled as part of transition architectures and roadmap increments, the board can govern technical debt more deliberately. Temporary deviations remain visible, reviewable, and time-bounded instead of becoming permanent through neglect.

The practical lesson is simple: a review process is only as strong as its follow-through. Sparx EA strengthens governance when the repository connects architecture evidence, board decisions, approval conditions, and transition obligations in one traceable structure.

6. Common Challenges and Best Practices

The earlier sections describe what effective ARB use of Sparx EA looks like. In practice, organizations often struggle to reach that point. The obstacles usually have less to do with tool capability than with model discipline, curation, and usability in real governance settings.

6.1 Challenge: over-modeling

One of the most common problems is too much detail. Architects sometimes bring extensive low-level design content into ARB review packages, obscuring the enterprise questions the board actually needs to assess. The result is noise rather than clarity.

Best practice: prepare layered viewpoints. Start with a concise review view focused on business impact, affected systems, integration, standards position, risks, and requested decisions. Support it with drill-down detail only where deeper scrutiny is likely to be needed.

6.2 Challenge: inconsistent modeling conventions

If different teams model similar concepts in different ways, the board cannot compare proposals reliably. One architect may use formal application components, another may rely on generic boxes, and a third may create free-form diagrams with few real relationships. Review quality then depends too heavily on presenter explanation.

Best practice: define a lightweight ARB review metamodel. It should specify the minimum artifact types, naming conventions, lifecycle states, relationship types, and governance metadata needed for review. It does not need to constrain every modeling activity in the repository, but it should standardize what the board relies on.
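A lightweight metamodel of this kind can be enforced with a small validation step before review. The required fields and element types below are assumptions chosen for illustration; each organization would define its own.

```python
# Hypothetical minimum metadata and element types for review-ready content.
REQUIRED_FIELDS = {"name", "type", "owner", "lifecycle"}
ALLOWED_TYPES = {"capability", "application", "interface", "technology"}


def validate_element(element):
    """Return a list of problems; an empty list means the element is review-ready."""
    problems = [f"missing {f}" for f in sorted(REQUIRED_FIELDS) if not element.get(f)]
    if element.get("type") and element["type"] not in ALLOWED_TYPES:
        problems.append(f"unknown type {element['type']!r}")
    return problems


problems = validate_element({"name": "Order Hub", "type": "box"})
```

For the generic "box" element above, the check reports the missing owner and lifecycle state as well as the unrecognized type.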

6.3 Challenge: weak reference content

ARBs depend on reference content as much as on solution models. If standards, approved patterns, target-state definitions, or canonical data models are outdated or hard to find, teams will either ignore them or interpret them inconsistently.

Best practice: assign stewardship for the governance backbone of the repository. Technology standards, reference architectures, integration patterns, security controls, and strategic platform definitions should have named owners and maintenance cycles.

6.4 Challenge: low model credibility

Reviewers stop trusting the repository if diagrams do not reflect reality, interfaces are missing, or decisions and exceptions are not kept current. Once trust is lost, teams revert to slide-driven governance.

Best practice: treat review-critical content as governed data. Core inventories, interface relationships, lifecycle statuses, and exception records should have owners, quality controls, and periodic review. The goal is not perfection everywhere, but confidence in the content used for governance decisions.

6.5 Challenge: poor linkage to delivery governance

Even when ARB decisions are well recorded, they may have little practical effect if they are disconnected from delivery planning, risk management, or later design checkpoints.

Best practice: establish explicit handoffs between architecture governance and delivery governance. Conditions of approval should map to accountable owners, target dates, and checkpoint reviews. External workflow tools may still be used, but the architecture repository should remain the authoritative source of architectural context.

6.6 Challenge: uneven Sparx EA fluency among reviewers

Not every ARB participant will be comfortable navigating a detailed model. Business stakeholders, security leads, and senior technologists may prefer curated outputs rather than direct repository exploration.

Best practice: improve consumption, not just modeling. Use curated review diagrams, clear legends, consistent status indicators, short decision summaries, and structured navigation paths. The objective is to make the model reviewable by mixed audiences without weakening modeling rigor.

One practical technique is to create a standard ARB review package in EA: a business context diagram, a solution context view, an integration view, a standards and exceptions summary, and a decision page. Reviewers learn where to look, and teams learn what “review-ready” means. That consistency often improves governance more than adding more model detail.
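A "review-ready" check for that package can be as simple as comparing the submitted views with the standard list. The view names below come from the paragraph above; the `missing_views` helper and the idea of matching on view names are assumptions for illustration.

```python
# The five views named in the standard ARB review package.
REQUIRED_VIEWS = (
    "business context diagram",
    "solution context view",
    "integration view",
    "standards and exceptions summary",
    "decision page",
)


def missing_views(package_views):
    """Return required views absent from the package, in the standard order."""
    present = {v.strip().lower() for v in package_views}
    return [v for v in REQUIRED_VIEWS if v not in present]


gaps = missing_views(["Business Context Diagram", "Integration View"])
```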

Overall, the most effective use of Sparx EA in ARBs combines disciplined modeling with disciplined consumption. Success depends less on repository volume than on review readiness: consistent viewpoints, trusted reference content, clear ownership, and governance workflows that connect model evidence to real decisions.

Conclusion

Sparx EA delivers the most value to an Architecture Review Board when it is treated as a governance asset rather than a diagram repository. That distinction runs through the entire review lifecycle described here. The ARB’s task is not simply to judge whether a design is technically coherent. It is to determine whether the organization is making sustainable architecture decisions under real business and delivery constraints.

For that reason, the most useful Sparx EA models are the ones that support a small set of core governance questions: what is changing, how it aligns to strategy, what standards apply, where risks and dependencies exist, and what decisions or exceptions are required. The specific artifacts reviewed by the board, the alignment and risk assessments it performs, and the traceable workflows it relies on all serve that purpose.

There is also a broader organizational benefit. Over time, ARB use of Sparx EA exposes patterns that individual reviews cannot. Repeated exceptions may reveal unrealistic standards. Persistent duplication of services may indicate weak platform discoverability. Ongoing disputes over data ownership may point to operating model issues rather than isolated design flaws. Continued use of technologies in “retire” status may show that lifecycle governance is not reaching delivery teams early enough.

Ultimately, the strongest review boards use Sparx EA to create continuity between strategy, solution design, governance decisions, and implementation accountability. When that continuity exists, architecture governance becomes more than a control mechanism. It becomes a practical way to improve decision quality, reduce avoidable fragmentation, and guide enterprise change with greater confidence.

Frequently Asked Questions

What is an Architecture Review Board (ARB)?

An ARB is a governance body responsible for reviewing architecture decisions, ensuring compliance with enterprise standards, and approving or rejecting solution proposals. It typically includes enterprise architects, domain architects, security, and sometimes business representatives.

How does Sparx EA support an ARB?

Sparx EA gives the ARB access to model evidence rather than relying on slides alone. Architects submit impact analyses, traceability views, integration landscapes, and compliance assessments generated directly from the Sparx EA repository. Reviewers can then interrogate the architecture rather than accept a presentation at face value.

What should an ARB evidence package contain?

A good ARB evidence package should include a context diagram showing the solution scope, an impact analysis showing affected capabilities and applications, a requirements traceability view, an integration view showing new and modified interfaces, and a risks and constraints summary. All of these should be generated from the architecture model.