Architecture Governance with Sparx EA in a Large Transformation Program

Learn how to establish architecture governance with Sparx Enterprise Architect in a large transformation program. Explore governance frameworks, model management, traceability, standards compliance, and decision support for enterprise-scale delivery.

Introduction

Architecture governance becomes critical once a transformation program moves beyond a small set of coordinated projects and begins reshaping multiple business capabilities, platforms, and delivery teams at the same time. At that point, the problem is no longer the production of architecture artifacts. The harder task is establishing enough control to guide decisions across the program without creating so much overhead that delivery teams route around governance altogether.

Large transformations bring parallel workstreams, external vendors, evolving target states, and tightly coupled dependencies. Business processes, applications, data, integration, security, and infrastructure rarely move at the same pace. Without a governance mechanism that connects those layers, teams make sensible local decisions that collectively produce poor enterprise outcomes. The symptoms are predictable: duplicated capabilities, inconsistent technology choices, blurred ownership, unmanaged exceptions, and solution decisions taken in delivery forums with little enterprise context.

In that setting, Sparx Enterprise Architect (EA) is most useful when it is treated as more than a modeling tool or document store. Used well, it becomes a control point for architectural intent, traceability, and decision assurance. It can hold the governed baseline of principles, standards, reference architectures, target-state models, solution designs, and transition dependencies. More importantly, it can connect them. That traceability is what gives governance practical value. Reviews no longer depend only on presentation quality or individual interpretation; they can draw on evidence of alignment, impact, standards compliance, and dependency risk.

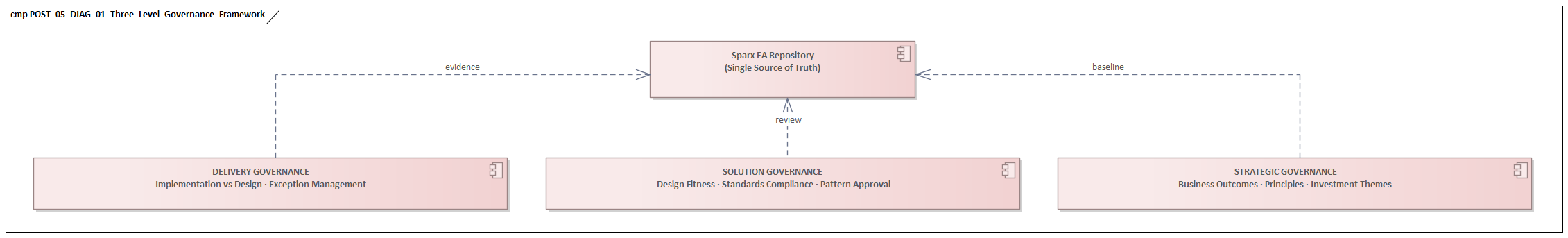

Architecture governance in a large program also operates at several levels. Strategic governance checks whether initiatives remain aligned to business outcomes, target capabilities, and investment themes. Solution governance checks whether designs are fit for purpose and consistent with approved patterns. Delivery governance checks whether implementation is drifting from approved design intent. Sparx EA can support all three, but only when the repository is structured deliberately, ownership is clear, and governance activities are built into the delivery lifecycle.

The purpose of governance is not administrative control for its own sake. It is disciplined decision-making. Good governance makes design intent visible, allows deviations to be assessed consciously, and preserves continuity between planning and execution. In a large transformation, variation and compromise are inevitable. The aim is not to eliminate them, but to manage them deliberately. Sparx EA is most effective when it is positioned as part of that operating model: a repository of record, a mechanism for traceability, and a practical enabler of architectural control at scale.

1. The Role of Architecture Governance in Large-Scale Transformation

The central problem in large-scale transformation is not a shortage of architecture content. Most programs generate plenty of diagrams, principles, and design papers. What is usually missing is a mechanism that turns architecture into coordinated action. Governance provides that mechanism. It translates architectural intent into repeatable decision-making across a program where multiple teams are changing processes, applications, data, integration patterns, and technology platforms at the same time.

In a single-project environment, architecture review can often be handled through periodic checkpoints and relatively stable assumptions. A large transformation program is different. Priorities move. Sequencing changes. Vendors introduce constraints. Legacy realities force compromise. Governance therefore has to operate as a continuing discipline rather than a one-off validation step. It must support both conformance and controlled deviation. The real question is rarely whether every solution matches the target state perfectly. It is whether departures are understood, justified, and time-bound.

This is where governance adds value beyond compliance. It links architecture to investment and delivery decisions. If several workstreams depend on the same master data capability, integration platform, or identity service, governance should expose those dependencies early enough to influence scope, sequencing, and funding. In one retail transformation, three customer-facing initiatives initially proposed separate authentication extensions for web, mobile, and partner channels. Governance redirected them to a single enterprise IAM platform, avoiding duplicated build effort and a future identity consolidation program.

Governance becomes especially important during transition states, because that is where architecture quality often starts to erode. Large programs rarely move directly from current state to target state. They pass through interim states in which legacy and modern platforms coexist, duplicated processes remain temporarily in place, and data is synchronized across old and new environments. Without governance, those interim arrangements harden into accidental architectures: expedient in the short term, expensive and persistent in the long term. A sound governance model treats transition states as deliberate design constructs. It defines what is temporary, why it exists, how long it is acceptable, and what conditions will trigger its retirement.

Another essential role is clarifying accountability. In complex programs, decision rights can blur between enterprise architects, domain architects, solution architects, product owners, program managers, and delivery leads. Governance establishes who approves principles, who owns standards, who can accept risk, and who is responsible for maintaining traceability between business outcomes and technical change. Many architecture failures stem less from weak design than from unresolved ownership and inconsistent decisions made in different forums.

A practical governance model has to be light enough to support delivery cadence and structured enough to maintain control across domains. It should provide clear review criteria, visible decision records, and an explicit escalation path when trade-offs are needed. In a regulated banking program, for example, a payments team may be allowed to proceed with a tactical batch interface to meet a statutory deadline, but only if the exception is approved by the right forum, linked to a retirement milestone, and funded for removal in a later release.

Governance is therefore not a brake on delivery. It is the means by which variation is made visible and managed deliberately rather than allowed to accumulate unnoticed.

2. Positioning Sparx EA as the Governance Backbone

If governance is the operating discipline, Sparx EA is the platform that gives that discipline continuity and evidence. To play that role, EA has to be more than a repository for diagrams produced after decisions have already been made. It needs to function as the authoritative environment in which key architectural objects, relationships, and decisions are maintained in a controlled and reusable form.

A common failure pattern is to use EA as a documentation archive. Diagrams are stored for reference, but the repository does not influence delivery behavior. A governance backbone requires the opposite approach. The repository should contain the core objects that governance needs to control change: business capabilities, value streams, applications, information entities, interfaces, technology components, principles, standards, risks, approved patterns, and transition states. Just as important, those objects must be linked. A solution should be traceable to the business capability it supports, the applications and data it affects, the standards it must comply with, and the roadmap stage to which it belongs. Those relationships are what make governance evidence-based rather than opinion-based.

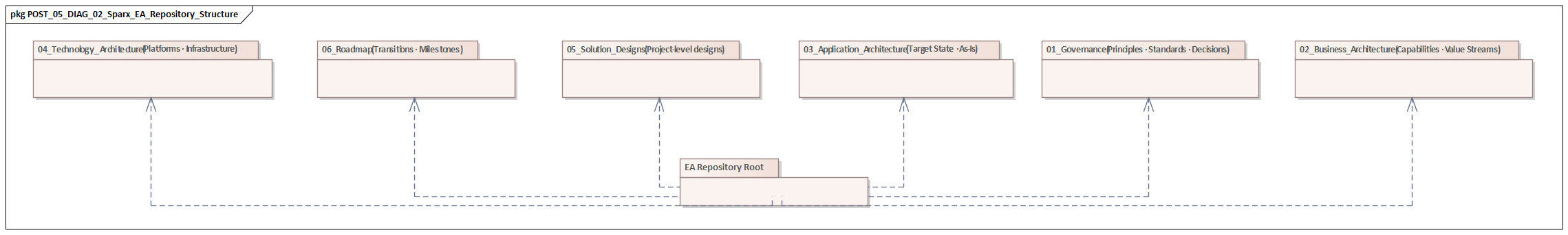

Repository structure matters as much as repository content. In a large program, EA should support both enterprise continuity and initiative execution. Stable architectural assets such as principles, standards, reference architectures, and canonical information models should be maintained as controlled baseline content. Program and project packages should then consume or specialize those assets without duplicating them. This separation helps governance teams distinguish between enterprise direction and initiative-specific design decisions. It also reduces the risk that different workstreams create parallel definitions of the same concept.

The repository should reflect the multiple levels of governance. Strategic governance needs views of business outcomes, capabilities, target states, and investment themes. Solution governance needs views of solution structures, dependencies, standards obligations, and exception status. Delivery governance needs enough linkage to implementation artifacts to check whether build decisions still align to approved design intent. EA does not need to become every tool in the delivery toolchain, but it does need to preserve the architectural relationships that keep those governance levels connected.

Decision visibility is another core requirement. In large programs, architecture decisions often end up scattered across meeting minutes, slide decks, approval emails, and personal notes. That weakens continuity and makes later review difficult. In EA, decisions, issues, and exceptions can be linked directly to affected elements. This creates a durable record of why a standard was waived, why a temporary integration was accepted, or why a dependency was deferred. Consider a manufacturing program introducing an event-driven integration model. The target pattern may mandate Kafka for new domain events, while one factory control system is temporarily allowed to continue sending nightly flat files because the vendor upgrade is eighteen months away. If both the target pattern and the exception are captured in EA against the affected interfaces and transition roadmap, the compromise remains visible and governable.

To establish EA as the governance backbone, operating discipline is essential. Ownership of repository areas must be clear. Update expectations must align with delivery milestones. Model quality standards need to be applied consistently. The objective is not to model everything. It is to model what governance needs in order to control change. When that principle is followed, EA becomes the shared architectural control system the rest of the governance model can rely on.

3. Defining Governance Structures, Decision Rights, and Review Cadences

A well-structured repository does not create governance by itself. EA provides the backbone, but governance only becomes effective when it is supported by a clear operating model. In a large transformation program, that means defining governance forums, decision rights, and review cadences in a way that matches both enterprise complexity and delivery speed.

The first step is to separate governance forums by purpose. Large programs rarely succeed with a single architecture board responsible for every decision. In practice, at least three levels are usually required.

Strategic architecture governance focuses on target-state alignment, investment implications, enterprise principles, and major exceptions. It addresses questions such as whether an initiative supports the intended business capability model, whether a platform choice is consistent with enterprise direction, or whether a deviation introduces enterprise-wide risk.

Domain or design governance focuses on cross-solution consistency within specific areas such as business architecture, data, applications, integration, security, or technology. It ensures that recurring patterns are applied consistently and that solutions do not conflict within a domain.

Solution governance focuses on initiative-specific designs. It checks fitness for purpose, conformance to approved patterns, and impact on dependencies already in flight.

These forums should complement one another rather than duplicate effort. Decisions should be taken at the lowest competent level, with only material deviations, cross-domain conflicts, or principle-level exceptions escalated upward. That escalation model is critical if governance is to remain proportionate and avoid becoming a bottleneck. For example, a solution review may approve an API design within an established integration pattern, while a strategic board reserves the right to approve or reject the introduction of an entirely new integration platform.

Decision rights need to be defined precisely. It is rarely enough to say that “architecture approves designs.” A workable governance model distinguishes between approval, endorsement, consultation, and risk acceptance. Enterprise architecture may approve deviations from standards. Security may approve control exceptions. Data governance may approve classification or retention impacts. Program leadership may accept delivery-driven risk where timelines force compromise. These distinctions should be visible in EA through ownership, status, and approval metadata attached to standards, solution packages, and exception records.
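
The distinction between decision types and the authority that holds each one can be written down as a simple lookup. The sketch below is illustrative only: the table keys, the role names, and the `approval_issue` helper are hypothetical and are not part of Sparx EA. In practice the same information would more likely live in approval metadata on the relevant elements.

```python
from typing import Dict, Optional

# Hypothetical decision-rights table: decision type -> accountable authority.
DECISION_RIGHTS: Dict[str, str] = {
    "standards deviation": "enterprise architecture",
    "control exception": "security",
    "classification or retention impact": "data governance",
    "delivery-driven risk acceptance": "program leadership",
}


def approval_issue(decision_type: str, approved_by: Optional[str]) -> Optional[str]:
    """Return a description of the problem, or None if the approval is valid."""
    owner = DECISION_RIGHTS.get(decision_type)
    if owner is None:
        return f"no decision right defined for '{decision_type}'"
    if approved_by is None:
        return f"awaiting approval from {owner}"
    if approved_by != owner:
        return f"approved by {approved_by}, but {owner} holds this decision right"
    return None
```

A check of this kind makes it harder for a control exception to be signed off in a delivery forum that has no authority to accept it.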

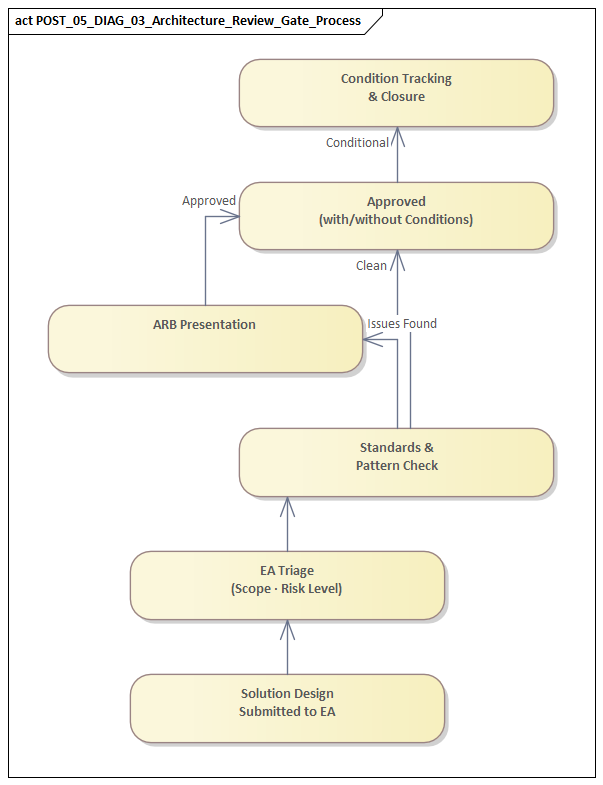

Review timing is equally important. Governance often fails because reviews happen after major commitments have already been made. Effective governance requires touchpoints that match the delivery lifecycle. At minimum, there should be:

- Early shaping reviews, when scope and options are still flexible

- Formal design reviews, before build, procurement, or contractual commitments are locked in

- Implementation assurance reviews, during delivery, to check for drift from approved design intent

For high-risk initiatives, additional reviews may be needed at transition-state milestones, especially where interim integrations, data migration waves, or coexistence architectures introduce significant complexity.

Review criteria should be standardized but proportionate. Not every change deserves the same level of scrutiny. A minor enhancement to an existing service should not follow the same governance path as a new platform introduction or a major process redesign. Programs usually benefit from defining review triggers based on architectural novelty, cross-domain impact, regulatory exposure, data sensitivity, and dependency criticality. EA can support this by classifying solution artifacts and linking them to predefined review checklists and required evidence.
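
One way to make proportionate review concrete is to derive the review path from the triggers listed above. The following is a minimal sketch, not a prescribed method: the `ChangeProfile` fields, the thresholds, and the names of the review paths are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ChangeProfile:
    """Hypothetical classification attributes for a proposed change."""
    name: str
    architectural_novelty: bool  # introduces a new platform or pattern
    cross_domain_impact: bool
    regulatory_exposure: bool
    sensitive_data: bool
    critical_dependency: bool


def review_path(change: ChangeProfile) -> str:
    """Map review triggers to a governance path (thresholds are illustrative)."""
    triggers = sum([
        change.architectural_novelty,
        change.cross_domain_impact,
        change.regulatory_exposure,
        change.sensitive_data,
        change.critical_dependency,
    ])
    # Assumption: novelty alone is enough to reach the strategic forum.
    if change.architectural_novelty or triggers >= 3:
        return "strategic board"
    if triggers >= 1:
        return "formal design review"
    return "lightweight peer review"
```

Under these assumed thresholds, a minor enhancement with no triggers stays on the lightweight path, while a new platform introduction is always escalated.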

A mature governance model also needs a deliberate way to handle exceptions. Controlled deviation is part of real transformation. The objective is not to eliminate exceptions, but to make them visible, justified, time-bound, and traceable. Exception registers linked directly to EA elements provide an effective mechanism for doing this. They allow governance teams to distinguish between approved transitional variance and unmanaged divergence.
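
The four properties named above (visible, justified, time-bound, traceable) can be checked mechanically once exceptions are held as structured records. The sketch below assumes a hypothetical `ExceptionRecord` shape; the field names are illustrative and do not reflect a Sparx EA data structure.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class ExceptionRecord:
    """Hypothetical exception register entry."""
    element: str                # affected architecture element (traceable)
    standard: str               # standard or pattern being deviated from
    justification: str          # why the deviation is acceptable
    approved_by: Optional[str]  # forum that approved it, if any
    retire_by: Optional[date]   # agreed retirement date (time-bound)


def governance_gaps(record: ExceptionRecord, today: date) -> List[str]:
    """List the reasons a record does not count as a managed exception."""
    gaps = []
    if not record.justification.strip():
        gaps.append("no justification")
    if record.approved_by is None:
        gaps.append("not approved")
    if record.retire_by is None:
        gaps.append("no retirement date")
    elif record.retire_by < today:
        gaps.append("past retirement date")
    return gaps
```

An empty result indicates approved transitional variance; any other result points to the kind of unmanaged divergence the register is meant to expose.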

Without that structure, review forums tend to drift into one of two unhelpful modes: rubber-stamping or endless debate. With it, governance becomes faster, clearer, and easier for delivery teams to navigate.

4. Establishing Standards, Reference Architectures, and Compliance Controls in Sparx EA

The governance structures described above only become effective when they are supported by clear architectural control mechanisms. In practice, that means standards, reference architectures, and compliance expectations must be explicit, accessible, and connected to solution design inside Sparx EA.

A useful starting point is to treat standards as modeled assets rather than static text in policy documents. Technology standards, integration principles, security controls, data handling rules, and design patterns should exist in EA with clear metadata: status, owner, applicability, rationale, review date, and approved alternatives. This matters because standards in large transformation programs are rarely universal. Some apply only to cloud-native solutions, some only to regulated data domains, and some only during a transition period while legacy platforms remain active. Capturing that context in the repository allows standards to be applied intelligently rather than as generic compliance statements.

Reference architectures provide the next layer of practical control. Their value lies in bridging the gap between principle and implementation. A reference architecture should do more than illustrate a concept. It should define the expected structural pattern for recurring solution types: customer-facing digital services, event-driven integration, master data management, analytics platforms, identity-enabled applications, and similar patterns that appear repeatedly across the program. In EA, these can be modeled as reusable building blocks with prescribed relationships, required controls, and approved technology options.

A clear example is an event architecture in which domain services publish business events to Kafka, downstream consumers subscribe asynchronously, and point-to-point middleware is allowed only by exception. Another might be a digital channel pattern requiring customer applications to authenticate through a central identity provider, consume enterprise APIs through an API gateway, and avoid direct database access to core systems. When those patterns are modeled in EA, delivery teams can derive solution views from them instead of inventing structures from scratch.

It is important to distinguish between levels of obligation. Many governance models lose credibility because they present all guidance as equally binding. In reality, programs need a more nuanced structure. Standards and patterns should typically be classified as:

- Mandatory: must be followed unless a formal exception is approved

- Preferred: expected by default, but alternatives may be used with justification

- Conditional: applicable only in specific contexts

- Deprecated: still present but targeted for retirement

This distinction helps review forums assess compliance realistically. Diverging from a preferred pattern may only require explanation. Using a deprecated technology may require remediation planning. Failing to implement a mandatory security control may require formal escalation. That nuance makes governance more usable and more credible.
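
The obligation levels and their consequences can be expressed as a small decision function. This is a sketch under stated assumptions: the action labels are illustrative, and the treatment of an in-scope conditional standard (handled here like a preferred one) is an assumption, since the text does not specify it.

```python
from enum import Enum


class Obligation(Enum):
    MANDATORY = "mandatory"
    PREFERRED = "preferred"
    CONDITIONAL = "conditional"
    DEPRECATED = "deprecated"


def review_action(obligation: Obligation, conforms: bool, applicable: bool = True) -> str:
    """Suggest a review response.

    `conforms` means the design follows the standard; for DEPRECATED it
    means the design avoids the deprecated item.
    """
    if obligation is Obligation.CONDITIONAL and not applicable:
        return "not applicable"
    if conforms:
        return "no action"
    if obligation is Obligation.MANDATORY:
        return "formal exception and escalation"
    if obligation is Obligation.DEPRECATED:
        return "remediation plan"
    # PREFERRED, and CONDITIONAL when in scope (the latter is an assumption).
    return "written justification"
```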

Compliance controls should also be embedded in the architecture lifecycle rather than applied only in review meetings. EA should be used to link standards and reference architectures directly to solution components, interfaces, data objects, and deployment elements. This allows architects and reviewers to test whether required controls are present, whether approved technologies are being used, and whether important relationships are missing.

For example, an application handling sensitive customer data should be linked to:

- the relevant information classification,

- retention and privacy obligations,

- required security controls,

- approved integration constraints, and

- the target or transition architecture it supports.

If those links are missing, governance has early evidence of an incomplete design before implementation moves too far.
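
A completeness check of this kind is straightforward to sketch. The example below assumes the links for an element have already been extracted from the repository into a plain dictionary; the link type names and the sample application data are hypothetical.

```python
from typing import Dict, List

# Link types expected for an application handling sensitive customer data.
REQUIRED_LINK_TYPES = [
    "information classification",
    "retention and privacy obligation",
    "security control",
    "integration constraint",
    "target or transition architecture",
]


def missing_links(links: Dict[str, List[str]]) -> List[str]:
    """Return required link types that are absent or empty for an element."""
    return [kind for kind in REQUIRED_LINK_TYPES if not links.get(kind)]


# Hypothetical extract for one application.
crm_links = {
    "information classification": ["Confidential: customer PII"],
    "security control": ["Encryption at rest"],
    "target or transition architecture": ["Transition state 2"],
}

print(missing_links(crm_links))
```

For the sample data, the check reports the retention and privacy obligation and the integration constraint as missing, which is the early evidence a reviewer would want before build begins.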

Compliance should focus on architectural significance rather than exhaustive model policing. The goal is not to enforce every notation preference or naming convention. It is to assess whether the design conforms to enterprise-critical expectations: reuse of shared platforms, adherence to security and data obligations, consistency with integration strategy, and alignment with target-state technology direction. Well-designed compliance controls in EA therefore act as decision support. They direct attention to the issues that matter most while allowing low-risk areas to proceed with minimal friction.

5. Enabling Traceability from Strategy to Delivery Across the Transformation Lifecycle

Traceability is what ties the governance model together. Governance defines how decisions are made. EA provides the repository backbone. Standards and reference architectures define what good looks like. Traceability links all of that to real change as it moves from strategy into design and delivery.

In a large transformation program, traceability should begin with a small number of stable planning anchors: business outcomes, transformation objectives, target capabilities, value streams, and investment themes. These are the elements that justify change and shape prioritization. Governance becomes far stronger when solution designs can be traced not only to requirements, but beyond them to the business outcomes and capability gaps they are meant to address. That makes it possible to test whether a proposed solution is genuinely advancing the transformation or simply delivering local functionality.

As initiatives move into shaping and design, traceability should connect those strategic drivers to architecture constructs such as target-state capabilities, business processes, information concepts, applications, integrations, and technology services. This creates a navigable chain from intent to structure. If a transformation objective is to improve customer onboarding speed, the repository should show which capabilities are being enhanced, which process changes are required, which applications are affected, which data entities are authoritative, and which integration services must be introduced or modified. That visibility helps teams assess whether scope decisions remain coherent when priorities shift.
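
The navigable chain from intent to structure can be tested by walking trace links upward from a solution element until a business outcome is reached. The sketch below uses a hypothetical in-memory map of trace links rather than any Sparx EA interface; the element names are invented for the onboarding example.

```python
from typing import Dict, List, Set

# Hypothetical trace links: element -> elements it supports or realizes.
TRACES_TO: Dict[str, List[str]] = {
    "Onboarding API": ["Customer onboarding process"],
    "Customer onboarding process": ["Customer onboarding capability"],
    "Customer onboarding capability": ["Outcome: faster customer onboarding"],
    "Reporting widget": [],
}
OUTCOMES: Set[str] = {"Outcome: faster customer onboarding"}


def reachable_outcomes(element: str,
                       traces_to: Dict[str, List[str]],
                       outcomes: Set[str]) -> Set[str]:
    """Follow trace links upward and collect the business outcomes reached."""
    seen: Set[str] = set()
    found: Set[str] = set()
    stack = [element]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # guards against cycles in the trace links
        seen.add(node)
        if node in outcomes:
            found.add(node)
        stack.extend(traces_to.get(node, []))
    return found
```

An element that reaches no outcome, such as the reporting widget in this sample, is a candidate for the "local functionality" question raised earlier.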

Traceability is especially important across transition states, because large programs rarely deliver the target architecture in a single step. Work packages and solution increments should therefore be linked not only to target architectures, but also to transition architectures, roadmap milestones, and dependency relationships. This allows governance to see whether a temporary interface supports a defined migration stage, whether a legacy component is scheduled for retirement, and whether a dependency on a future platform has become a risk to current delivery.

A realistic example comes from identity modernization. A program may need a temporary coexistence model involving legacy Active Directory, a new cloud identity provider, and application-specific role mappings. That arrangement may be acceptable for a period, but only if the transition path, ownership, and retirement conditions are explicit in the repository. Without those links, the coexistence design becomes “temporary” in name only.

From a delivery assurance perspective, traceability should also extend into implementation evidence. EA does not need to replace delivery management tools, but it should preserve enough linkage for architecture decisions to remain testable during execution. Approved solution components can be linked to epics, features, releases, interfaces, controls, and, where practical, deployment or test evidence. When implementation deviates, governance can identify exactly which standards, assumptions, or target-state commitments are affected. This is particularly important in multi-vendor programs, where design intent can become diluted as responsibilities pass between teams.

Another major benefit is impact analysis. Transformation programs are dynamic. Business priorities change, regulatory requirements emerge, platform decisions are revised, and delivery schedules slip. When that happens, architects need to understand quickly which capabilities, solutions, interfaces, and transition plans are affected. A well-structured EA repository makes that possible. Instead of reconstructing dependencies from slide decks and spreadsheets, governance teams can assess consequences directly from the model and respond with greater speed and confidence.
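
Impact analysis over a dependency model amounts to a graph traversal in the reverse direction of the dependencies. The following minimal sketch assumes the dependencies have been exported into a dictionary; the element names are hypothetical and the function is not a Sparx EA feature.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical dependency map: element -> elements it depends on.
DEPENDS_ON: Dict[str, List[str]] = {
    "Web channel": ["Enterprise IAM"],
    "Mobile channel": ["Enterprise IAM"],
    "Partner portal": ["Web channel"],
    "Enterprise IAM": [],
}


def impacted_by(changed: str, depends_on: Dict[str, List[str]]) -> Set[str]:
    """Return every element that depends, directly or indirectly, on `changed`."""
    # Invert the map so we can walk from a dependency to its dependents.
    dependents: Dict[str, List[str]] = {}
    for source, targets in depends_on.items():
        for target in targets:
            dependents.setdefault(target, []).append(source)

    impacted: Set[str] = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dependent in dependents.get(node, []):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted
```

In this sample, a change to the identity platform reaches the partner portal through the web channel, which is the sort of indirect dependency that is easy to miss in slide decks.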

Traceability is not an administrative exercise. It is the mechanism that preserves decision continuity across the lifecycle. It keeps delivery connected to enterprise intent, supports deliberate governance of transition states, and allows change to be assessed in terms of business impact rather than isolated technical activity.

6. Scaling Governance Practices: Metrics, Risk Management, and Continuous Improvement

As transformation expands, governance has to do more than review designs and record decisions. It must show whether architecture control is working, where risk is accumulating, and how the governance model itself should evolve. This is the point at which many programs begin to weaken: forums continue, models grow, but there is little evidence of whether governance is improving outcomes. Because EA holds the underlying decisions, standards, and traceability, it can also become the source of governance insight.

The first step is to define a small set of metrics that support decision-making rather than simply reporting activity. Counting diagrams, meetings, or repository updates says very little about governance effectiveness. More useful measures include:

- proportion of solutions aligned to approved reference architectures,

- number of active exceptions by domain,

- age of unresolved deviations,

- traceability completeness for critical initiatives,

- frequency of repeated non-compliance against the same standard,

- duplicated capabilities or technology components emerging across workstreams,

- volume of transition-state components past their intended retirement date.

These indicators show where governance is strong and where it is weakening. Because the underlying data is already represented in the repository through statuses, tagged values, relationships, and exception records, EA can provide evidence without creating a separate reporting universe.
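
Several of these indicators reduce to simple aggregations once the relevant records are extracted. The sketch below assumes hypothetical dictionary shapes for solutions, exceptions, and transition components; the key names are illustrative and would need to be mapped to whatever statuses and tagged values a given repository uses.

```python
from collections import Counter
from datetime import date
from typing import Dict, List


def alignment_ratio(solutions: List[Dict]) -> float:
    """Proportion of solutions aligned to an approved reference architecture."""
    if not solutions:
        return 0.0
    return sum(1 for s in solutions if s["aligned"]) / len(solutions)


def active_exceptions_by_domain(exceptions: List[Dict]) -> Counter:
    """Count active exceptions per domain."""
    return Counter(e["domain"] for e in exceptions if e["status"] == "active")


def overdue_transitions(components: List[Dict], today: date) -> List[str]:
    """Transition-state components still live past their retirement date."""
    return [c["name"] for c in components
            if not c["retired"] and c["retire_by"] < today]
```

Keeping the calculations this small is deliberate: the value lies in the trend of each number over time, not in the sophistication of the report.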

Risk management should also be treated as an explicit architectural discipline. In large transformation programs, architecture risk usually appears in recurring forms: excessive transition complexity, unmanaged legacy dependencies, inconsistent data ownership, proliferation of point-to-point integrations, unsupported technology choices, and weak alignment between platform roadmaps and delivery commitments. The value of EA is that these risks can be linked directly to the affected architecture elements and assessed in context. A solution does not simply carry a generic “high risk” label. The repository can show whether the issue stems from security exposure, migration sequencing, resilience concerns, vendor lock-in, or target-state divergence.

This matters because risk treatment in transformation is rarely about eliminating risk altogether. More often, it is about making risk transparent, assigning ownership, and linking mitigation to roadmap decisions. If several initiatives rely on a temporary integration pattern that is known to be unsustainable, governance should be able to show how widespread that dependency is, which milestone is intended to remove it, and what happens if the target platform is delayed.

Technology lifecycle governance is a good example. If multiple solutions still depend on a database version marked for retirement next year, the repository should show where it is used, who owns remediation, and whether funding is in place. If that visibility is missing, the issue typically surfaces late, when remediation becomes more expensive and delivery options are narrower.
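
The three questions in that example (where is it used, who owns remediation, is funding in place) translate into a simple filter. This is a sketch only: the `TechnologyUsage` record and the technology name used in the usage note are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class TechnologyUsage:
    """Hypothetical record linking a solution to a technology it depends on."""
    solution: str
    technology: str
    remediation_owner: Optional[str]
    remediation_funded: bool


def unmanaged_usages(usages: List[TechnologyUsage],
                     retiring: Set[str]) -> List[TechnologyUsage]:
    """Usages of retiring technology that lack an owner or funding."""
    return [
        u for u in usages
        if u.technology in retiring
        and (u.remediation_owner is None or not u.remediation_funded)
    ]
```

Calling `unmanaged_usages(usages, {"LegacyDB 11"})` with an invented technology name would return only those solutions where remediation is unowned or unfunded, which is the subset that needs governance attention first.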

Continuous improvement is the third scaling discipline. Governance models that work in early program phases often become too slow, too shallow, or too fragmented as delivery expands. Review criteria may need refinement. Decision thresholds may need adjustment. Repository structures may need simplification to remain usable. Standards that generate repeated exceptions may need revision. Some controls may need to be strengthened, while others should be streamlined to reduce friction for low-risk changes.

A mature governance approach therefore examines not only architecture content, but governance performance itself. Useful questions include:

- Which review steps are detecting issues too late?

- Which standards produce repeated exceptions and may need revision?

- Which domains lack clear ownership or model quality?

- Where are teams bypassing governance because the process is disproportionate?

- Which transition-state exceptions are becoming permanent liabilities?

EA can provide evidence for these questions rather than leaving them to anecdote. Patterns in exceptions, repeated design rework, chronic traceability gaps, or ageing transitional components all indicate where governance needs to change.

At scale, architecture governance succeeds when it becomes measurable, risk-aware, and adaptive. Sparx EA supports that maturity by making structural weaknesses visible, linking risks to real architectural constructs, and providing the evidence needed to improve governance over time.

Conclusion

Architecture governance in a large transformation program works when it is treated as an operating capability rather than an approval ritual. Its purpose is disciplined decision-making: making design intent visible, assessing deviations consciously, and preserving continuity between planning and execution. Sparx EA can support that purpose when it is used as the governance backbone for repository control, decision structures, standards, traceability, and improvement.

The main lesson is straightforward. Effective governance is not created by tooling alone. EA becomes valuable only when it is integrated into the delivery lifecycle, supported by clear decision rights, and populated with the architectural relationships that governance actually needs. When that happens, reviews become evidence-based, transition states can be governed deliberately, and implementation drift becomes visible before it causes lasting damage.

In large transformation programs, the pressure to optimize locally is constant. Teams move quickly, compromises are unavoidable, and temporary solutions can easily become permanent liabilities. Governance provides the discipline that keeps those trade-offs visible and bounded. Sparx EA provides the structural memory and traceability that allow that discipline to scale.

The goal is not model completeness for its own sake. It is dependable decision quality across strategy, design, and delivery. When Sparx EA is positioned and governed in that way, it helps the enterprise move through deliberate transition states while preserving coherence in the target direction. That continuity is what prevents transformation momentum from turning into fragmentation and keeps architecture relevant under real delivery conditions.

Frequently Asked Questions

What is architecture governance in enterprise architecture?

Architecture governance is the set of practices, processes, and standards that ensure architectural decisions are consistent, traceable, and aligned to strategy. It typically includes an architecture review board, modeling standards, lifecycle management, compliance checking, and exception handling.

How does Sparx EA support architecture governance?

Sparx EA supports governance through package-level security, model validation rules, tagged value lifecycle tracking, baseline management, and report generation. Architecture decisions, compliance status, and review outcomes can all be tracked as model elements with defined owners and statuses.

What are the key elements of an effective EA governance checklist?

An effective EA governance checklist covers: principles alignment, standards compliance, integration impact assessment, security and data classification review, requirements traceability, roadmap alignment, and operational readiness. Each gate should produce model-based evidence, not just a presentation.