Implementing Architecture Governance with Sparx EA in a Large Transformation Program

Learn how to implement architecture governance with Sparx Enterprise Architect in a large transformation program. Explore governance frameworks, repository structure, standards compliance, review workflows, and stakeholder reporting.

Introduction

Architecture governance becomes indispensable once a transformation program moves beyond a handful of loosely connected projects and begins coordinating change across business units, platforms, delivery teams, and third parties. At that scale, architecture is no longer only a design activity. It becomes a mechanism for directing decisions, controlling risk, and keeping execution aligned with enterprise intent. The real challenge is not writing principles or publishing target-state diagrams. It is establishing a governance model capable of guiding hundreds of interdependent decisions without stalling delivery.

In large programs, governance usually fails for operational reasons rather than conceptual ones. Principles exist, but they are not connected to planning or funding decisions. Review boards meet, yet their conclusions are hard to trace into solution designs, risks, or implementation milestones. Teams create architecture artifacts, but those artifacts end up dispersed across slide decks, spreadsheets, collaboration sites, and local drives. Over time, it becomes difficult to determine what has been approved, what remains under review, and where delivery has already drifted from the intended architecture. A tool-supported approach addresses this by creating a governed system of record for architectural knowledge, decisions, and compliance evidence.

Sparx Enterprise Architect (Sparx EA) is well suited to that role when used as more than a document repository. It can link business capabilities, processes, applications, integrations, data, technology platforms, standards, transition states, and roadmap increments within a single model. That matters because architecture decisions in transformation programs rarely sit in isolation. A decision to modernize identity and access management, for instance, can alter application onboarding, access models, audit controls, and sequencing across several workstreams. When those relationships are visible, governance becomes more grounded and less dependent on memory or interpretation.

The value of Sparx EA does not come from modeling everything. It comes from structuring governance around decision-making. Architects and program leaders need to know which standards apply to a solution, which exceptions have been granted, what dependencies are affected, and which roadmap increments are exposed to unresolved design choices. To support that, the repository must do more than hold diagrams. It has to enable reviews, traceability, impact analysis, and evidence-based approvals.

The sections that follow take that perspective throughout. They begin with the case for governance in large transformation programs, then define the operating model, show how controls can be implemented in Sparx EA, and examine how standardization, traceability, and reuse strengthen governance. From there, the discussion turns to integration with delivery and portfolio management before closing with measures of governance effectiveness and continuous improvement. The central argument remains consistent: Sparx EA delivers the most value when it serves as the governed system of record for architecture decisions, not merely a place to store architecture content.

1. Why Architecture Governance Matters in Large Transformation Programs

Large transformation programs create a level of complexity that informal architectural coordination cannot absorb for long. Multiple workstreams progress in parallel, each with its own sponsors, delivery partners, technical constraints, and timelines. As the number of decisions increases, local optimization becomes the path of least resistance unless there is a governance mechanism strong enough to preserve enterprise coherence.

The effects of weak governance usually emerge gradually rather than through a single visible failure. Teams duplicate capabilities, fragment data flows, choose incompatible integration approaches, introduce security gaps, and increase long-term operating cost. Under delivery pressure, each decision can appear reasonable in isolation. Taken together, however, they create architectural drift. Governance exists to detect and contain that drift before it becomes the program’s default mode of operation.

That does not mean governance should reject every deviation from the target state. In a transformation program, short-term trade-offs are often unavoidable. A team may need to retain a legacy component for one release, postpone a data remediation activity, or introduce a temporary interface to meet a milestone. Governance adds value by making those trade-offs explicit. It asks what risk is being accepted, what dependency is being created, how long the exception will remain in place, and what future cost it adds to the roadmap. In that sense, governance is not simply a compliance exercise. It is a discipline for making architectural decisions consciously.

Governance also matters because transformation happens in stages. Programs move through releases, transition states, and interim solutions rather than through a single clean design-and-implement cycle. The target architecture therefore cannot be defined once and left untouched. It has to be interpreted repeatedly through delivery decisions. Governance provides the mechanism for doing that while keeping the program aligned with business priorities, regulatory expectations, and technology standards.

Another essential role of governance is to hold the architecture domains together. Business, application, data, security, and technology architects often work with different assumptions and at different levels of detail. Each domain may produce sensible recommendations, yet the overall solution can still fall out of alignment if cross-domain effects are not reviewed systematically. A business process redesign may change master data ownership. A cloud migration may alter resilience patterns, identity controls, and support responsibilities. Governance brings those implications into view before they surface as delivery failures.

This is where Sparx EA becomes particularly useful as a governed system of record. If architectural relationships, decisions, standards, and exceptions are captured in one repository, governance can work from evidence rather than fragmented documents and institutional memory. The model shows what has been approved, what is provisional, and what is non-conformant. A board decision to adopt Kafka as the default event backbone, for example, can be linked directly to affected applications, integration patterns, and transition releases instead of remaining buried in meeting minutes. Likewise, if a payments workstream proposes direct point-to-point interfaces for a short-lived migration phase, the exception can be tied to the relevant systems, risk owners, and retirement date.

Governance also creates accountability. It clarifies who reviews what, on what basis, and with what evidence. In large programs, that distinction matters. Stakeholders need to know the difference between approved design, accepted exception, identified risk, and ungoverned deviation. Without that clarity, escalation, assurance, and executive reporting all weaken.

Ultimately, governance protects the outcome after the program ends. Transformation is not successful simply because projects are delivered on time. It succeeds when the resulting enterprise landscape is supportable, coherent, and capable of further change. Decisions made during the program shape future maintenance effort, vendor dependency, platform complexity, and adaptability. Governance helps ensure that delivery pressure does not undermine that longer-term result.

2. Defining the Governance Operating Model, Roles, and Decision Rights

If governance is expected to control architectural drift, it needs an operating model that makes decisions predictable. In large programs, the problem is rarely a lack of intent. More often, teams know architecture approval is required but remain unclear about which forum owns which decision, what evidence is needed, or whether a review outcome is advisory or binding. A governance operating model removes that ambiguity.

The first step is to distinguish decision types. Not every architecture decision belongs in the same forum. Enterprise-wide standards, strategic technology choices, and target-state principles usually sit with an enterprise architecture forum. Program-level trade-offs, transition-state decisions, and cross-workstream dependencies are better handled by a transformation architecture board. Solution-specific design conformance and implementation deviations can often be addressed by domain architects or solution review panels. A layered model keeps senior forums focused on enterprise-impacting issues while allowing lower-level decisions to move at delivery speed.

Roles need to reflect how delivery actually works, not just the formal organization chart. Enterprise architects set strategic direction and define guardrails. Domain architects interpret those guardrails in business, data, application, security, or infrastructure terms. Solution architects show how a delivery increment conforms to them or where it departs. Program leadership accepts trade-offs when architecture choices affect cost, scope, or timeline. Product owners, engineering leads, service owners, and design authorities also need explicit roles, because many architecture decisions continue to shape operations long after implementation.

Decision rights are just as important as role definitions. Governance weakens quickly when architects are expected to review without authority, or when they are given authority without clear escalation limits. A practical model distinguishes between decisions architects can approve directly, decisions they can recommend for approval, and decisions they can only escalate as non-conformance.

A standard integration pattern may require only domain architect sign-off. A departure from a cybersecurity control, by contrast, may require formal risk acceptance from security leadership and a program sponsor. Similarly, an architecture board might approve an identity modernization approach based on a central identity provider and phased application onboarding, while granting a time-bound exception for two legacy platforms that cannot yet support federation. In another common scenario, a data board may approve a canonical customer model for new services while allowing a temporary duplicate customer reference in a claims platform until downstream reconciliation controls are in place. When decision rights are explicit, teams understand whether governance is acting as advisor, control point, or escalation path.
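To make the three levels of decision right concrete, here is a minimal Python sketch of a decision-rights matrix. The decision kinds, the role names, and the choice to escalate unknown kinds by default are illustrative assumptions. This is not a Sparx EA feature. In a repository, the same information could be held as tagged values on decision elements.

```python
from enum import Enum


class Authority(Enum):
    """What the reviewing architect may do with a decision (the three levels in the text)."""
    APPROVE = "approve directly"
    RECOMMEND = "recommend for approval"
    ESCALATE = "escalate as non-conformance"


# Hypothetical decision-rights matrix: kind -> (architect's authority, final approver).
DECISION_RIGHTS = {
    "standard_integration_pattern": (Authority.APPROVE, "domain architect"),
    "transition_state_tradeoff": (Authority.RECOMMEND, "transformation architecture board"),
    "security_control_deviation": (Authority.ESCALATE, "security leadership and program sponsor"),
}


def route_decision(kind: str) -> tuple[Authority, str]:
    """Return the architect's authority and the final approver.

    Unrecognized decision kinds fall back to escalation, so that nothing
    bypasses governance simply because the matrix has not caught up.
    """
    return DECISION_RIGHTS.get(kind, (Authority.ESCALATE, "transformation architecture board"))


authority, approver = route_decision("security_control_deviation")
print(f"{authority.value} -> {approver}")
```

Defaulting to escalation keeps the matrix fail-safe: a new decision type is reviewed at the higher forum until someone deliberately delegates it.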

Decision rights also need to align with artifact maturity. Early concept reviews should ask only for enough evidence to judge strategic fit, major impacts, and key constraints. Detailed design reviews should require traceable evidence such as interfaces, data classifications, technology choices, and identified exceptions. That balance prevents governance from demanding implementation detail too early while still enforcing rigor before delivery commitments harden.
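One way to encode maturity-dependent evidence is a small lookup from review stage to required evidence, as in the Python sketch below. The stage names and evidence identifiers paraphrase the paragraph above and are hypothetical labels, not Sparx EA settings.

```python
# Hypothetical evidence requirements per review stage, paraphrasing the text:
# concept reviews need strategic-fit evidence, detailed design reviews need traceable detail.
REQUIRED_EVIDENCE = {
    "concept": {"strategic_fit", "major_impacts", "key_constraints"},
    "detailed_design": {
        "interfaces",
        "data_classifications",
        "technology_choices",
        "identified_exceptions",
    },
}


def missing_evidence(stage: str, provided: set[str]) -> set[str]:
    """Return the evidence items still needed before a review at `stage` can proceed."""
    return REQUIRED_EVIDENCE[stage] - provided


print(sorted(missing_evidence("detailed_design", {"interfaces", "technology_choices"})))
# ['data_classifications', 'identified_exceptions']
```

If later gates should also re-confirm earlier evidence, the detailed-design entry could be defined as the union of both sets.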

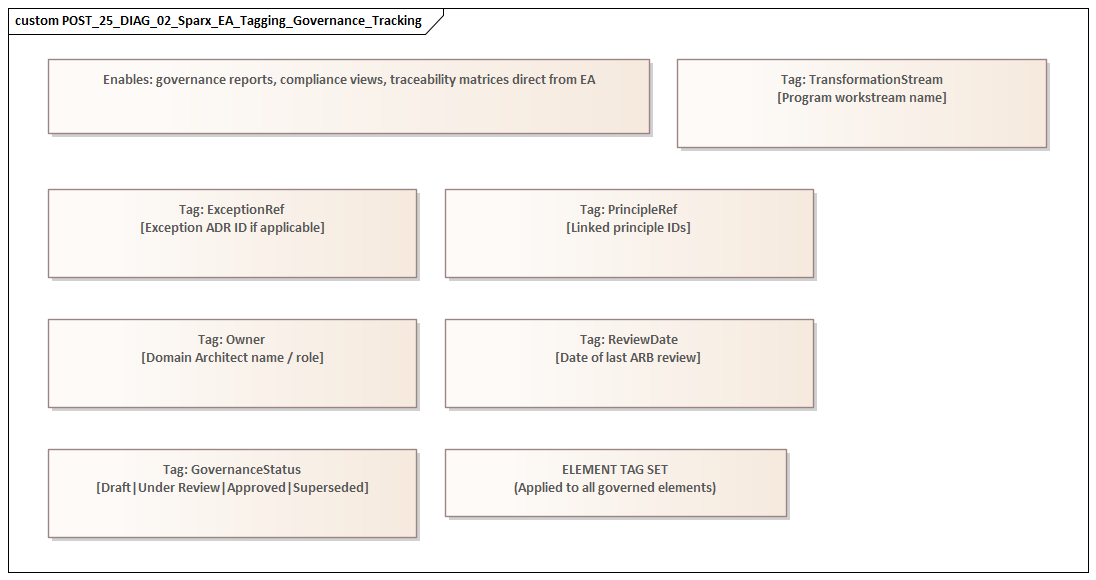

Sparx EA can help operationalize this model. Package states, tagged values, review workflows, and linked decision elements can indicate what is ready for review, who needs to review it, and what outcome has been recorded. Used properly, the repository supports not just architecture content but the mechanics of governance itself.

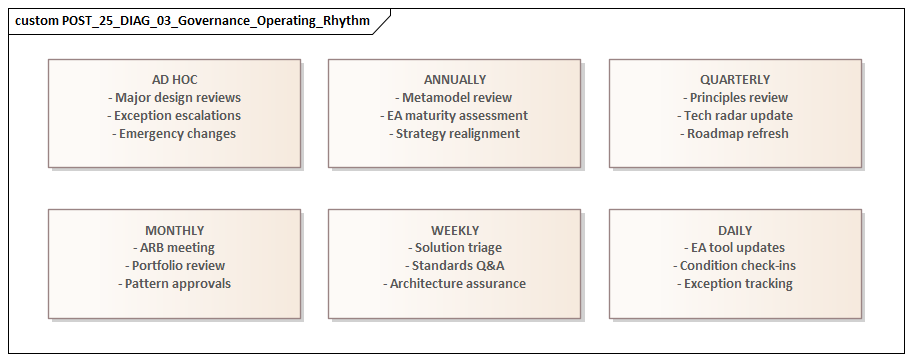

Cadence matters as well. Monthly review boards are rarely sufficient when delivery teams are making architecture-affecting decisions every week. In practice, the most workable model combines formal boards with lightweight asynchronous reviews, design clinics, and time-boxed exception handling. That keeps governance responsive without giving up formal control where it matters most. Service expectations such as review turnaround times, escalation paths, and minimum evidence requirements also help prevent architecture from being seen as a bottleneck.

The operating model turns governance from a broad intention into something teams can use. Once those structures are defined, they can be embedded in Sparx EA as practical controls.

3. Establishing Governance Processes and Controls in Sparx EA

Once the operating model is clear, the next task is to translate governance from policy into practice. In Sparx EA, that means configuring the repository so that reviews, approvals, and exceptions are visible, repeatable, and auditable. The aim is not to turn the tool into a cumbersome workflow engine. It is to make governance tangible.

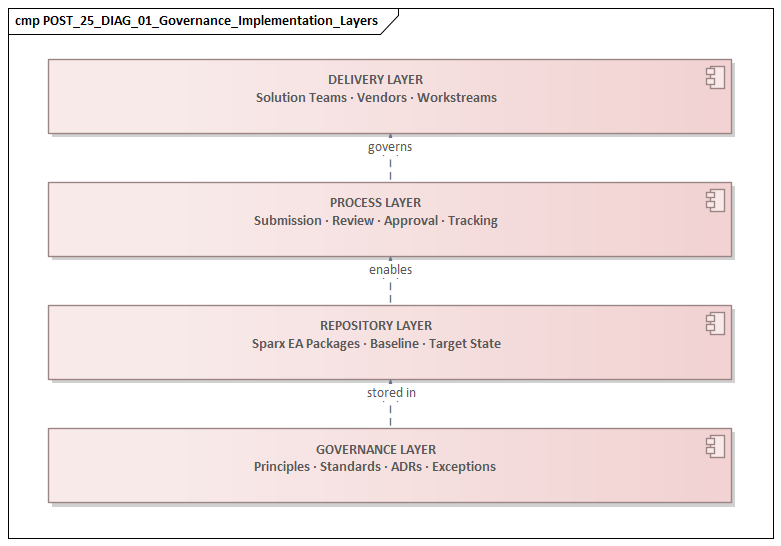

A sensible starting point is repository structure. Packages should reflect how governance is managed, not just how architecture content is categorized. A typical structure might include:

- target architecture,

- transition architectures,

- solution architectures,

- standards and patterns,

- decisions,

- risks and issues,

- exceptions,

- and roadmap or release views.

Within solution areas, package structures should align with delivery increments, workstreams, or products, because those are usually the units through which funding, milestones, and accountability are managed. That makes it easier to answer practical questions: which releases are waiting for review, which solutions are approved, and where unresolved exceptions remain.

Lifecycle control comes next. Sparx EA supports status fields, baselines, versioning, and package control mechanisms that can be combined into review states such as Draft, In Review, Approved, Conditionally Approved, Rejected, and Superseded. Those states need clear meaning. A package marked In Review, for example, should meet defined evidence criteria before it reaches a board. That may include linked requirements, impacted applications, major interfaces, key data entities, relevant standards, and identified risks. In this way, the model itself becomes part of the control framework for review readiness.
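The text names the review states but not the moves between them. The Python sketch below assumes one plausible set of transitions and adds a readiness guard on entry to In Review, using the evidence listed above. The transition table and the evidence identifiers are assumptions to adapt to the program's own rules. They do not describe built-in Sparx EA behavior.

```python
from enum import Enum
from typing import Optional


class ReviewState(Enum):
    DRAFT = "Draft"
    IN_REVIEW = "In Review"
    APPROVED = "Approved"
    CONDITIONALLY_APPROVED = "Conditionally Approved"
    REJECTED = "Rejected"
    SUPERSEDED = "Superseded"


S = ReviewState
# Assumed transition rules; adjust to the program's governance process.
ALLOWED = {
    S.DRAFT: {S.IN_REVIEW},
    S.IN_REVIEW: {S.APPROVED, S.CONDITIONALLY_APPROVED, S.REJECTED, S.DRAFT},
    S.CONDITIONALLY_APPROVED: {S.APPROVED, S.SUPERSEDED},
    S.APPROVED: {S.SUPERSEDED},
    S.REJECTED: {S.DRAFT},
    S.SUPERSEDED: set(),
}

# Evidence a package must link before it may enter In Review (from the text).
READINESS_EVIDENCE = {
    "requirements", "impacted_applications", "major_interfaces",
    "key_data_entities", "relevant_standards", "identified_risks",
}


def transition(current: ReviewState, target: ReviewState,
               evidence: Optional[set[str]] = None) -> ReviewState:
    """Move a package to `target`, rejecting illegal moves and unready review submissions."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not an allowed transition")
    if target is S.IN_REVIEW:
        missing = READINESS_EVIDENCE - (evidence or set())
        if missing:
            raise ValueError(f"not review-ready, missing: {sorted(missing)}")
    return target


state = transition(S.DRAFT, S.IN_REVIEW, set(READINESS_EVIDENCE))
state = transition(state, S.CONDITIONALLY_APPROVED)
print(state.value)  # Conditionally Approved
```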

Standards compliance should also be represented directly in the repository. Governance works best when it evaluates explicit trade-offs rather than undocumented assumptions. Approved patterns, technology standards, security controls, and data principles can therefore be modeled as reference elements and linked to solution components. Solutions can then be assessed not only by what they contain but by how they align with architectural guardrails. Technology lifecycle governance is a useful example: if Oracle 11g is marked as “retire” and PostgreSQL on the strategic cloud platform is marked as “standard,” new solution designs can be checked explicitly against that policy before procurement or build begins.
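The lifecycle check in the example above reduces to a lookup. The Python sketch below uses a hypothetical in-memory register that mirrors the Oracle 11g and PostgreSQL entries. In practice, those statuses would come from the standards package in the repository. Technologies missing from the register are reported as "unassessed" rather than silently passed.

```python
# Hypothetical lifecycle register mirroring the example in the text.
TECH_LIFECYCLE = {
    "Oracle 11g": "retire",
    "PostgreSQL (strategic cloud platform)": "standard",
}


def check_design(components: dict[str, str]) -> list[str]:
    """Flag components of a new design whose technology is not marked 'standard'."""
    findings = []
    for component, technology in components.items():
        status = TECH_LIFECYCLE.get(technology, "unassessed")
        if status != "standard":
            findings.append(f"{component}: {technology} is '{status}'")
    return findings


# Hypothetical solution design: component name -> chosen technology.
design = {
    "claims-db": "Oracle 11g",
    "pricing-db": "PostgreSQL (strategic cloud platform)",
}
print(check_design(design))  # ["claims-db: Oracle 11g is 'retire'"]
```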

Where a solution diverges, the deviation should be captured as an exception element rather than disappearing into meeting notes. At minimum, an exception should record:

- rationale,

- impacted components,

- risk rating,

- owner,

- approving authority,

- approval date,

- and sunset or review date.

This is particularly important in transformation programs, where temporary exceptions have a habit of becoming permanent unless they are visible and actively managed. Consider a realistic migration case: a customer communications workstream may need to retain an on-premise file transfer mechanism for six months because a regulator-facing partner cannot yet consume APIs. If that exception is modeled properly, governance can see the affected interfaces, the operational risk, the target retirement milestone, and the release dependency tied to partner readiness.
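The minimum exception fields listed above map naturally onto a record type. The Python sketch below models them and flags exceptions with no sunset date or a sunset date that has passed. The field names, dates, and owner are hypothetical values built from the file-transfer example. They do not represent a Sparx EA schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ArchitectureException:
    """Minimum fields the text says an exception should record (names are illustrative)."""
    rationale: str
    impacted_components: list[str]
    risk_rating: str
    owner: str
    approving_authority: str
    approval_date: date
    sunset_date: Optional[date]  # None means no sunset recorded, itself a governance gap


def needs_attention(exc: ArchitectureException, today: date) -> Optional[str]:
    """Return a reason to revisit the exception, or None if it is still in order."""
    if exc.sunset_date is None:
        return "no sunset date"
    if exc.sunset_date < today:
        return f"sunset passed {(today - exc.sunset_date).days} days ago"
    return None


# Hypothetical record for the file-transfer example; dates assume a six-month exception.
file_transfer = ArchitectureException(
    rationale="Regulator-facing partner cannot yet consume APIs",
    impacted_components=["on-premise file transfer", "customer communications"],
    risk_rating="medium",
    owner="customer communications workstream lead",
    approving_authority="transformation architecture board",
    approval_date=date(2024, 1, 15),
    sunset_date=date(2024, 7, 15),
)
print(needs_attention(file_transfer, today=date(2024, 8, 1)))  # sunset passed 17 days ago
```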

Decision traceability is another core control. Architecture decisions should exist as first-class elements in the repository, linked to affected capabilities, applications, interfaces, technologies, and roadmap increments. That makes impact analysis possible when assumptions change, and it gives review forums a way to revisit prior decisions in context. It also strengthens accountability by showing whether implementation reflects an approved decision, an accepted exception, or an ungoverned deviation.
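When decisions are linked to the elements they affect, impact analysis becomes a graph traversal. The Python sketch below performs a breadth-first search over a hypothetical set of trace links, with a Kafka decision as the starting point. The element names are illustrative. In Sparx EA, these links would be connectors in the model rather than a Python dictionary.

```python
from collections import deque

# Hypothetical trace links: element -> elements it directly affects.
TRACE = {
    "DEC-001 Kafka as default event backbone": ["CRM", "Billing", "Event integration pattern"],
    "CRM": ["Customer status interface"],
    "Billing": ["Release 2.1"],
    "Customer status interface": ["Release 2.1"],
    "Event integration pattern": [],
}


def impacted_by(start: str, links: dict[str, list[str]]) -> set[str]:
    """Breadth-first traversal collecting every element reachable from `start`."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in links.get(node, []):
            if nxt not in seen:  # the seen-set also protects against cycles
                seen.add(nxt)
                queue.append(nxt)
    return seen


print(sorted(impacted_by("DEC-001 Kafka as default event backbone", TRACE)))
# ['Billing', 'CRM', 'Customer status interface', 'Event integration pattern', 'Release 2.1']
```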

Tagged values, stereotypes, and validation rules can reinforce governance if used selectively. They should focus on governance-critical metadata rather than creating administrative burden. Common examples include business criticality, data classification, hosting model, regulatory relevance, lifecycle state, and review status. Applied consistently, these attributes support reporting across the program and help identify patterns such as repeated use of non-standard technologies or solutions approaching implementation without a completed review.
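Governance-critical metadata can also be checked by querying the repository directly. The Python sketch below assumes a Sparx EA 16+ `.qea` repository, which is SQLite-based. It also assumes that elements live in `t_object` and tagged values live in `t_objectproperties`. Verify the table and column names against your own repository version before relying on this query. The stereotype `ApplicationComponent` and the tag `DataClassification` are hypothetical names. The demo runs against an in-memory stand-in that contains only the columns the query uses.

```python
import sqlite3

# Assumed schema subset: t_object(Object_ID, Name, Stereotype),
# t_objectproperties(Object_ID, Property, Value). Check against your EA version.
MISSING_TAG_SQL = """
SELECT o.Name
FROM t_object AS o
WHERE o.Stereotype = ?
  AND NOT EXISTS (
      SELECT 1 FROM t_objectproperties AS p
      WHERE p.Object_ID = o.Object_ID
        AND p.Property = ?
        AND COALESCE(p.Value, '') <> ''
  )
ORDER BY o.Name
"""


def elements_missing_tag(conn: sqlite3.Connection, stereotype: str, tag: str) -> list[str]:
    """List elements of a stereotype that lack a non-empty value for a governance tag."""
    return [row[0] for row in conn.execute(MISSING_TAG_SQL, (stereotype, tag))]


# In-memory stand-in for a repository, for demonstration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE t_object (Object_ID INTEGER PRIMARY KEY, Name TEXT, Stereotype TEXT);
CREATE TABLE t_objectproperties (Object_ID INTEGER, Property TEXT, Value TEXT);
INSERT INTO t_object VALUES (1, 'Claims Platform', 'ApplicationComponent'),
                            (2, 'Pricing Service', 'ApplicationComponent');
INSERT INTO t_objectproperties VALUES (1, 'DataClassification', 'Confidential');
""")
print(elements_missing_tag(conn, "ApplicationComponent", "DataClassification"))
# ['Pricing Service']
```

Against a real file, opening the connection read-only, for example `sqlite3.connect("file:repo.qea?mode=ro", uri=True)`, keeps the report from modifying the repository.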

The control model is completed by preserving evidence. Review outcomes, comments, approvals, and baseline snapshots should be stored so the program can reconstruct what was assessed at a particular point in time. In large transformations, that history matters when decisions are challenged, audits occur, or transition architectures need to be revisited.

Once roles, decision rights, and review stages are clear, Sparx EA can be configured to enforce them in a way that is practical rather than ceremonial.

4. Standardizing Architecture Content, Traceability, and Reuse

The governance controls described above depend on one further condition: architecture content must be consistent enough to compare, review, and reuse. In large programs, that consistency cannot be left to individual preference. Different teams may model the same concept in different ways, use incompatible document structures, or omit key relationships entirely. When that happens, governance becomes slower and more subjective because reviewers must first interpret the artifact before they can assess the design.

Standardization starts with a pragmatic metamodel. The repository should define a manageable set of element types, stereotypes, naming conventions, and mandatory relationships that reflect how the program actually makes decisions. The objective is not theoretical purity. It is shared interpretation. If one team models an application service, an interface, or a business capability, another team should be able to understand it immediately and use it in downstream analysis.

A practical metamodel often includes relationships such as:

- objectives to capabilities,

- capabilities to processes,

- processes to applications,

- applications to interfaces,

- applications to data,

- technologies to hosted components,

- and solutions to standards, decisions, and exceptions.

These are the decision-relevant paths that make governance and impact analysis useful. If they are reliable, review discussions become faster and less subjective.
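The listed paths can double as a validation rule. The Python sketch below treats them as the allowed set of (source type, target type) pairs and flags any link outside it. The type names are illustrative stand-ins for the program's own stereotypes, and a flagged link is better treated as a prompt for review than as an automatic rejection.

```python
# The decision-relevant paths from the list above, as (source type, target type) pairs.
ALLOWED_LINKS = {
    ("Objective", "Capability"),
    ("Capability", "Process"),
    ("Process", "Application"),
    ("Application", "Interface"),
    ("Application", "Data"),
    ("Technology", "Component"),
    ("Solution", "Standard"),
    ("Solution", "Decision"),
    ("Solution", "Exception"),
}


def invalid_links(links: list[tuple[str, str]],
                  element_types: dict[str, str]) -> list[tuple[str, str]]:
    """Return links whose (source type, target type) pair is not part of the metamodel."""
    return [
        (src, dst) for src, dst in links
        if (element_types[src], element_types[dst]) not in ALLOWED_LINKS
    ]


# Hypothetical elements and links from one team's model.
types = {"Digital Onboarding": "Capability", "KYC Check": "Process", "CRM": "Application"}
links = [("Digital Onboarding", "KYC Check"), ("Digital Onboarding", "CRM")]
print(invalid_links(links, types))  # [('Digital Onboarding', 'CRM')]
```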

Template-based content is the next enabler. Sparx EA can provide standard package structures for solution architectures, transition-state views, integration designs, and domain assessments. A solution package, for example, might always contain:

- scope and context,

- impacted capabilities,

- application components,

- interfaces,

- major data objects or classifications,

- technology choices,

- key risks,

- decisions,

- and exceptions.

That improves review quality because governance forums are comparing like with like. It also saves architects from spending time deciding how to structure each solution from scratch.
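A template also makes review readiness checkable. The Python sketch below compares element counts per section of a solution package against the template sections listed above. The section names and counts are hypothetical. An empty Exceptions section may legitimately mean "none requested," so a real check might accept an explicit "none" marker for that section.

```python
# Sections from the solution package template described above (names are illustrative).
SOLUTION_TEMPLATE = [
    "Scope and Context", "Impacted Capabilities", "Application Components",
    "Interfaces", "Data Objects", "Technology Choices", "Key Risks",
    "Decisions", "Exceptions",
]


def template_gaps(package_sections: dict[str, int]) -> list[str]:
    """Return template sections that are absent or empty (zero elements) in a package."""
    return [s for s in SOLUTION_TEMPLATE if package_sections.get(s, 0) == 0]


# Hypothetical element counts per sub-package for one solution.
pricing_solution = {
    "Scope and Context": 3, "Impacted Capabilities": 4, "Application Components": 6,
    "Interfaces": 5, "Data Objects": 2, "Technology Choices": 3, "Key Risks": 0,
    "Decisions": 2,
}
print(template_gaps(pricing_solution))  # ['Key Risks', 'Exceptions']
```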

Once content is standardized, traceability becomes genuinely valuable. Stakeholders can follow the chain from strategic intent to implementation consequence. They can see which business outcomes depend on a platform upgrade, which applications are affected by a change in data ownership, or which roadmap items rely on a technology that is being phased out. For example, if a program decides to centralize product pricing into a shared service, the repository should make it possible to trace that decision to impacted sales processes, consuming applications, integration changes, and release dependencies. Without that traceability, governance discussions tend to revert to opinion.

The key is not to pursue exhaustive linkage. Repositories often fail when teams try to connect everything to everything else. Governance does not need universal traceability; it needs decision-relevant traceability. For most transformation programs, the paths listed above are sufficient to support planning, impact assessment, and conformance review. The discipline lies in maintaining the critical links consistently.

Reuse is the third benefit of standardization. Large programs encounter the same design problems repeatedly: integrating with shared platforms, onboarding users, exposing APIs, managing reference data, applying audit controls, or handling identity and access. Without reusable architecture assets, each team solves the same problem independently and introduces unnecessary variation. Sparx EA can store approved patterns, reference architectures, canonical information models, standard technology stacks, and common business objects as governed assets.

A realistic example is event integration. If the enterprise has approved a Kafka-based event pattern for publishing customer status changes, that pattern can be reused across billing, CRM, and service operations rather than redesigned by each team. Another example is API security: an approved pattern for OAuth2 token validation through a central API gateway can be applied consistently across digital channels instead of being reinterpreted in every product squad.

Reuse improves governance and delivery speed at the same time. Teams start from structures that have already been reviewed and approved rather than inventing new ones for each initiative. Reviewers, in turn, assess familiar patterns instead of entirely novel designs. Over time, the repository becomes more than a record of architecture. It becomes an asset base that actively shapes future solutions.

This is where standardization pays for itself. It reduces interpretive effort, improves comparability, and raises the quality of both governance and delivery.

5. Integrating Governance with Delivery, Change, and Portfolio Management

Even a well-structured repository has limited value if governance sits beside delivery rather than inside it. Architecture governance creates impact only when it influences funding, planning, design, implementation, and change decisions at the right moments. Otherwise, reviews happen too late and the repository becomes retrospective instead of operational.

At portfolio level, governance should shape investment decisions before initiatives are fully committed. Proposed initiatives can be linked in Sparx EA to capabilities, target-state components, transition architectures, and known constraints. That allows portfolio leaders to ask whether an initiative advances the target architecture, duplicates an existing solution, creates avoidable technical debt, or depends on unresolved platform decisions. In that role, the repository supports prioritization rather than merely documenting decisions after the fact.

Delivery integration is equally important. Architecture approval should not be treated as a one-time event. It should occur at key points such as concept shaping, solution outline, detailed design, pre-build assurance, and pre-release review. Sparx EA supports this by allowing solution packages to mature over time while carrying forward linked decisions, standards, exceptions, and review history.

That gives delivery teams a practical way to demonstrate architecture readiness without rebuilding the same evidence in separate documents for every checkpoint. It also helps architects and delivery managers stay clear on their different responsibilities. Delivery managers need to know whether an architecture issue is blocking, advisory, or accepted as managed risk. Architects need visibility into whether conditions of approval have been implemented, deferred, or superseded. In Sparx EA, those relationships can be represented through links between architecture decisions, work packages, releases, and milestones.

Change management is another critical integration point. Transformation programs constantly absorb scope changes, vendor issues, regulatory updates, and operational findings. If change control is disconnected from architecture governance, teams may approve changes that seem reasonable locally but conflict with transition-state dependencies or target-state direction. By using Sparx EA to assess impacts against the governed model, change boards can see whether a proposed change affects shared interfaces, data ownership, security patterns, standards compliance, or roadmap sequencing.

A practical example is vendor substitution. Suppose an infrastructure workstream proposes replacing the approved managed integration platform with a niche tool because of a short-term procurement delay. Without architecture visibility, that may appear to be a harmless schedule recovery action. With repository-based governance, the board can see the wider implications: additional support skills, divergence from integration standards, changes to monitoring patterns, and future migration cost.

Portfolio reporting should also include architectural health, not just scope, cost, and schedule. Because Sparx EA stores standards links, review states, exceptions, and dependencies, it can provide indicators such as:

- unresolved exceptions by domain,

- concentration of non-standard technologies,

- major dependency exposure,

- pending decisions affecting critical releases,

- and solution areas with weak traceability.

These are leading indicators of delivery risk and future operating cost, not technical curiosities. A release train with multiple open architecture exceptions in identity, data retention, and resilience deserves executive attention even if delivery status is still reported as green.
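The indicators listed above can be derived from a simple extract of open governance items. The Python sketch below counts unresolved exceptions by domain and pending decisions by release. It also flags any release whose open exceptions span several domains, regardless of reported delivery status. The item shape, the release names, and the threshold of two domains are all hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class OpenItem:
    """An unresolved governance item extracted from the repository (hypothetical shape)."""
    kind: str     # "exception" or "pending_decision"
    domain: str   # architecture domain the item belongs to
    release: str  # release the item is linked to


# Hypothetical extract for one release train; delivery status is deliberately not an input.
items = [
    OpenItem("exception", "identity", "R3"),
    OpenItem("exception", "data retention", "R3"),
    OpenItem("exception", "resilience", "R3"),
    OpenItem("exception", "identity", "R4"),
    OpenItem("pending_decision", "integration", "R4"),
]

exceptions_by_domain = Counter(i.domain for i in items if i.kind == "exception")
pending_by_release = Counter(i.release for i in items if i.kind == "pending_decision")


def flag_releases(items: list[OpenItem], threshold: int = 2) -> dict[str, list[str]]:
    """Flag releases whose open exceptions span at least `threshold` distinct domains."""
    domains_by_release = defaultdict(set)
    for item in items:
        if item.kind == "exception":
            domains_by_release[item.release].add(item.domain)
    return {r: sorted(d) for r, d in domains_by_release.items() if len(d) >= threshold}


print(exceptions_by_domain.most_common(1))  # [('identity', 2)]
print(flag_releases(items))  # {'R3': ['data retention', 'identity', 'resilience']}
```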

Governance becomes a management discipline when it is connected to portfolio, delivery, and change processes. That is the point at which architecture stops being a parallel assurance function and starts influencing how the program is actually run.

6. Measuring Governance Effectiveness and Driving Continuous Improvement

Governance should not be measured by activity alone. The number of reviews held, diagrams created, or packages approved may indicate effort, but it says little about whether governance is improving decisions or reducing risk. To remain credible, governance needs measures that focus on outcomes.

A practical measurement framework should cover four dimensions:

- Decision quality: Are decisions made with sufficient evidence, at the right time, and with clear accountability?

- Conformance: Are approved standards, patterns, and target-state principles reflected in solution design and implementation?

- Delivery enablement: Is governance helping teams move faster through reuse, earlier issue identification, and clearer decision paths?

- Risk control: Are exceptions, technical debt, and cross-domain dependencies being surfaced early enough to influence planning?

These dimensions can be translated into measurable indicators using the repository structures established in earlier sections. Typical metrics include:

- review turnaround time,

- percentage of solutions reaching implementation with approved architecture status,

- number of active exceptions by domain,

- age of exceptions,

- percentage of exceptions with sunset dates,

- reuse rate of approved patterns,

- traceability completeness for critical initiatives,

- recurring non-conformance themes,

- and frequency of late-stage design changes caused by unresolved architecture issues.

Those metrics need careful interpretation. A low number of exceptions may indicate strong conformance, but it may also suggest that teams are bypassing governance. Fast review times may look positive, but not if approvals are superficial. Governance metrics therefore need to be balanced and discussed in context.
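Several of the listed metrics are simple date arithmetic over repository extracts. The Python sketch below computes median review turnaround, the percentage of exceptions with a sunset date, and the age of the oldest exception. The input tuples and dates are hypothetical. The function returns raw figures only, and those figures still need the contextual reading described above.

```python
from datetime import date
from statistics import median

# Hypothetical extracts: (submitted, decided) per review; (approved, sunset) per exception.
reviews = [
    (date(2024, 3, 1), date(2024, 3, 6)),
    (date(2024, 3, 4), date(2024, 3, 18)),
    (date(2024, 3, 11), date(2024, 3, 14)),
]
exceptions = [
    (date(2024, 1, 10), date(2024, 6, 30)),
    (date(2023, 11, 2), None),
    (date(2024, 2, 20), date(2024, 8, 31)),
]


def governance_metrics(reviews, exceptions, today: date) -> dict:
    """Compute a few raw governance indicators; empty inputs yield None."""
    turnaround = [(decided - submitted).days for submitted, decided in reviews]
    with_sunset = sum(1 for _, sunset in exceptions if sunset is not None)
    ages = [(today - approved).days for approved, _ in exceptions]
    return {
        "median_review_days": median(turnaround) if turnaround else None,
        "pct_exceptions_with_sunset": (
            round(100 * with_sunset / len(exceptions), 1) if exceptions else None
        ),
        "oldest_exception_days": max(ages) if ages else None,
    }


print(governance_metrics(reviews, exceptions, today=date(2024, 4, 1)))
```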

Sparx EA can serve as the primary evidence source because decisions, standards links, review states, and exceptions are recorded directly in the model. That supports consistent reporting across workstreams and helps architecture leaders identify trends such as persistent use of non-standard technology, weak traceability in specific domains, or repeated delays in closing review conditions. Technology lifecycle reporting, for example, may show that projects continue to introduce components on a platform already marked “contain” or “retire.” That is a clear signal that standards enforcement or migration planning needs attention.

Continuous improvement should be built into the governance cadence. Periodic retrospectives should examine:

- where reviews are adding value,

- where evidence requirements are excessive,

- which standards are frequently challenged,

- which exception types recur,

- and which parts of the repository are underused or misunderstood.

Some improvements will be procedural, such as simplifying a review gate or clarifying decision rights. Others will be model-related, such as refining the metamodel, adjusting mandatory attributes, or expanding reusable reference content. The objective is not to make governance more elaborate. It is to keep governance aligned with the way the program actually delivers change.

That returns the discussion to the central idea introduced at the start: Sparx EA is most valuable when it supports a living governance system. Measurement and improvement keep that system useful rather than allowing it to harden into static bureaucracy.

Conclusion

Implementing architecture governance with Sparx EA in a large transformation program is ultimately about preserving architectural intent in the face of delivery pressure, organizational complexity, and constant change. The tool does not create governance maturity by itself. What matters is the combination of clear decision structures, disciplined repository design, standardized content, and integration with delivery and portfolio processes.

The strongest governance models share a few characteristics. They make decision rights explicit. They treat the repository as the system of record for standards, decisions, exceptions, and review evidence. They focus traceability on the relationships that matter for planning and impact analysis. They enable reuse so teams can move faster with less variation. And they measure success by decision quality, risk reduction, and delivery enablement rather than by documentation volume.

In practice, the most effective governance model is rarely the most elaborate. It is the one that makes the critical decisions explicit, traceable, and reviewable at the right time. When Sparx EA is overloaded with low-value detail, governance becomes slower and less credible. When it is structured around real delivery decisions, it becomes a source of both control and speed.

Successful architecture governance is not measured by compliance alone. It is measured by whether the program can change direction without losing coherence, absorb delivery variation without accumulating unmanaged complexity, and leave behind an enterprise landscape that is strategically aligned, operationally sustainable, and ready for further change.

Frequently Asked Questions

What is architecture governance in enterprise architecture?

Architecture governance is the set of practices, processes, and standards that ensure architectural decisions are consistent, traceable, and aligned with strategy. It typically includes an Architecture Review Board, modeling standards, lifecycle management, compliance checking, and exception handling.

How does Sparx EA support architecture governance?

Sparx EA supports governance through package-level security, model validation rules, tagged value lifecycle tracking, baseline management, and report generation. Architecture decisions, compliance status, and review outcomes can all be tracked as model elements with defined owners and statuses.

What are the key elements of an effective EA governance checklist?

An effective EA governance checklist covers: principles alignment, standards compliance, integration impact assessment, security and data classification review, requirements traceability, roadmap alignment, and operational readiness. Each gate should produce model-based evidence, not just a presentation.