How We Built a Capability Map for a Digital Transformation Program

Learn how we built a business capability map to support a digital transformation program, align strategy with execution, identify gaps, and prioritize technology and operating model change.

Introduction

Many digital transformation programs start too low in the stack. Teams move quickly into platform choices, delivery mobilization, or solution design before agreeing on the business abilities the enterprise actually needs. Our program was no exception. Business units used different language for similar needs. Local issues were presented as enterprise priorities. Discussions slipped into system ownership, funding boundaries, and organizational politics. Before making sound architecture and investment decisions, we needed a more stable frame of reference.

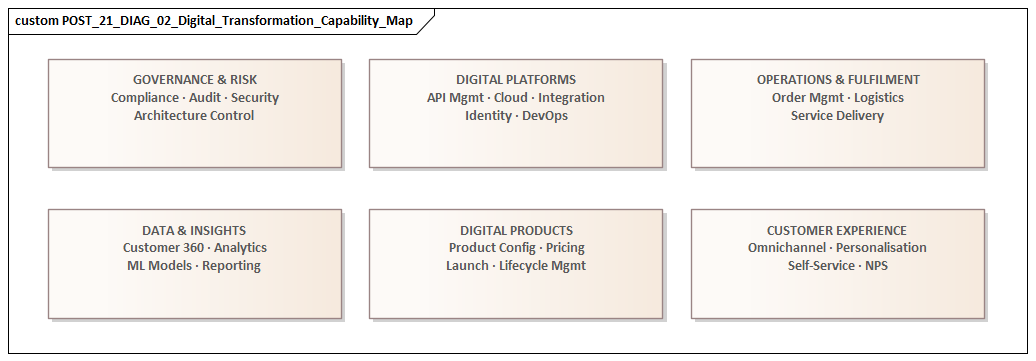

We chose to build a capability map. A capability map describes the enduring business abilities an enterprise needs, independent of current structures, process variants, or application portfolios. It is not an org chart, so it does not show reporting lines or accountability models. It is not a process model, so it does not describe workflow sequence. Its purpose is simpler and more durable: define what the business must be able to do to execute strategy.

That distinction mattered because the transformation crossed multiple business units, legacy platforms, and competing delivery agendas. We did not want an abstract enterprise architecture artifact that looked good in a slide deck and then disappeared. The map had to work as a practical tool for executive alignment, portfolio prioritization, and target-state design. It needed to give business and technology leaders a shared language for discussing strategic needs, capability health, and investment trade-offs.

It also helped us diagnose problems more accurately. A request framed as a project or system issue often turned out to be a symptom of something broader: weak data stewardship, fragmented governance, inconsistent decisioning, or missing shared enablement. In one case, several teams argued for separate workflow products to improve case handling. Once we looked through a capability lens, the issue was not workflow tooling at all. The enterprise lacked a coherent case management capability, with common data, routing rules, and ownership boundaries.

That business-first lens improved decision-making. Instead of prioritizing initiatives by sponsor influence or system age, we could ask more useful questions: Which capabilities matter most to strategic outcomes? How well do they perform today? Where would investment create enterprise-wide leverage? In several instances, the map showed that isolated automation or replacement proposals would not solve the underlying constraint. The real issue sat in fragmented data, inconsistent accountability, or poor cross-functional coordination.

From an architecture standpoint, the capability map became the bridge between strategy and execution. Once capabilities were defined, we could link them to processes, information, applications, integrations, and technology risks. That gave us a more disciplined way to rationalize the application estate, identify shared dependencies, and sequence transformation work realistically. It also made architecture decisions easier to explain in business terms. Rejecting a duplicate tool is a different conversation when you can show that the priority gap is in a shared business capability, not in product selection.

The sections that follow explain how we built the map, kept it usable, and applied it to prioritization, roadmaps, and governance. The central lesson is straightforward: capability mapping works when it is used as a decision framework, not as documentation for its own sake.

Why Capability Mapping Was the Right Starting Point

Capability mapping gave the program something many transformations lack at the outset: a stable anchor. Most other planning lenses in a large enterprise are volatile. Organizations are restructured. Leadership priorities move. Processes are redesigned. Applications are upgraded or retired. If transformation is anchored too early in those moving parts, architecture tends to mirror current constraints rather than future business intent.

Capabilities are more durable. They describe what the enterprise must be able to do regardless of who performs the work, which process variant is followed, or what systems happen to support it today. That durability was particularly useful in a program spanning several business units, each with its own terminology, operating model, and technology estate. Without a common model, stakeholders often believed they were discussing the same issue when in fact they were working at different levels of abstraction or describing different scopes.

The capability map gave us a normalized vocabulary. It separated enterprise needs from local implementation preferences and exposed where apparently different initiatives were actually addressing the same business ability. That reduced ambiguity and improved planning across domains. Just as importantly, it prevented the program from becoming application-centric too early.

In many transformations, the first serious conversations focus on platforms, integration patterns, or replacement candidates. Those questions matter, but they are downstream. The capability lens establishes business intent first. We used that sequence deliberately: define the required business abilities, assess their strategic importance and current health, and then examine process, data, application, and technology implications.

That shift improved investment logic. Instead of asking which systems were oldest or which projects had the strongest sponsorship, we asked which capabilities were most critical to strategic outcomes and where constraints were limiting enterprise performance. Some highly visible systems turned out to support non-differentiating capabilities and did not justify immediate transformation. At the same time, several less visible areas emerged as foundational because weaknesses there affected multiple value streams. Identity and access management was a good example. A set of customer portal modernization requests initially looked like separate channel initiatives. The capability assessment showed that inconsistent authentication and authorization models were the real barrier to a unified customer experience.

Capability mapping also let us break down the enterprise without losing coherence. Executives needed a concise view of the business landscape. Delivery teams needed enough detail to identify meaningful change. A tiered model supported both. We kept a compact set of level-one and level-two capabilities for strategic discussion, then decomposed only the priority areas that required deeper analysis.

Finally, the map became the anchor for connecting architecture domains. Once capabilities were defined, we could map processes to them, identify the information they required, inventory the applications that enabled them, and expose technology dependencies and risks. Had we started from a technical estate inventory, we would have spent months inferring business relevance afterward. Starting from capabilities gave us a business-first structure for rationalization and target-state design.

In short, capability mapping was the right starting point because it gave strategy, architecture, and execution a common frame before delivery mechanics took over. Scope definition, current-state assessment, prioritization, and roadmap sequencing all depended on that foundation.

Defining Scope, Objectives, and Transformation Outcomes

Once we agreed that the capability map would anchor the program, we had to decide what it needed to cover and what decisions it was expected to support. That sounds administrative, but it is often where capability efforts go wrong. Some become so broad that they lose operational value. Others are so narrow that they cannot influence enterprise change. We wanted a middle path.

We defined scope across three dimensions: enterprise breadth, planning horizon, and depth of decomposition.

Enterprise breadth

The first question was coverage. Which parts of the business belonged in the map? Because the transformation crossed several domains, we included all major business areas contributing to the target customer and operational outcomes, even where some units were not part of the first delivery wave. That was necessary if we wanted to avoid reproducing existing silos. At the same time, we excluded highly local or specialized functions unless they had a material dependency on enterprise change. Otherwise, workshops would have been consumed by edge cases with little strategic relevance.

Planning horizon

The map was intended to support a multi-year transformation, not just a current-state review. It therefore had to reflect not only what the organization does today, but what it must be able to do in the future to execute strategy. That changed the tone of the conversation. Instead of preserving current operating assumptions, we asked what capabilities would be required if the enterprise wanted faster policy changes, more proactive customer engagement, greater automation, or stronger regulatory responsiveness.

Depth of decomposition

The third decision concerned detail. Too little detail would make assessment superficial. Too much would turn the exercise into process analysis under another name. We used a simple rule: decompose only until a capability is specific enough to assess ownership, pain points, enablement, and investment options. That gave us enough granularity to identify meaningful transformation candidates without creating an unwieldy taxonomy.

With scope defined, we made the objectives explicit. The map needed to support:

- prioritization of transformation investment

- identification of duplicated or fragmented capabilities

- exposure of weak enabling foundations such as data, governance, integration, and security

- target-state architecture design

- communication across executives, architects, and portfolio teams

Making those objectives explicit prevented the map from becoming a polished but ambiguous artifact.

We then translated broad transformation ambition into concrete outcome categories. Rather than relying on phrases such as “be more digital,” we defined outcomes like:

- reduced cycle time

- improved straight-through processing

- stronger data accountability

- better cross-channel consistency

- increased adaptability for regulatory or product change

- improved resilience and control

Those outcomes later became the basis for judging capability importance. They also made it easier to connect business ambition to architecture choices. A capability associated with faster decision-making, for example, might depend as much on data quality, business rules governance, and event-driven integration as on front-end application change.

A practical example came from operations visibility. One domain wanted near-real-time status tracking across fulfillment and service teams. The initial assumption was that another reporting layer would solve the issue. The capability discussion showed otherwise. The real requirement was timely event propagation across operational systems. That led to an event architecture using Kafka to publish business events, rather than another batch-oriented dashboard solution.
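The difference between batch reporting and event propagation can be sketched in a few lines. This is a minimal in-memory illustration, not Kafka's client API: `EventBus` stands in for a broker topic, and `BusinessEvent`, `StatusView`, and the topic name `fulfillment.status` are hypothetical. The point is that every subscribing team sees a status change when it is published, rather than after the next batch run.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class BusinessEvent:
    """Hypothetical business event, e.g. a fulfillment status change."""
    entity_id: str
    status: str
    occurred_at: datetime


class EventBus:
    """In-memory stand-in for a broker topic (not a Kafka client)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[BusinessEvent], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[BusinessEvent], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: BusinessEvent) -> None:
        # Each subscriber is notified as soon as the event is published.
        for handler in self._subscribers[topic]:
            handler(event)


class StatusView:
    """Consumer that keeps the latest known status per entity for one team."""

    def __init__(self) -> None:
        self.latest: dict[str, str] = {}

    def on_event(self, event: BusinessEvent) -> None:
        self.latest[event.entity_id] = event.status


bus = EventBus()
fulfillment_view, service_view = StatusView(), StatusView()
bus.subscribe("fulfillment.status", fulfillment_view.on_event)
bus.subscribe("fulfillment.status", service_view.on_event)

bus.publish("fulfillment.status",
            BusinessEvent("order-123", "dispatched", datetime.now(timezone.utc)))
print(service_view.latest)  # {'order-123': 'dispatched'}
```

A real broker also persists events and lets consumers read at their own pace. The in-memory bus only shows why both teams see the change as it happens instead of waiting for a scheduled refresh.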

By the end of this stage, we had a bounded scope, a clear set of objectives, and a shared understanding of the outcomes the capability map was meant to support. That clarity made the next stages faster and more credible.

Establishing the Capability Model and Level of Detail

With scope and objectives in place, the next step was to define the structure of the capability model itself. This is where the exercise moved from idea to method. The challenge was not simply listing capabilities, but organizing them in a way that would remain stable, comparable across domains, and useful for later analysis.

We started with a small set of modeling principles.

First, capabilities had to be expressed as business abilities, not departments, processes, systems, or project labels. If a proposed capability sounded like a team name, a workflow step, or a technology function, we treated that as a sign that the definition needed revision.

Second, capability names had to be understandable across business units. Since one of the main goals was to create a normalized vocabulary, we avoided local language wherever possible.

Third, the model had to remain valid if ownership changed or enabling platforms were replaced. That discipline kept the map from becoming trapped in current-state structures.

The tiered model

To keep the model usable, we organized capabilities into a tiered hierarchy:

- Level 1 represented major business domains

- Level 2 described distinct business abilities that could be assessed meaningfully

- Level 3 was used selectively in priority areas where additional detail would support investment decisions or architectural design

This gave executives a concise view while still giving architects and delivery teams enough depth to work with. Decomposition was driven by decision need, not by a desire for theoretical completeness. If a lower-level capability could not be tied to distinct pain points, ownership concerns, or architectural implications, it usually did not belong in the model.
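The tiering and the decomposition rule can be expressed as a small data structure. This is a sketch under assumptions: `Capability`, `decision_drivers`, and the example names are hypothetical, and the review simply flags Level 3 nodes that have no distinct pain point, ownership concern, or architectural implication recorded against them.

```python
from dataclasses import dataclass, field

MAX_LEVEL = 3  # Level 1 = domain, Level 2 = assessable ability, Level 3 = selective detail


@dataclass
class Capability:
    """One node in a hypothetical tiered capability hierarchy."""
    name: str
    level: int
    # Pain points, ownership concerns, or architectural implications
    # that justify modeling this node separately.
    decision_drivers: list[str] = field(default_factory=list)
    children: list["Capability"] = field(default_factory=list)

    def add(self, child: "Capability") -> "Capability":
        # Children must sit exactly one level below their parent, and no deeper than Level 3.
        if child.level != self.level + 1 or child.level > MAX_LEVEL:
            raise ValueError(f"{child.name}: level {child.level} cannot sit under level {self.level}")
        self.children.append(child)
        return child


def review(node: Capability) -> list[str]:
    """Flag Level 3 nodes with no decision driver as candidates to merge upward."""
    findings = []
    if node.level == MAX_LEVEL and not node.decision_drivers:
        findings.append(f"{node.name}: no distinct decision driver; consider merging upward")
    for child in node.children:
        findings.extend(review(child))
    return findings


customer = Capability("Customer", level=1)
onboarding = customer.add(Capability("Customer Onboarding", level=2))
onboarding.add(Capability("Identity Verification", level=3,
                          decision_drivers=["manual verification rework"]))
onboarding.add(Capability("Welcome Communications", level=3))

print(review(customer))
# ['Welcome Communications: no distinct decision driver; consider merging upward']
```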

Writing clear capability definitions

A practical lesson emerged early: names alone were not enough. Each capability needed a short statement describing its purpose and boundaries. We documented:

- what the capability includes

- what it excludes

- how it differs from adjacent capabilities

That reduced ambiguity in later workshops. Without those definitions, stakeholders interpreted the same term in different ways and assessment quality deteriorated quickly.

For example, “Customer Management” was initially used to cover everything from onboarding to servicing to complaint handling. That was too broad to assess meaningfully. We split it into more discrete capabilities, including customer onboarding, customer profile management, and customer service resolution. Once those boundaries were clear, pain points and investment needs became much easier to isolate.
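Boundary statements become easier to police when includes and excludes are recorded explicitly. The sketch below assumes a hypothetical `CapabilityDefinition` record and illustrative activity names for the three capabilities split out of “Customer Management”. It reports any activity claimed by more than one capability, which is exactly the kind of ambiguity the definitions were meant to remove.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class CapabilityDefinition:
    """Hypothetical definition record: purpose plus explicit boundaries."""
    name: str
    purpose: str
    includes: frozenset[str]
    excludes: frozenset[str]


def boundary_overlaps(defs: list[CapabilityDefinition]) -> list[tuple[str, str, set[str]]]:
    """Return pairs of capabilities whose 'includes' sets claim the same activity."""
    overlaps = []
    for a, b in combinations(defs, 2):
        shared = set(a.includes & b.includes)
        if shared:
            overlaps.append((a.name, b.name, shared))
    return overlaps


definitions = [
    CapabilityDefinition(
        "Customer Onboarding", "Establish a new customer relationship",
        includes=frozenset({"application intake", "identity checks"}),
        excludes=frozenset({"profile updates"}),
    ),
    CapabilityDefinition(
        "Customer Profile Management", "Maintain accurate customer data",
        includes=frozenset({"profile updates", "contact preferences"}),
        excludes=frozenset({"application intake"}),
    ),
    CapabilityDefinition(
        "Customer Service Resolution", "Resolve customer requests and complaints",
        includes=frozenset({"complaint handling", "contact preferences"}),
        excludes=frozenset({"identity checks"}),
    ),
]

print(boundary_overlaps(definitions))
# [('Customer Profile Management', 'Customer Service Resolution', {'contact preferences'})]
```

Here the shared claim on contact preferences would prompt a decision about which capability owns it, before any assessment scores are collected.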

Designing for cross-domain traceability

The tiered model also created a stable anchor for cross-domain mapping. Once level-two capabilities were settled, we could associate them with process groups, information concepts, application services, and technology platforms without confusing business intent with implementation.

That enabled several useful forms of analysis:

- where multiple applications supported the same capability

- where a capability depended on fragmented data sources

- where technical debt in a shared platform created risk across several business areas

- where target-state design required governance or ownership changes as well as system change

At that point, the capability map became more than a taxonomy. It turned into the reference key linking architecture inventories that would otherwise remain disconnected.
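Once capabilities are the shared key, several of these analyses reduce to simple set operations over the inventories. The sketch below uses hypothetical application, platform, and capability names. It finds capabilities supported by more than one application, capabilities with no support at all, and the set of capabilities that technical debt in one shared platform would affect. The same inversion would work over information objects to spot fragmented data sources.

```python
from collections import defaultdict

# Hypothetical inventory: capabilities each application supports,
# and the platform each application depends on.
SUPPORTS = {
    "CRM-A": {"Customer Profile Management"},
    "CRM-B": {"Customer Profile Management", "Customer Service Resolution"},
    "CaseTool": {"Customer Service Resolution"},
    "Billing": {"Invoicing"},
}
PLATFORM = {
    "CRM-A": "Legacy Middleware",
    "CRM-B": "Cloud Platform",
    "CaseTool": "Legacy Middleware",
    "Billing": "Legacy Middleware",
}
CAPABILITIES = {"Customer Onboarding", "Customer Profile Management",
                "Customer Service Resolution", "Invoicing"}


def trace(capabilities: set[str], supports: dict[str, set[str]], platform: dict[str, str]):
    """Return (redundant support, unsupported capabilities, platform exposure)."""
    apps_for: dict[str, set[str]] = defaultdict(set)
    exposure: dict[str, set[str]] = defaultdict(set)
    for app, caps in supports.items():
        for cap in caps:
            apps_for[cap].add(app)
        # Every capability an application supports inherits its platform risk.
        exposure[platform[app]] |= caps
    redundant = {cap: apps for cap, apps in apps_for.items() if len(apps) > 1}
    gaps = capabilities - set(apps_for)
    return redundant, gaps, dict(exposure)


redundant, gaps, exposure = trace(CAPABILITIES, SUPPORTS, PLATFORM)
print(sorted(redundant))                      # capabilities with more than one application
print(sorted(gaps))                           # capabilities with no supporting application
print(sorted(exposure["Legacy Middleware"]))  # capabilities exposed to one shared platform
```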

Iterative validation

We did not try to perfect the model in isolation. Early drafts were tested with business leaders, architects, and delivery stakeholders. We asked three practical questions:

- Does the hierarchy make sense?

- Are the names intuitive?

- Can the model support real transformation decisions?

That validation exposed both gaps and over-modeling. In some cases, we merged capabilities that had been separated artificially. In others, we split broad categories that concealed materially different investment needs. The goal was not conceptual purity. It was a model detailed enough to guide architecture and portfolio decisions while remaining understandable and maintainable throughout the program.

By the end of this stage, we had a capability model that could support the next step: grounding the map in current-state evidence rather than abstract design.

Engaging Stakeholders and Capturing the Current State

Once the capability structure was in place, the work shifted from modeling to evidence gathering. A capability map can look coherent on paper and still fail if it does not reflect how the enterprise actually performs, where constraints sit, and how different stakeholders experience the same capability. Capturing the current state therefore required a deliberate engagement approach.

Choosing the right stakeholder mix

We identified stakeholder groups based on the perspective each could contribute:

- Business executives clarified strategic importance and enterprise pain points

- Operational managers described how capabilities performed in practice, including workarounds, control issues, and handoff failures

- Product and delivery leaders highlighted change demand, backlog pressure, and dependency hotspots

- Enterprise and domain architects connected business concerns to applications, data flows, integration patterns, and technology risk

No single group had a complete picture. In several areas, what leaders described as process inefficiency turned out to be a symptom of fragmented information ownership or brittle integration design.

Using structured prompts

Rather than asking stakeholders whether a capability was “good” or “bad,” we used a consistent set of prompts to gather evidence. For each priority capability, we explored questions such as:

- What outcomes does this capability need to support?

- Where are the main performance constraints?

- How fragmented is ownership?

- How many systems are involved?

- How much manual intervention is required?

- How reliable is the underlying data?

- How difficult is it to change policy, rules, or workflow?

These prompts moved the discussion beyond opinion and helped reveal whether issues were primarily organizational, informational, or technical.
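One way to turn those prompts into a comparable signal is to tag each with the kind of issue it tends to reveal and total the severity scores. Everything here is an assumption for illustration: the category tags, the 1 to 5 severity scale, and the example responses. The open-ended prompts about outcomes and constraints are left unscored because they frame the discussion rather than measure it.

```python
from collections import Counter

# Hypothetical tagging of scored prompts by the kind of issue they tend to surface.
PROMPT_CATEGORY = {
    "How fragmented is ownership?": "organizational",
    "How much manual intervention is required?": "organizational",
    "How reliable is the underlying data?": "informational",
    "How many systems are involved?": "technical",
    "How difficult is it to change policy, rules, or workflow?": "technical",
}


def issue_profile(responses: dict[str, int]) -> Counter:
    """Total severity (1 = minor, 5 = severe) per issue category."""
    totals: Counter = Counter()
    for prompt, severity in responses.items():
        if not 1 <= severity <= 5:
            raise ValueError(f"severity out of range for {prompt!r}: {severity}")
        totals[PROMPT_CATEGORY[prompt]] += severity
    return totals


case_handling = {
    "How fragmented is ownership?": 2,
    "How much manual intervention is required?": 2,
    "How reliable is the underlying data?": 5,
    "How many systems are involved?": 2,
    "How difficult is it to change policy, rules, or workflow?": 1,
}
profile = issue_profile(case_handling)
print(profile.most_common(1))  # [('informational', 5)]
```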

Separating workshop types

One practical lesson was to separate stakeholder sessions by purpose. Large mixed workshops were useful for building shared understanding and exposing cross-functional dependencies, but they were less effective for detailed current-state assessment. In large forums, people generalize, and unresolved disagreements can consume the agenda.

We therefore used a combination of:

- broad alignment workshops

- smaller domain-focused sessions

- targeted interviews

The smaller sessions produced better evidence on local pain points, variation, and enabling-system complexity. The larger sessions helped validate where those findings had enterprise significance.

Linking capabilities to architectural conditions

The map only became useful when it connected business concerns to architecture. During current-state capture, we documented for each priority capability:

- key associated processes

- critical information objects

- main supporting applications

- integration touchpoints

- notable technology constraints

We were not trying to build exhaustive inventories. The aim was to identify where capability weakness correlated with architectural conditions such as duplicated platforms, inconsistent master data, point-to-point interfaces, excessive customization, or dependence on aging infrastructure.

One example came from service operations. Repeated delays were initially framed as an execution issue. The architecture view showed that more than twenty tightly coupled interfaces synchronized status updates overnight. The business wanted faster response and better visibility; the real blocker was an integration pattern built around batch synchronization rather than event publication.

Capturing variation across business units

One of the more revealing findings at this stage was not simply that a capability was weak, but that it was performed differently across the enterprise and produced materially different outcomes. In some cases, one business unit had already solved a problem that others still treated as structural. In others, local optimization improved a narrow metric while increasing enterprise complexity.

Capturing those differences helped us distinguish true capability gaps from inconsistent implementation patterns. That distinction mattered later. Not every weak capability required a new platform or a major transformation initiative. Some needed standardization, reuse, or wider adoption of practices that were already working elsewhere.
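The distinction between a true gap and inconsistent implementation can be captured as a simple rule over per-unit health ratings. The thresholds, the 1 to 5 scale, and the business unit names below are hypothetical. The logic is only that if no unit performs well the gap is structural, while a wide spread with at least one strong performer points to standardization or reuse.

```python
def classify_variation(health_by_unit: dict[str, float],
                       good: float = 3.5, spread: float = 1.5) -> str:
    """Classify a capability from per-business-unit health ratings (assumed 1-5 scale)."""
    if not health_by_unit:
        raise ValueError("need at least one rating")
    best, worst = max(health_by_unit.values()), min(health_by_unit.values())
    if best < good:
        return "capability gap"          # no unit performs well: structural weakness
    if best - worst >= spread:
        return "standardize or reuse"    # at least one unit has already solved it
    return "consistently healthy"


print(classify_variation({"Unit A": 4.2, "Unit B": 2.1, "Unit C": 2.4}))  # standardize or reuse
print(classify_variation({"Unit A": 2.0, "Unit B": 2.5}))                # capability gap
```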

Triangulating evidence

To keep the assessment credible, we treated the current state as triangulated evidence rather than workshop consensus. We compared stakeholder input with:

- operational metrics

- audit findings

- application landscape data

- incident trends

- delivery backlog themes

That discipline mattered because transformation programs attract strong narratives, and not all of them are equally well supported by facts.
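A lightweight way to apply that discipline is to derive a second rating from hard evidence and flag capabilities where it disagrees sharply with workshop consensus. The thresholds and fields below are hypothetical and cover only some of the sources listed; the aim is to direct follow-up questions, not to replace judgment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    capability: str
    workshop_rating: int        # stakeholder consensus, assumed 1 (weak) to 5 (strong)
    incidents_per_month: float  # from incident trends
    manual_touch_rate: float    # share of cases needing manual intervention, 0 to 1
    open_audit_findings: int    # from audit reports


def evidence_rating(e: Evidence) -> int:
    """Start at 5 and subtract one point per hypothetical warning threshold crossed."""
    penalties = sum([
        e.incidents_per_month > 10,
        e.manual_touch_rate > 0.3,
        e.open_audit_findings > 0,
    ])
    return 5 - penalties


def disputed(items: list[Evidence], tolerance: int = 2) -> list[str]:
    """Capabilities where workshop consensus and hard evidence diverge by at least `tolerance`."""
    return [e.capability for e in items
            if abs(e.workshop_rating - evidence_rating(e)) >= tolerance]


items = [
    Evidence("Customer Onboarding", workshop_rating=4, incidents_per_month=25,
             manual_touch_rate=0.5, open_audit_findings=2),
    Evidence("Invoicing", workshop_rating=4, incidents_per_month=2,
             manual_touch_rate=0.1, open_audit_findings=0),
]
print(disputed(items))  # ['Customer Onboarding']
```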

By the end of this stage, the capability map was no longer just a model of what the business needed to do. It had become a grounded view of how well those capabilities actually performed and where architecture would need to intervene to improve them.

Prioritizing Capabilities for Investment and Change

Once the current state was understood, the next question was which capabilities justified enterprise investment. In any transformation, demand exceeds capacity, and almost every domain can make a plausible case for change. The capability map gave us a way to compare unlike requests through a common business lens.

The prioritization factors

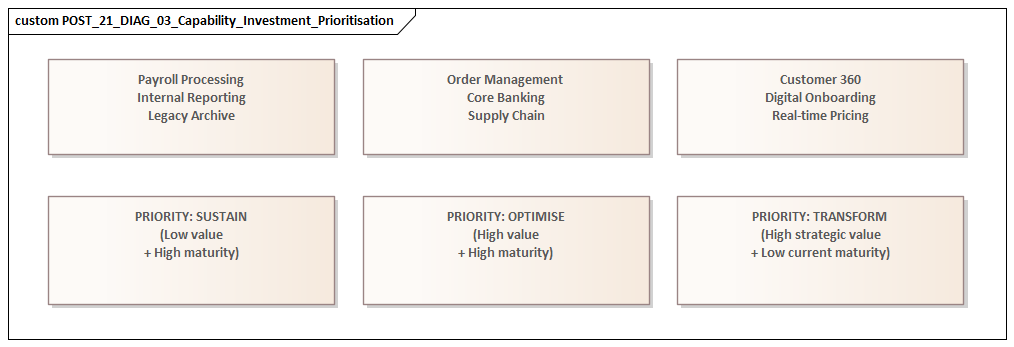

We avoided simple ranking based on stakeholder preference or visible pain alone. Instead, we evaluated capabilities across four factors:

- Business criticality: how strongly does the capability influence target outcomes such as growth, service quality, compliance, resilience, or cost efficiency?

- Capability health: how effectively does the enterprise currently perform in that area, taking into account fragmentation, manual effort, data reliability, and adaptability?

- Enterprise leverage: would improving the capability create benefits across multiple value streams, business units, or programs?

- Transformation readiness: is there enough ownership clarity, architectural understanding, and delivery capacity to act?

This gave us a more balanced view than maturity scoring alone. A low-maturity capability was not automatically a top priority if its strategic impact was limited. Conversely, a moderately performing capability could become a major investment candidate if it was foundational to several strategic outcomes and constrained broader change.
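The four factors can be combined into a single comparable score, provided the weights are treated as a negotiated assumption rather than a fact. In this sketch the weights, the 1 to 5 scales, and the example ratings are hypothetical. Health is inverted into a gap so that weaker capabilities score higher, and the example shows the effect described above: a weak but low-impact capability does not automatically rise to the top.

```python
from dataclasses import dataclass

# Hypothetical weights; in practice these would be agreed with portfolio stakeholders.
WEIGHTS = {"criticality": 0.35, "gap": 0.25, "leverage": 0.25, "readiness": 0.15}


@dataclass(frozen=True)
class Assessment:
    name: str
    criticality: int  # 1-5: influence on target outcomes
    health: int       # 1-5: current performance, 5 = strong
    leverage: int     # 1-5: breadth of benefit across value streams and units
    readiness: int    # 1-5: ownership clarity, understanding, delivery capacity


def priority_score(a: Assessment) -> float:
    gap = 6 - a.health  # invert health: a weak capability (health 1) has gap 5
    return (WEIGHTS["criticality"] * a.criticality
            + WEIGHTS["gap"] * gap
            + WEIGHTS["leverage"] * a.leverage
            + WEIGHTS["readiness"] * a.readiness)


candidates = [
    Assessment("Legacy Reporting", criticality=1, health=1, leverage=1, readiness=3),
    Assessment("Identity and Access Management", criticality=5, health=3, leverage=5, readiness=4),
    Assessment("Product Rules Management", criticality=4, health=2, leverage=4, readiness=2),
]
for a in sorted(candidates, key=priority_score, reverse=True):
    print(f"{priority_score(a):.2f}  {a.name}")
```

"Legacy Reporting" has the weakest health of the three yet ranks last, because its criticality and leverage are low.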

Looking beyond visible pain

Some of the most important capabilities were not the most visible. Data management, integration, decisioning, and workflow orchestration often lacked strong business sponsorship because they sat behind customer-facing outcomes. Yet weaknesses there repeatedly slowed or diluted benefits elsewhere.

The capability map helped make those dependencies visible. It gave us a way to show why certain enabling capabilities deserved investment even when they were not the loudest demands in the portfolio.

Grouping capabilities by investment pattern

We found it more useful to classify capabilities into investment patterns than to force a single ranked list. The main categories were:

- Differentiating capabilities requiring targeted innovation

- Foundational capabilities requiring standardization, simplification, or platform renewal

- Commodity or necessary capabilities requiring containment, efficiency, and risk reduction rather than expansion

This categorization improved portfolio conversations because it linked priority to the type of architectural response, not just to urgency.
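The grouping can be approximated with a rule of thumb that checks differentiation first and breadth of reuse second. The rule, the threshold of three value streams, and the example capabilities are hypothetical; real classification came from assessment discussions, and the returned label names the kind of architectural response rather than a rank.

```python
from enum import Enum


class InvestmentPattern(Enum):
    DIFFERENTIATING = "targeted innovation"
    FOUNDATIONAL = "standardization, simplification, or platform renewal"
    COMMODITY = "containment, efficiency, and risk reduction"


def investment_pattern(differentiating: bool, value_streams_served: int,
                       foundational_threshold: int = 3) -> InvestmentPattern:
    """Hypothetical rule of thumb: differentiation first, then breadth of reuse."""
    if differentiating:
        return InvestmentPattern.DIFFERENTIATING
    if value_streams_served >= foundational_threshold:
        return InvestmentPattern.FOUNDATIONAL
    return InvestmentPattern.COMMODITY


examples = {
    "Proactive Customer Engagement": (True, 2),
    "Identity and Access Management": (False, 5),
    "Payroll Processing": (False, 1),
}
for name, (diff, streams) in examples.items():
    print(f"{name}: {investment_pattern(diff, streams).value}")
```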

Bringing architecture into the prioritization logic

From an enterprise architecture perspective, prioritization only became credible when each capability was considered together with its enabling landscape. A capability that looked attractive from a business standpoint could still be a poor near-term candidate if it depended on unresolved master data issues, deeply coupled legacy platforms, or scarce integration capacity.

On the other hand, some capabilities offered unusually high return because they sat on reusable architectural building blocks. Strengthening identity, event integration, rules management, or shared data services could unlock progress across multiple business domains. That was how identity and access management (IAM) modernization was ultimately prioritized: not as a security-only upgrade, but as a foundational capability enabling customer self-service, partner onboarding, and stronger access control across channels.

Another example involved product change. Several business units complained that launching or updating offerings took too long. Early proposals focused on replacing front-end tools. The capability assessment showed that the real issue was fragmented rules management and duplicated product configuration logic spread across legacy systems. Prioritization shifted from channel tooling to a shared rules and product definition capability, which delivered broader leverage.

Managing dependencies explicitly

Dependencies were treated as first-class inputs. We mapped which priority capabilities relied on:

- common platforms

- shared data domains

- upstream governance decisions

- scarce delivery or integration capacity

That exposed a familiar pattern: business leaders naturally prioritized front-stage capabilities, while architects could see that several of them depended on the same backstage constraints being addressed first. The capability map helped frame this not as a conflict, but as sequencing logic.
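Treating dependencies as first-class inputs means sequencing can be derived rather than argued. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) over a hypothetical capability dependency map to group capabilities into waves, where each wave depends only on earlier ones. A circular dependency would make `prepare()` raise `graphlib.CycleError`, which is itself a useful governance signal.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: capability -> capabilities it depends on.
DEPENDS_ON = {
    "Customer Self-Service": {"Identity and Access Management", "Customer Data Management"},
    "Partner Onboarding": {"Identity and Access Management"},
    "Operational Visibility": {"Event Integration"},
    "Customer Data Management": {"Data Governance"},
}


def delivery_waves(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Group capabilities into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


for number, wave in enumerate(delivery_waves(DEPENDS_ON), start=1):
    print(number, wave)
```

The backstage capabilities (data governance, event integration, IAM) land in the first wave, and the front-stage capabilities follow once their enablers are in place.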

The output: a decision framework

The final output was not a static heatmap. For each priority capability, we documented:

- why it mattered

- what type of change it required

- which architectural constraints were most relevant

- whether it belonged in the near-, mid-, or longer-term roadmap

That turned prioritization into something more useful than a workshop artifact. It created a defensible basis for sequencing investment, aligning domain roadmaps, and ensuring that the transformation portfolio reflected enterprise design logic rather than isolated demand signals.

Using the Capability Map to Drive Roadmaps, Governance, and Decisions

A capability map becomes most valuable when it stops being a diagnostic artifact and starts shaping real decisions. For us, that happened when we began using the map as the organizing structure for roadmap design, governance forums, and investment trade-offs.

Roadmap construction

Rather than building the transformation roadmap as a list of projects, we structured it around capability outcomes and the architectural changes required to achieve them. That shifted the role of projects. They became vehicles for capability improvement, not ends in themselves.

In roadmap workshops, each proposed initiative had to show:

- which capability or sub-capability it would strengthen

- what measurable outcome it supported

- what dependencies it introduced or resolved

That quickly exposed initiatives that were active but only weakly connected to strategic change. It also highlighted where multiple projects were trying to improve the same capability in uncoordinated ways.
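The roadmap tests can be checked mechanically once initiatives are recorded against capabilities. In this sketch the `Initiative` fields and example entries are hypothetical, and "uncoordinated" is approximated as more than one program targeting the same capability; a flagged overlap is a prompt for conversation, not proof of duplication.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Initiative:
    name: str
    program: str
    capability: Optional[str] = None       # capability or sub-capability strengthened
    outcome_metric: Optional[str] = None   # measurable outcome supported
    dependencies: list[str] = field(default_factory=list)  # introduced or resolved


def review_roadmap(initiatives: list[Initiative]):
    """Return (initiatives missing a capability or outcome link, capabilities targeted by several programs)."""
    unlinked = [i.name for i in initiatives if not (i.capability and i.outcome_metric)]
    programs_by_capability: dict[str, set[str]] = defaultdict(set)
    for i in initiatives:
        if i.capability:
            programs_by_capability[i.capability].add(i.program)
    overlapping = {cap: sorted(progs)
                   for cap, progs in programs_by_capability.items() if len(progs) > 1}
    return unlinked, overlapping


roadmap = [
    Initiative("Portal Refresh", "Digital Channels", "Customer Self-Service",
               "self-service completion rate", ["Identity and Access Management"]),
    Initiative("Branch Login Tool", "Retail Operations", "Customer Self-Service",
               "login success rate"),
    Initiative("Dashboard Upgrade", "Operations"),
]
unlinked, overlapping = review_roadmap(roadmap)
print(unlinked)     # ['Dashboard Upgrade']
print(overlapping)  # {'Customer Self-Service': ['Digital Channels', 'Retail Operations']}
```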

This approach improved sequencing discipline. We could distinguish between visible business enhancements and the enabling work required beneath them. A roadmap item aimed at faster customer response, for example, often depended on shared data quality, workflow orchestration, decision rules management, or API modernization. In another case, a near-term initiative to improve operational visibility was deliberately sequenced behind a Kafka-based event backbone because the business outcome depended on timely event propagation, not another reporting layer.

Governance and investment review

The map also became a governance tool. In portfolio and architecture review forums, we used capabilities as the reference point for evaluating change requests, exception decisions, and investment proposals.

That changed the quality of governance conversations. Instead of asking mainly whether a project had budget, sponsorship, or a compelling local business case, we asked:

- Does it advance a priority capability?

- Does it align with the target-state direction for that capability?

- Does it reuse or strengthen enterprise building blocks?

- Does it duplicate enablement already planned elsewhere?

This made governance less reactive and more design-led. It also gave architecture teams a stronger basis for challenging duplicative solutions without sounding purely technology-driven. For example, the architecture board approved a shared IAM platform and declined a business unit request for a standalone customer login product because the latter would have reinforced fragmentation in a priority enterprise capability.
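The four governance questions work well as an explicit checklist that records concerns rather than issuing automatic verdicts. The `Proposal` fields and the two example proposals below are hypothetical simplifications of the IAM case described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    name: str
    capability: str
    aligns_with_target_state: bool
    reuses_building_blocks: bool
    duplicates_planned_enablement: bool


def review_proposal(p: Proposal, priority_capabilities: set[str]) -> list[str]:
    """Return the concerns a review board would raise; an empty list means no objections."""
    concerns = []
    if p.capability not in priority_capabilities:
        concerns.append("does not advance a priority capability")
    if not p.aligns_with_target_state:
        concerns.append("diverges from the target-state direction")
    if not p.reuses_building_blocks:
        concerns.append("does not reuse enterprise building blocks")
    if p.duplicates_planned_enablement:
        concerns.append("duplicates enablement planned elsewhere")
    return concerns


priorities = {"Identity and Access Management", "Customer Data Management"}
shared_iam = Proposal("Shared IAM platform", "Identity and Access Management",
                      aligns_with_target_state=True, reuses_building_blocks=True,
                      duplicates_planned_enablement=False)
standalone_login = Proposal("Standalone customer login", "Identity and Access Management",
                            aligns_with_target_state=False, reuses_building_blocks=False,
                            duplicates_planned_enablement=True)

print(review_proposal(shared_iam, priorities))        # []
print(review_proposal(standalone_login, priorities))
```

The standalone login still targets a priority capability. The concerns come from how it would deliver that capability, which matches the board's reasoning.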

Decision traceability

One of the less obvious benefits was improved traceability. Because capabilities linked strategy, business outcomes, and enabling architecture, we could explain why certain decisions had been made and what assumptions they depended on.

If a steering committee chose to delay a customer-facing initiative in favor of foundational data work, that decision could be framed in capability terms rather than as an abstract architectural preference. That was especially useful in executive settings, where technology arguments alone rarely carry enough weight.

Cross-domain coordination

Many transformation decisions appear local when viewed through budget lines or system ownership, but their consequences are enterprise-wide. Capability-based governance helped surface those effects early.

When one domain proposed a specialized platform to solve an immediate need, the map helped us test whether the proposal:

- duplicated a shared capability already being developed elsewhere

- fragmented ownership of a common business ability

- created divergence from the broader target architecture

In several cases, that did not lead to rejection of the business need. It led to a different response: shared services, common platforms, or phased convergence. The same logic improved technology lifecycle governance. Where several capabilities depended on aging middleware nearing end of support, we treated renewal as an enterprise risk decision rather than a series of isolated infrastructure upgrades.

From artifact to operating instrument

Over time, the capability map became a standing management instrument rather than a one-time deliverable. It informed:

- roadmap refreshes

- quarterly investment reviews

- target-state discussions

- dependency management across programs

- application rationalization

- data ownership and governance decisions

- technology lifecycle governance

Its value was not just visual clarity. It introduced discipline into enterprise decision-making. Every major change could be evaluated in terms of the capability it improved, the architecture it required, and the broader transformation logic it either reinforced or undermined.

Conclusion

Building the capability map gave the transformation program more than a planning artifact. It created a durable decision structure that remained useful as priorities shifted, funding pressures increased, and delivery realities changed. That was its real value: not documenting an idealized view of the business, but helping the organization make better trade-offs under pressure.

Several lessons stood out.

First, capability mapping only works when it is tied to decisions. If it remains a workshop output, it quickly loses relevance. If it is connected to portfolio governance, domain roadmaps, application rationalization, data ownership decisions, and technology lifecycle governance, it becomes a living reference model for enterprise change.

Second, the level of detail matters. The model has to be specific enough to support assessment and traceability, but restrained enough to remain understandable and maintainable. Selective decomposition was essential to keeping the map practical.

Third, the strongest capability maps connect business weakness to architectural conditions. Some of the most significant constraints in our program were not in the applications themselves, but in fragmented accountability, inconsistent information semantics, aging integration patterns, and weak cross-domain coordination. The map helped surface those structural issues early enough to influence target-state design.

Finally, capability mapping improved organizational alignment. It gave leaders, architects, and delivery teams a shared way to discuss change without collapsing immediately into project language or technical detail. That common frame improved prioritization, reduced duplication, and made investment logic easier to defend.

For any enterprise undertaking digital transformation, that is the real advantage of capability mapping: it gives architecture a practical mechanism for coherence, sequencing, and sustained business-focused decision-making.

Frequently Asked Questions

What is an enterprise architecture capability map?

A capability map is a structured view of what a business must be able to do, organized by domain. Capabilities represent stable business abilities that hold regardless of the current organization or systems. They are used to frame investment decisions, identify gaps, and link strategy to architecture.

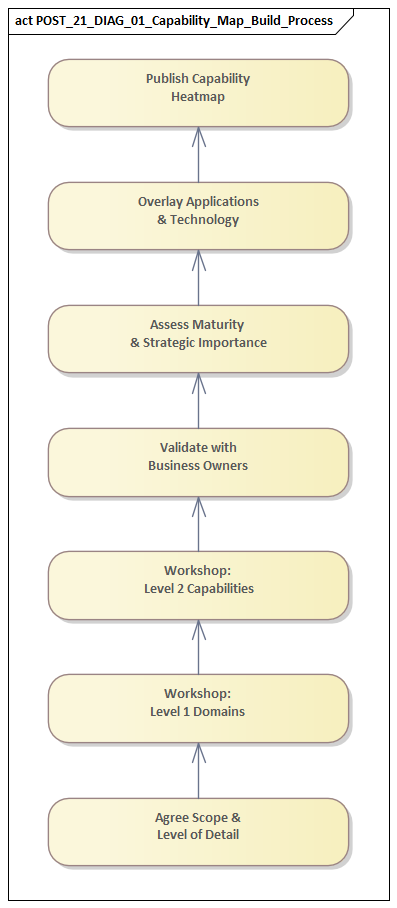

How do you build a capability map for a digital transformation?

Start by identifying L1 domains (Customer, Product, Operations, Finance, etc.), then decompose each into L2 capabilities. Validate the model with business stakeholders. Rate each capability on strategic importance and current maturity. Link capabilities to supporting applications to reveal gaps and redundancies.

How does a capability map link to applications in Sparx EA?

In Sparx EA, capabilities are modeled as elements in the Business or Strategy layer. Application Components in the Application layer are linked to capabilities via Realization or Association relationships. This creates a queryable capability-to-application map that enables portfolio analysis and investment prioritization.