
ArchiMate Modeling Standards for Architecture Teams | Best Practices

Learn how to define ArchiMate modeling standards for architecture teams, improve consistency, governance, and collaboration, and create scalable enterprise architecture practices.


Introduction

ArchiMate gives architecture teams a shared notation for describing structure, behavior, and relationships across business, application, and technology domains. The notation, however, is only the starting point. In most enterprises, the real challenge is not whether ArchiMate can represent the landscape, but whether teams use it consistently enough for models to support decisions across portfolios, domains, and delivery stages.

Without standards, models usually drift toward individual preference. One architect produces dense cross-layer diagrams. Another prefers simplified stakeholder views. A third uses different names for the same concept. Each model may be defensible on its own, yet together they become difficult to compare, govern, and reuse. That weakens one of the main reasons organizations adopt an enterprise modeling language in the first place: to build architecture knowledge once and use it many times.

A practical modeling standard resolves that problem by defining how the organization will apply ArchiMate in its own context. It sets expectations for viewpoint selection, abstraction level, naming, relationship usage, and traceability. Those expectations help teams answer everyday questions. What belongs in a solution context view? How much technology detail should appear in a business-oriented diagram? When should a relationship be shown explicitly, and when is it enough to keep it in the repository? The goal is not notation completeness. The goal is useful models that support governance, delivery, and communication.

Standards matter for another reason: enterprise architecture is cumulative. Models created today should still be understandable a year later by people who did not create them. When teams apply common conventions, repository content becomes easier to search, analyze, and connect to adjacent practices such as portfolio management, service management, risk management, and technology lifecycle planning. A standard technology view, for example, can quickly show which customer-facing services still depend on an identity platform scheduled for retirement. In that sense, modeling standards are not just about cleaner diagrams. They provide a foundation for architecture analytics and institutional memory.

At the same time, standards need to remain workable. Rules that are too loose do little to improve consistency. Rules that are too rigid usually drive people around the process rather than through it. The most effective teams strike a balance. They define a small set of mandatory conventions, support them with templates and examples, and allow controlled flexibility where the architectural question genuinely requires it.

This article explains how architecture teams can establish ArchiMate modeling standards that are pragmatic, scalable, and aligned with enterprise decision-making. It focuses less on teaching the notation itself and more on the operating discipline around it: the principles, viewpoints, governance, tooling, and adoption practices that make ArchiMate a dependable instrument for collaboration and long-term architecture management.

Why ArchiMate Standards Matter

The value of ArchiMate standards becomes clear as soon as architecture work moves beyond isolated modeling and into shared delivery and governance processes. Models are rarely created for their own sake. Teams use them to assess change impact, guide solution design, support governance reviews, explain dependencies, and inform investment decisions. In those settings, standards make models dependable. Reviewers can concentrate on the architectural issue instead of first decoding each architect’s style.

This matters even more in federated organizations, where architecture responsibility is distributed across business domains, programs, and product teams. Different teams often model the same part of the enterprise from different angles. One may emphasize capabilities and business processes. Another may focus on application services and interfaces. A third may concentrate on deployment and platform dependencies. All of those views can be valid, but without standards they are hard to assemble into a coherent enterprise picture.

That coherence has a direct effect on governance. Architecture review boards and design authorities typically need to determine whether a proposal aligns with target architecture, reuses existing services, complies with standards, and manages risk appropriately. If submitted models vary widely in scope, naming, and relationship usage, governance becomes slower and more subjective. Teams spend time clarifying the artifact instead of evaluating the decision. Standardized views create predictable inputs and make comparison across initiatives far easier.

Consider a realistic example. An architecture board reviewing an identity and access management (IAM) modernization may require every proposal to show the same minimum content: identity provider, authentication flows, consuming applications, privileged access controls, and transition dependencies. When those elements appear in a standard pattern, the board can compare options side by side. Without that pattern, each proposal has to be interpreted from scratch.

Standards also help teams model at the right level for the question being asked. Enterprise architecture becomes ineffective when a single diagram tries to satisfy every audience. Business stakeholders need views that clarify capabilities, value delivery, and organizational impact. Solution teams need enough precision to understand services, interfaces, and dependencies. Platform teams need deployment and technology context. Standards define what “good enough” looks like for each viewpoint so teams avoid both under-modeling and over-modeling.

Repository reuse and analytics depend on the same discipline. If naming and classification are inconsistent, it becomes difficult to identify duplication, assess impact, or aggregate portfolio information. If similar architecture facts are modeled in different ways, reporting becomes unreliable. Common conventions turn the repository into more than a collection of diagrams. They make it a usable knowledge base.

A second example illustrates the point. In an event-driven environment, teams may need to trace which applications publish customer events to Kafka and which downstream services consume them. If one team models topics as application interfaces, another as data objects, and a third does not model them at all, enterprise-wide analysis quickly breaks down. A standard representation of producers, topics, event contracts, and consumer services makes those questions answerable.

In the end, standards shift modeling from an individual craft to a team capability. That shift matters because enterprise architecture depends on continuity. People change roles, initiatives overlap, and stakeholders come and go. A standardized model is easier to understand, maintain, and extend over time. For architecture teams, that is the real benefit: not simply better-looking diagrams, but architecture knowledge that can be shared, governed, and acted on across the enterprise.

Core Principles for Defining Standards

Effective ArchiMate standards start from a simple premise: the standard should serve architecture work, not the other way around. The principles below provide the foundation for the more detailed rules in later sections.

1. Purpose over completeness

No model should try to describe the entire architecture unless the question genuinely demands it. Teams should model only what is needed to answer a defined question, whether that means showing business impact, clarifying integration dependencies, or illustrating a transition state. This keeps diagrams decision-oriented and prevents them from becoming visual inventories.

A solution assessment for a new claims platform, for example, may need to show customer service processes, core application services, external integrations, and hosting constraints. It does not need every server, database schema, or operational support process. The standard should make that boundary explicit.

2. Consistent abstraction

Many modeling problems come from mixing very different levels of detail in the same view. A capability map, a solution context view, and a deployment view should not operate at the same granularity. Standards should define abstraction boundaries for enterprise-level, domain-level, and solution-level content, and make clear when one level may refer to another.

This is especially important in shared repositories where strategic and delivery models sit side by side. If a strategic capability model suddenly drops into Kubernetes namespaces or firewall zones, the audience loses the thread. Likewise, a deployment view that includes broad strategic goals but omits runtime dependencies is unlikely to help engineers or reviewers.

3. Semantic clarity over diagram density

ArchiMate can express rich relationships, but more lines do not automatically create more understanding. Teams should show only the relationships that materially improve interpretation for the intended audience. The repository can preserve deeper traceability without forcing every dependency onto a stakeholder-facing diagram.

A common failure mode is the “everything connected to everything” view. Technically it may be accurate. Practically it is unreadable. Standards should therefore favor diagrams that explain rather than diagrams that merely encode.

4. Controlled vocabulary and naming discipline

Naming consistency is one of the strongest indicators of repository quality. If the same concept is labeled differently across teams, confidence in the repository drops quickly. Standards should define naming conventions by element type, treatment of acronyms, singular versus plural usage, and rules for reusing existing elements before creating new ones.

For instance, if one team uses “Customer Identity Service,” another uses “CIAM Platform,” and a third uses “Login Service” for the same enterprise capability, impact analysis becomes unreliable. Naming discipline is not cosmetic; it is essential for reuse, analytics, and governance comparability.
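The reuse-before-create rule can be supported by a small controlled vocabulary. The following is a minimal sketch, assuming the organization keeps a list of canonical element names with known aliases; the `CANONICAL_NAMES` table and the function names are hypothetical and not part of any ArchiMate tool.

```python
from typing import Optional

# Hypothetical controlled vocabulary: canonical element name -> known aliases.
# The entries reuse the example names from the text above.
CANONICAL_NAMES = {
    "Customer Identity Service": ["CIAM Platform", "Login Service"],
}


def normalize(name: str) -> str:
    """Lower-case and collapse whitespace so trivial variants compare equal."""
    return " ".join(name.lower().split())


def build_alias_index(vocabulary: dict[str, list[str]]) -> dict[str, str]:
    """Map every normalized canonical name and alias to its canonical name."""
    index = {}
    for canonical, aliases in vocabulary.items():
        for label in [canonical, *aliases]:
            index[normalize(label)] = canonical
    return index


def suggest_existing_element(proposed: str, index: dict[str, str]) -> Optional[str]:
    """Return the canonical name to reuse, or None if the name looks new."""
    return index.get(normalize(proposed))


index = build_alias_index(CANONICAL_NAMES)
print(suggest_existing_element("login  service", index))   # Customer Identity Service
print(suggest_existing_element("Payment Gateway", index))  # None
```

A lookup like this only catches aliases someone has already recorded, so it complements peer review rather than replacing it.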

5. Traceability by design

A good standard makes it possible to follow a line of reasoning across layers and lifecycle states without requiring every diagram to show the entire chain. Teams should define minimum traceability between strategy, business, application, data, and technology concepts, as well as between baseline, transition, and target architectures.

An IAM target-state model, for example, should allow a reviewer to trace from security principles and access-management capabilities down to identity services, integration points, and platform choices. If that chain is missing, the model may still look polished while failing to support decision-making.

6. Governable flexibility

No standard will fit every initiative perfectly. The answer is not to abandon standards, but to distinguish mandatory conventions from recommended practices and to define how exceptions are handled. That keeps the standard usable while still giving governance bodies a reliable basis for review.

Flexibility matters most at the edges. A merger integration, for example, may require temporary viewpoints or transitional naming that would not be appropriate in steady-state architecture. The standard should allow such cases without turning every exception into a precedent.

Taken together, these principles establish the logic for the rest of the standard. Viewpoints, relationship patterns, governance checks, and tooling decisions should reinforce them rather than work against them.

Standardizing Viewpoints, Layers, and Relationships

Once the principles are in place, teams need to turn them into operational modeling rules. This is where standards become practical. Broad agreement on quality goals is not enough; architects also need explicit guidance on how to structure models so that similar questions produce comparable outputs.

Define a small set of mandatory viewpoints

Most organizations do not need dozens of viewpoint types in regular use. In practice, they usually gain more from a stable set of reusable views that support common decisions. Typical examples include:

- capability-to-application mapping

- business process support view

- solution context view

- integration landscape view

- technology deployment view

- transition architecture view

For each viewpoint, the standard should define:

- purpose

- intended audience

- required elements

- optional elements

- prohibited detail

- expected abstraction level

This is a direct application of the principles already discussed. A solution context view, for example, may require business actors, business services, application services, and major interfaces, while explicitly excluding low-level infrastructure unless it is relevant to the decision. A transition architecture view may require baseline and target markers, major work-package impacts, and capability changes, while leaving implementation detail to delivery documentation.
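One way to make such a viewpoint definition checkable is to record it as data. The sketch below assumes a view can be summarized by the element types it uses; the `ViewpointSpec` class, the type names, and the optional and prohibited lists are illustrative choices, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ViewpointSpec:
    """Illustrative definition of one standard viewpoint."""
    name: str
    purpose: str
    audience: str
    abstraction: str
    required: frozenset
    optional: frozenset = frozenset()
    prohibited: frozenset = frozenset()


# Assumed content for a solution context view, following the example above.
SOLUTION_CONTEXT = ViewpointSpec(
    name="Solution context view",
    purpose="Show the business and application context of a solution",
    audience="Design authority and solution teams",
    abstraction="solution level",
    required=frozenset({"BusinessActor", "BusinessService",
                        "ApplicationService", "ApplicationInterface"}),
    optional=frozenset({"ApplicationComponent"}),
    prohibited=frozenset({"Node", "Device", "CommunicationNetwork"}),
)


def check_view(spec, element_types):
    """Return findings for a view, given the element types it contains."""
    used = set(element_types)
    findings = []
    for missing in sorted(spec.required - used):
        findings.append(f"missing required element type: {missing}")
    for banned in sorted(used & spec.prohibited):
        findings.append(f"prohibited detail present: {banned}")
    allowed = spec.required | spec.optional | spec.prohibited
    for other in sorted(used - allowed):
        findings.append(f"element type not covered by the viewpoint: {other}")
    return findings


view_types = ["BusinessActor", "BusinessService", "ApplicationService", "Node"]
for finding in check_view(SOLUTION_CONTEXT, view_types):
    print(finding)
```

A finding about prohibited detail is a prompt for a reviewer, not an automatic rejection, since the standard allows infrastructure where it is relevant to the decision.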

Define layer usage by viewpoint

One of the most common sources of inconsistency is uncontrolled movement between business, application, and technology layers. Standards should therefore state the primary layer of each viewpoint, which secondary layers may be referenced, and under what conditions cross-layer relationships should be shown.

For example:

- A capability map is primarily a strategy- and business-level view (in ArchiMate 3.x, capability is a strategy element) and may reference supporting application services, but should not normally descend into nodes and network paths.

- A deployment view is primarily a technology-layer view and may reference deployed application components, but should not attempt to model the full business operating model.

- A solution context view may span business and application layers, with limited technology references where they are architecturally significant.

These boundaries keep views readable while preserving traceability across the repository.

Standardize preferred relationship patterns

ArchiMate allows multiple valid ways to connect concepts. That flexibility is useful, but in enterprise practice it often creates inconsistency. Teams should define approved relationship patterns for recurring scenarios so similar architecture facts are modeled in similar ways.

Examples might include:

- business process realizes business service

- application component realizes application service

- application service serves business process or business role, depending on intent

- application component accesses data object

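- node hosts system software and the artifacts that realize an application component (ArchiMate has no “hosts” relationship, so this is typically modeled with assignment and realization)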

The standard does not need to list every possible ArchiMate relationship. It should focus on the patterns the organization uses most often and provide examples of correct use.

A practical micro-example helps here. In a customer onboarding domain, the standard may define that the “Customer Verification API” is modeled as an application service realized by the “KYC Platform” application component, while the “Onboard Customer” business process is served by that service. If every team follows that pattern, reviewers can interpret onboarding, lending, and fraud-prevention models in the same way.
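Approved patterns of this kind can be checked mechanically. The following sketch assumes relationships are available as (source, relationship, target) triples and that element types are known by name; `APPROVED_PATTERNS` covers only a subset of the examples listed earlier, and the data structures are hypothetical rather than a tool API.

```python
# Approved (source type, relationship, target type) patterns.
# An assumed subset of the organization's standard, for illustration only.
APPROVED_PATTERNS = {
    ("BusinessProcess", "realization", "BusinessService"),
    ("ApplicationComponent", "realization", "ApplicationService"),
    ("ApplicationService", "serving", "BusinessProcess"),
    ("ApplicationService", "serving", "BusinessRole"),
    ("ApplicationComponent", "access", "DataObject"),
}


def find_unapproved(elements, relationships):
    """Return the relationships whose type pattern is not on the approved list.

    elements: mapping of element name -> element type
    relationships: list of (source name, relationship type, target name)
    """
    issues = []
    for source, rel, target in relationships:
        pattern = (elements[source], rel, elements[target])
        if pattern not in APPROVED_PATTERNS:
            issues.append((source, rel, target))
    return issues


elements = {
    "KYC Platform": "ApplicationComponent",
    "Customer Verification API": "ApplicationService",
    "Onboard Customer": "BusinessProcess",
}
relationships = [
    ("KYC Platform", "realization", "Customer Verification API"),
    ("Customer Verification API", "serving", "Onboard Customer"),
    ("KYC Platform", "association", "Onboard Customer"),  # generic shortcut
]

print(find_unapproved(elements, relationships))
```

Here only the generic association is reported, which matches the intent of discouraging shortcuts when a more precise pattern exists.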

Define relationship constraints and simplification rules

The standard should also clarify which technically valid relationships are discouraged or restricted in practice. Common examples include:

- discouraging generic association when a more precise relationship is available

- restricting direct cross-layer shortcuts where an intermediate concept should exist

- defining when derived relationships may be shown for readability

- distinguishing between repository traceability and diagram visibility

This is where semantic clarity becomes practical. Not every valid relationship needs to appear in every diagram. Stakeholder views should stay readable, while the underlying repository preserves rigor.

For instance, a payment modernization diagram shown to executives may display that a payment orchestration service supports settlement and fraud-checking capabilities. The repository may also contain detailed traces to message schemas, integration flows, and hosting nodes, but those should not all appear in the board-level view.

Standardize visual conventions

View composition matters almost as much as the metamodel. Even when architects use the same elements and relationships, diagrams can still vary enough in layout and labeling to confuse reviewers. Standards should therefore define conventions such as:

- left-to-right or top-to-bottom flow

- grouping by domain, lifecycle state, or ownership

- treatment of external or out-of-scope elements

- color semantics, if colors are used

- labeling rules for reused elements and interfaces

These conventions may seem cosmetic, but they improve review efficiency and reduce cognitive load. A reviewer should not have to learn a new visual language every time a new model appears.

Tie viewpoints to governance checkpoints

A standard becomes much stronger when it is linked directly to delivery practice. If projects must submit a solution overview, integration view, and transition view at defined lifecycle stages, each artifact should already have a standard ArchiMate pattern behind it. That ensures modeling is not optional decoration, but part of the architecture operating model.

A realistic example is a phased retirement of a legacy directory service. Before approval, the architecture board may require a transition architecture view showing the current authentication flows, interim coexistence with the new identity provider, cutover dependencies, and decommission triggers. Because the viewpoint is standardized, the board can focus on sequencing and risk rather than on diagram interpretation.

In short, the principles from the previous section become actionable here: purpose defines the viewpoint, abstraction defines its scope, semantic clarity limits clutter, naming supports reuse, and traceability connects the resulting views across the repository.

Governance, Quality Assurance, and Model Lifecycle Management

Defining standards is only the starting point. Their value depends on how they are governed, how model quality is assessed, and how repository content is maintained over time. Without that discipline, even a well-designed standard will drift into inconsistent practice.

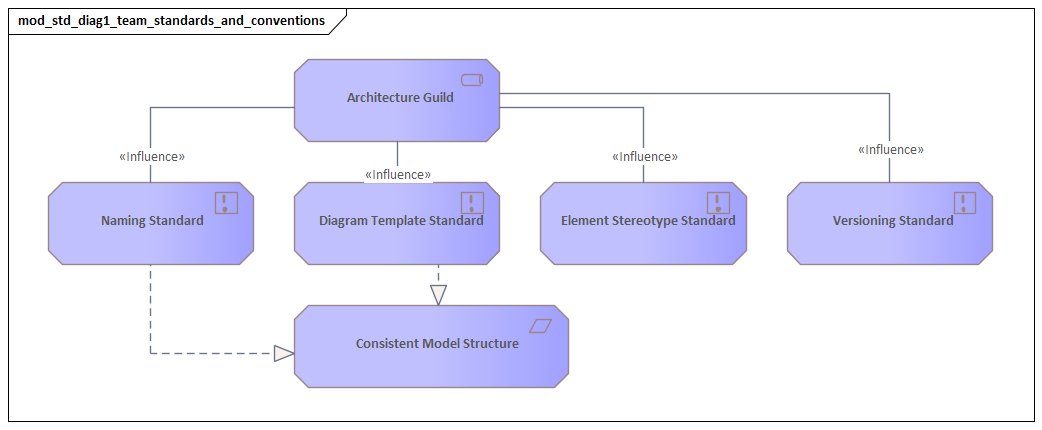

Assign clear ownership

A practical governance model starts with explicit ownership. Teams should define:

- who owns the standard

- who approves changes to it

- who is accountable for repository quality

- who is responsible for the accuracy of specific models

A common pattern is centralized stewardship of the standard by the enterprise architecture function, with distributed accountability for model content across domain and solution architects. That balance preserves consistency without losing local knowledge.

Review for both conformance and usefulness

Notation compliance on its own is not enough. Quality assurance should operate at two levels:

- Conformance quality: Does the model follow the defined conventions for viewpoint, naming, abstraction, and relationship usage?

- Architectural quality: Is the model actually useful for decision-making? Does it show the dependencies, impacts, and constraints relevant to the audience?

This distinction matters because a model can be formally correct and still fail to support governance or delivery. Reviews should test both. An architecture board decision may hinge less on whether the notation is perfect than on whether the model clearly shows reuse of an existing identity service or the impact of introducing a new event broker.

Use lightweight review criteria

To keep standards practical, teams should define a concise review model. Typical checks include:

- mandatory viewpoints are present for the lifecycle stage

- existing repository elements were considered before creating new ones

- naming and classification follow the standard

- traceability exists to relevant target-state decisions or principles

- the model reflects approved scope and abstraction level

These checks can be built into design authority reviews, governance gates, or portfolio checkpoints. The aim is to normalize model quality without creating unnecessary overhead.

Automate repeatable controls

Many tools can validate naming patterns, mandatory attributes, missing relationships, orphaned elements, and duplicate candidates. Automation is most useful for high-volume, repeatable controls. Human review should focus on semantics, stakeholder relevance, and architectural judgment.

That division of labor is important. Automation can tell you whether an application component is missing an owner attribute. It cannot tell you whether the diagram explains the architectural trade-off clearly enough for a steering committee to make a decision.
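Two of the repeatable controls mentioned above, orphaned elements and duplicate candidates, are simple enough to sketch. The example assumes element names and relationship pairs have been exported from the repository; the function names and sample data are hypothetical.

```python
from collections import defaultdict


def find_orphans(element_names, relationships):
    """Return names of elements that take part in no relationship, sorted."""
    connected = set()
    for source, target in relationships:
        connected.add(source)
        connected.add(target)
    return sorted(set(element_names) - connected)


def find_duplicate_candidates(element_names):
    """Group names that differ only by case, spacing, or punctuation."""
    groups = defaultdict(list)
    for name in element_names:
        key = "".join(ch for ch in name.lower() if ch.isalnum())
        groups[key].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


names = ["Payment Gateway", "payment-gateway", "Fraud Engine", "Legacy Batch Scheduler"]
links = [("Payment Gateway", "Fraud Engine")]

print(find_orphans(names, links))
print(find_duplicate_candidates(names))
```

Both checks produce candidates for a human to confirm. An element may be legitimately unconnected in an early draft, and two similar names may refer to different things.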

Manage model lifecycle states

Architecture models lose value when they are not maintained after initial delivery. Teams should define lifecycle states such as:

- draft

- in review

- approved

- implemented

- superseded

- retired

For each state, the standard should specify who may update the model, what evidence is needed for status changes, and how the model should be interpreted. This helps distinguish conceptual target architecture from implemented reality and prevents outdated transition views from being treated as current-state facts.

A useful micro-example is cloud migration. A target deployment view may show a customer analytics platform running on a managed Kubernetes service. Until the migration is complete, that model should not be treated as the current production state. Clear lifecycle status prevents governance teams, auditors, and operations staff from drawing the wrong conclusion.
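Lifecycle states become enforceable once the allowed transitions are written down. The sketch below uses the six states listed above; the transition table and the evidence rule are assumptions made for illustration, and each organization would define its own.

```python
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in review"
    APPROVED = "approved"
    IMPLEMENTED = "implemented"
    SUPERSEDED = "superseded"
    RETIRED = "retired"


# Assumed transition rules; the article names the states but not the paths.
ALLOWED = {
    State.DRAFT: {State.IN_REVIEW},
    State.IN_REVIEW: {State.DRAFT, State.APPROVED},
    State.APPROVED: {State.IMPLEMENTED, State.SUPERSEDED},
    State.IMPLEMENTED: {State.SUPERSEDED, State.RETIRED},
    State.SUPERSEDED: {State.RETIRED},
    State.RETIRED: set(),
}


def transition(current, new, evidence):
    """Return the new state if the move is allowed and evidence is given."""
    if new not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {new.value} is not an allowed transition")
    if not evidence.strip():
        raise ValueError("a status change needs supporting evidence")
    return new


def is_current_state_fact(state):
    """Only implemented models should be read as describing production."""
    return state is State.IMPLEMENTED


state = transition(State.DRAFT, State.IN_REVIEW, "submitted to design authority")
print(state.value, is_current_state_fact(state))
```

In the cloud migration example, the target deployment view would stay in the approved state until the migration is complete, so `is_current_state_fact` would return `False` for it.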

Establish review and archival cycles

Some models should be updated alongside project milestones. Others should be reviewed periodically as part of domain architecture management. Content that is no longer relevant should be archived rather than left active and ambiguous. Versioning rules should clarify when to update an existing model, create a new transition state, or baseline a target view at a point in time.

This is particularly important for technology lifecycle governance, where approved standards, tolerated exceptions, and retirement dates need to remain explicit and current. If baseline, transition, and target content are not governed consistently, roadmap analysis and impact assessment quickly become unreliable.

Governance, quality assurance, and lifecycle management are what turn standards into an operating discipline. They are what keep the repository trustworthy enough to support decisions over time.

Tooling, Repository Management, and Collaboration Practices

Standards become sustainable only when tooling and collaboration reinforce them. In many teams, inconsistency comes less from disagreement with the standard than from the practical realities of how models are created, stored, and shared.

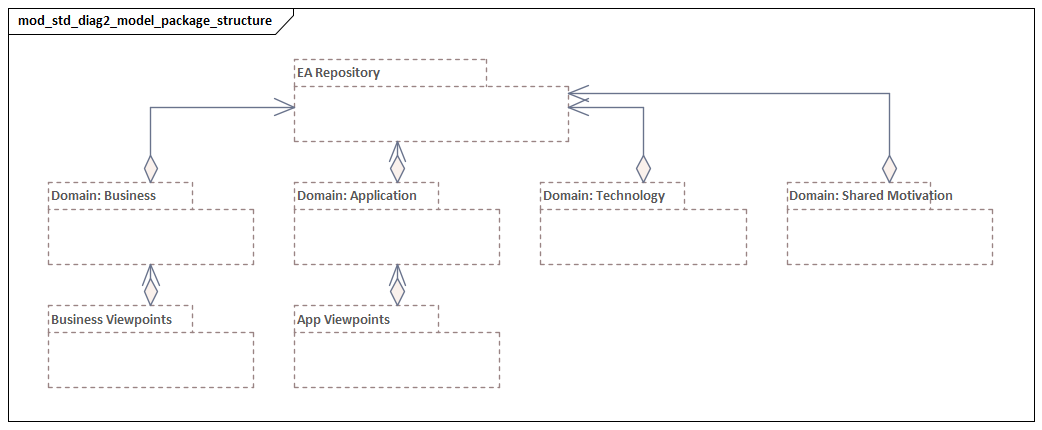

Structure the repository deliberately

Teams need a clear repository structure for:

- enterprise reference content

- domain architecture content

- solution or initiative workspaces

- reusable building blocks

- baseline, transition, and target states

Without that structure, architects struggle to determine whether a concept already exists, and reuse declines. A common approach is to separate governed shared content from project workspaces, then define promotion rules for moving initiative-specific content into reusable enterprise assets. This directly supports naming, traceability, and lifecycle management.

Configure tools to reinforce standards

Where possible, tools should embody the standard rather than rely on users to remember it. Useful configurations include:

- viewpoint templates

- restricted palettes for approved element and relationship types

- mandatory metadata fields

- naming pattern validation

- predefined visual conventions

For example, application components may require attributes such as owner, lifecycle status, criticality, and external inventory ID. Business capabilities may require domain, level, and strategic relevance. Kafka topics might require data classification, owning domain, and retention policy. These attributes make the repository useful for analysis and integration, not just for diagramming.
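Mandatory metadata of this kind can be expressed as a small rule table. This is a sketch under stated assumptions: the attribute keys are invented spellings of the attributes named above, and "KafkaTopic" stands for an organization-specific classification rather than a native ArchiMate element type.

```python
# Assumed mandatory attributes per element classification.
REQUIRED_ATTRIBUTES = {
    "ApplicationComponent": ["owner", "lifecycle_status", "criticality", "inventory_id"],
    "Capability": ["domain", "level", "strategic_relevance"],
    "KafkaTopic": ["data_classification", "owning_domain", "retention_policy"],
}


def missing_attributes(element_type, attributes):
    """Return required attribute keys that are absent or empty, in rule order."""
    required = REQUIRED_ATTRIBUTES.get(element_type, [])
    return [key for key in required if not attributes.get(key)]


component = {"owner": "Payments Domain", "criticality": "high", "inventory_id": ""}
print(missing_attributes("ApplicationComponent", component))
# ['lifecycle_status', 'inventory_id']
```

Element types without an entry in the table are treated as having no mandatory attributes, which keeps the check from blocking content the standard does not yet cover.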

Integrate with adjacent repositories

Architecture repositories become far more valuable when they connect to application inventories, CMDBs, technology standards, risk registers, and project portfolios. Full synchronization is not always necessary, but there should be a deliberate integration strategy built on shared identifiers, clear ownership, and controlled imports.

This supports practical questions such as:

- Which capabilities depend on technologies nearing end of support?

- Which initiatives affect the same application service?

- Which business processes rely on a platform with unresolved risk?

These use cases depend on the consistency and traceability established in earlier sections.
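The first of those questions reduces to a graph traversal once dependencies and lifecycle dates share identifiers. The following is a minimal sketch with invented sample data; the element names, dates, and the `DEPENDS_ON` structure are all hypothetical stand-ins for content that would come from the repository and a technology inventory.

```python
from datetime import date

# Hypothetical dependency edges: element -> elements it depends on.
DEPENDS_ON = {
    "Customer Onboarding": ["Customer Verification Service"],
    "Customer Verification Service": ["KYC Platform"],
    "KYC Platform": ["Legacy Directory Service"],
    "Payments": ["Payment Orchestration Service"],
    "Payment Orchestration Service": ["Payments Engine"],
    "Payments Engine": ["Managed Kubernetes"],
}

# Hypothetical end-of-support dates, as they might be imported from an inventory.
END_OF_SUPPORT = {
    "Legacy Directory Service": date(2025, 6, 30),
    "Managed Kubernetes": date(2030, 1, 1),
}

CAPABILITIES = ["Customer Onboarding", "Payments"]


def reachable(start):
    """Return every element that `start` depends on, directly or indirectly."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in DEPENDS_ON.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def capabilities_at_risk(cutoff):
    """Map each capability to technologies whose support ends on or before cutoff."""
    at_risk = {}
    for capability in CAPABILITIES:
        expiring = sorted(
            tech for tech in reachable(capability)
            if tech in END_OF_SUPPORT and END_OF_SUPPORT[tech] <= cutoff
        )
        if expiring:
            at_risk[capability] = expiring
    return at_risk


print(capabilities_at_risk(date(2026, 1, 1)))
```

The traversal only works if every team models the chain from capability to technology in the same way, which is the practical payoff of the relationship patterns defined earlier.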

Support shared modeling workflows

Architecture modeling is rarely a solo activity. Standards are applied more consistently when teams use shared workflows such as:

- peer reviews

- model pairing for complex changes

- reuse checks before creating new elements

- regular repository curation sessions

These practices reduce duplicate modeling, surface ambiguity early, and make standards easier to learn in day-to-day work.

Separate working models from publication views

Architects need space to explore structure and compare options before producing stakeholder-facing diagrams. If draft material is mixed directly into governed repository areas, quality deteriorates quickly. Teams should support exploratory workspaces with lighter controls, then require review before content is promoted into shared views and reusable objects.

This is a practical expression of governable flexibility: allow experimentation, but protect the integrity of the shared knowledge base.

Provide accessible guidance

Standards are easier to adopt when guidance is embedded in everyday work. Useful support includes:

- quick-reference patterns

- approved example models

- tool-based help

- onboarding materials

- community forums or office hours

When tooling, repository design, and collaboration practices line up, standards stop feeling like abstract rules and become the normal way architecture work gets done.

Adoption Strategy, Training, and Continuous Improvement

Even a strong standard will fail if it is published as a document and left to individual interpretation. Adoption needs to be managed as a change initiative, with clear scope, support, and feedback loops.

Adopt by role and use case

Not everyone needs the same level of ArchiMate proficiency. Enterprise architects, domain architects, solution architects, and specialist teams interact with the standard in different ways. The adoption model should define:

- who must create models

- who must review them

- who only needs to interpret them

- which viewpoints are mandatory for each role

This role-based approach reduces resistance and focuses training effort where it matters most.

Start with priority scenarios

Adoption works best when teams see immediate value. Rather than beginning with abstract policy training, start with a few high-value scenarios such as project initiation, integration design, transition planning, or impact assessment. Pilot the standard in visible initiatives, use the resulting views in governance forums, and show how consistency improves review speed and clarity.

Good pilots are usually concrete efforts. IAM modernization is one. Kafka-based event integration is another. A technology refresh program tied to end-of-support deadlines is a third. These scenarios generate real architectural questions and expose whether the standards for viewpoints, relationships, and governance are practical.

Train for capability, not just notation

Most architects do not struggle because they cannot recognize ArchiMate symbols. They struggle because they are unsure how to apply the organization’s standards consistently. Effective training should therefore combine:

- the organization’s modeling conventions

- worked examples from real architecture scenarios

- hands-on practice in the selected toolset

Short clinics on topics such as capability mapping, transition modeling, service decomposition, or event architecture are often more effective than generic training on the full language.

Embed support mechanisms

Sustained adoption depends on accessible support. Useful mechanisms include:

- modeling champions or practice leads

- office hours

- peer-learning sessions

- curated example libraries

- channels for resolving edge cases

These mechanisms create a feedback loop between practice and standard. Repeated questions often reveal where guidance is unclear, too rigid, or incomplete.

Improve the standard through evidence

Standards should evolve based on observed outcomes, not opinion alone. Teams should periodically review indicators such as:

- model reuse rates

- common validation failures

- time spent clarifying artifacts in governance

- frequency of exceptions

- stakeholder satisfaction with architecture views

These measures help identify whether issues come from tool friction, unclear standards, or capability gaps.
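Some of these indicators can be computed directly from repository exports. The sketch below assumes one possible definition of reuse rate, the share of elements that appear in more than one view, and a flat list of validation failure labels; both definitions are assumptions, and a team may prefer different ones.

```python
from collections import Counter


def reuse_rate(views):
    """Share of distinct elements that appear in more than one view.

    views: mapping of view name -> collection of element names
    """
    usage = Counter(el for elements in views.values() for el in set(elements))
    if not usage:
        return 0.0
    reused = sum(1 for count in usage.values() if count > 1)
    return reused / len(usage)


def top_validation_failures(failures, n=3):
    """Return the n most frequent failure labels with their counts."""
    return Counter(failures).most_common(n)


views = {
    "Onboarding context": {"KYC Platform", "Customer Verification API"},
    "Lending context": {"Customer Verification API", "Loan Engine"},
    "Fraud context": {"Customer Verification API"},
}
failures = ["naming", "missing owner", "naming", "orphaned element", "naming"]

print(round(reuse_rate(views), 2))
print(top_validation_failures(failures, n=1))
```

Tracked over time, a falling reuse rate or a recurring failure label points to where guidance or tooling needs attention.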

Treat the standard as a product

A useful mindset is to manage the standard as a product with its own roadmap. That roadmap may include:

- new viewpoint templates

- stronger repository integrations

- revised review checklists

- additional guidance for cloud, data, or platform domains

- improved automation and reporting

This reinforces the central point of the article: ArchiMate standards are not static documentation. They are a managed capability that should become easier to use and more valuable over time.

Conclusion

ArchiMate modeling standards are most effective when they shape how architecture teams work, not just how they draw. Their value lies in making architecture knowledge consistent enough to compare, govern, and reuse across the enterprise.

The strongest standards begin with a few clear principles: model for purpose, keep abstraction consistent, favor semantic clarity over density, enforce naming discipline, design for traceability, and allow controlled flexibility. From there, teams can define a manageable set of viewpoints, standard relationship patterns, and practical governance checks. Tooling, repository structure, and collaboration practices should reinforce those choices, while adoption and training should focus on real delivery scenarios rather than notation theory alone.

When these elements come together, models become easier to trust. Governance reviews move faster. Teams reuse more architecture knowledge instead of recreating it. Repositories become useful for impact analysis, roadmap planning, and technology risk assessment rather than serving as static diagram stores. That is what makes architecture board decisions, IAM transformation, Kafka-based event design, and technology lifecycle governance easier to manage at scale.

For most organizations, the goal is not perfect standardization. It is disciplined usefulness at scale. Start with a small number of high-value conventions, connect them to governance and tooling, and refine them through practice. Treated as a living capability, ArchiMate modeling standards can help architecture teams produce work that is clearer, more reusable, and more influential in enterprise decision-making.

Frequently Asked Questions

What is architecture governance in enterprise architecture?

Architecture governance is the set of practices, processes, and standards that ensure architecture decisions are consistent, traceable, and aligned to organizational strategy. It typically includes an Architecture Review Board (ARB), architecture principles, modeling standards, and compliance checking.

How does ArchiMate support architecture governance?

ArchiMate supports governance by providing a standard language that makes architecture proposals comparable and reviewable. Governance decisions, architecture principles, and compliance requirements can be modeled with motivation elements and traced to the architectural elements they constrain.

What are architecture principles and how are they modeled?

Architecture principles are fundamental rules that guide architecture decisions. In ArchiMate, they are modeled as Principle elements, which belong to the motivation elements of the language. They are often linked to the Goals and Drivers that justify them, and connected through realization or influence relationships to the requirements and constraints they impose on design decisions.